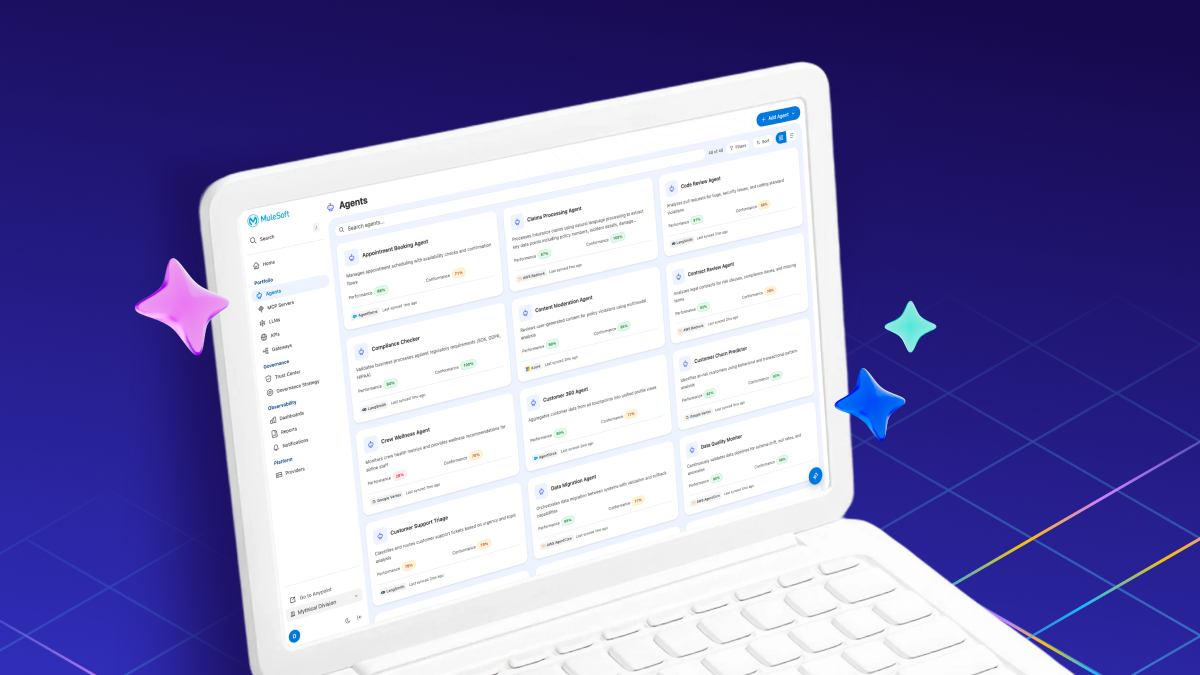

Adoption of the Model Context Protocol (MCP) has accelerated rapidly since the protocol’s introduction in November 2024. Enterprises now manage dozens to hundreds of MCP servers, which extend AI agent capabilities by connecting agents to external data sources and APIs. The Agent-to-Agent (A2A) Protocol followed in April 2025, enabling autonomous agents to communicate directly without human intervention. More recently, Agent Skills have begun to appear across enterprise infrastructure. This growth has created three security gaps: teams lack visibility into which tools and agents are deployed, manual security reviews can’t scale to match deployment velocity, and compliance frameworks require audit trails that don’t exist for autonomous AI agents.

Organizations face three kinds of risk from unvetted MCP servers, A2A agents, and Skills: inadvertent access to sensitive data systems, compliance violations under SOX and GDPR that can result in regulatory penalties, and operational disruptions when vulnerable tools or malicious agents are discovered only after deployment. Security teams report that manual review processes can add several weeks to each AI application deployment, creating a backlog that grows as AI adoption accelerates. Incomplete tracking of tools and agents also leads to audit failures, creating regulatory exposure that compliance teams struggle to quantify.