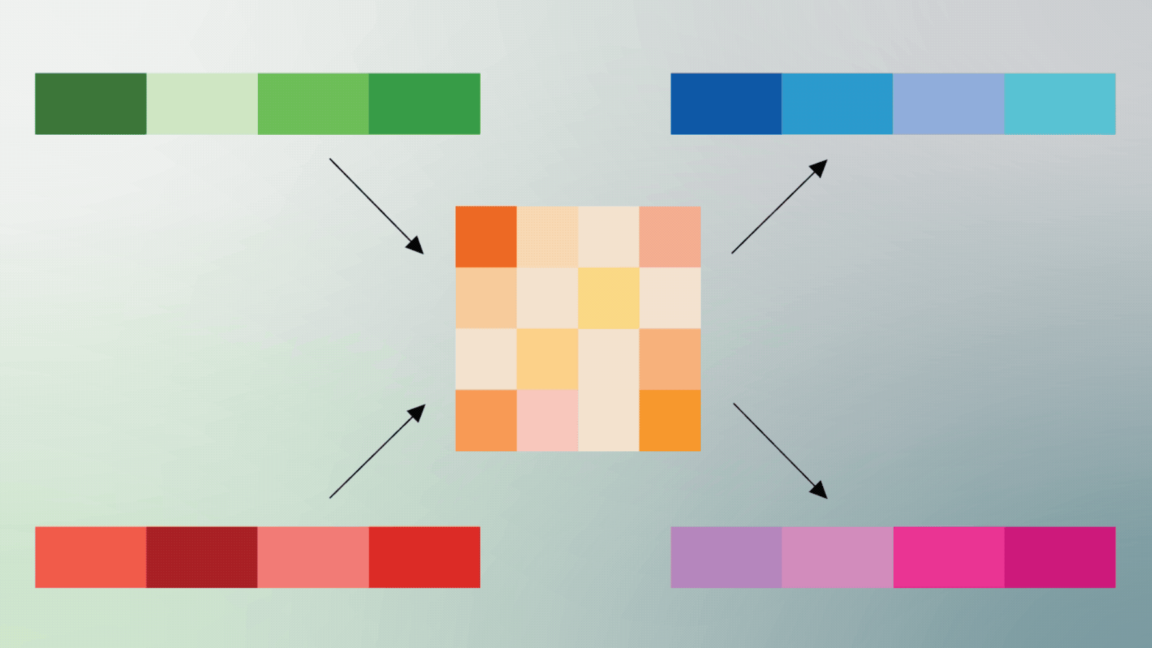

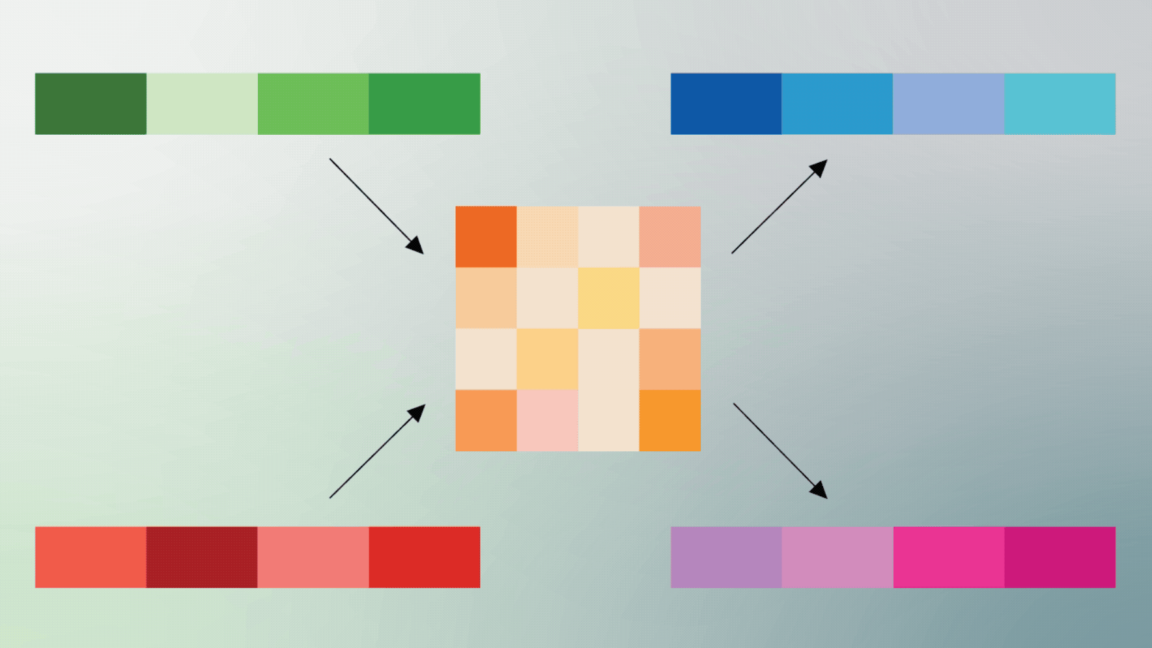

2026-04-17

12 min read

This post is also available in 日本語 and 한국어.

Running inference within 50 ms of 95% of the world's Internet-connected population means being ruthlessly efficient with GPU memory. Last year we improved memory utilization with Infire, our Rust-based inference engine, and eliminated cold starts with Omni, our model scheduling platform. Now we are tackling the next big bottleneck in our inference platform: model weights.

Generating a single token from an LLM requires reading every model weight from GPU memory. On the NVIDIA H100 GPUs we use in many of our datacenters, the tensor cores can process data nearly 600 times faster than memory can deliver it, so the bottleneck is not compute but memory bandwidth. Every byte of weights removed is a byte that never has to cross the memory bus.

To solve this problem, we built Unweight: a lossless compression system that makes model weights 15–22% smaller while preserving bit-exact outputs, without relying on any special hardware. The core breakthrough is that decompressing weights in fast on-chip memory and feeding them directly to the tensor cores avoids an extra round trip through slow main memory. Unweight's runtime offers multiple execution strategies (some prioritize simplicity, others minimize memory traffic), and an autotuner picks the best one for each weight matrix and batch size.

This post dives into how Unweight works. In the spirit of greater transparency, and to encourage innovation in this rapidly developing space, we're also publishing a technical paper and open sourcing the GPU kernels.

Our initial results on Llama-3.1-8B show ~30% compression of the Multi-Layer Perceptron (MLP) weights alone. Because Unweight is applied selectively to the parameters used for decoding, this translates to a 15–22% reduction in model size and ~3 GB of VRAM savings. As shown in the graphic below, this lets us squeeze more out of our GPUs and run more models in more places, making inference cheaper and faster on Cloudflare's network.
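As a rough sanity check on those numbers, the arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope estimate, not Unweight's own accounting: the layer shapes come from the publicly available Llama-3.1-8B configuration rather than from this post, weights are assumed to be stored in BF16 (2 bytes each), and the function names are purely illustrative.

```python
# Back-of-the-envelope estimate of the savings quoted above.
# ASSUMPTIONS (not from this post): shapes follow the public
# Llama-3.1-8B config, and weights are stored as 2-byte BF16 values.
HIDDEN = 4096          # model (hidden) dimension
INTERMEDIATE = 14336   # MLP intermediate dimension
LAYERS = 32            # number of transformer layers
VOCAB = 128256         # vocabulary size
KV_DIM = 1024          # 8 key/value heads x 128 dims per head
BYTES_PER_PARAM = 2    # BF16
MLP_COMPRESSION = 0.30  # "~30%" MLP compression, from the post


def mlp_params() -> int:
    """Parameters in the gate, up, and down projections of every layer."""
    return LAYERS * 3 * HIDDEN * INTERMEDIATE


def total_params() -> int:
    """All parameters: MLP, attention, norms, embeddings, output head."""
    attention = 2 * HIDDEN * HIDDEN + 2 * HIDDEN * KV_DIM  # q, o + k, v
    norms = 2 * HIDDEN
    per_layer = 3 * HIDDEN * INTERMEDIATE + attention + norms
    embeddings = 2 * VOCAB * HIDDEN  # input embedding + untied output head
    return LAYERS * per_layer + embeddings + HIDDEN  # + final norm


def savings() -> tuple[float, float]:
    """Return (bytes saved, fraction of the whole model saved)."""
    saved_bytes = mlp_params() * BYTES_PER_PARAM * MLP_COMPRESSION
    total_bytes = total_params() * BYTES_PER_PARAM
    return saved_bytes, saved_bytes / total_bytes


if __name__ == "__main__":
    saved, fraction = savings()
    print(f"saved {saved / 1e9:.2f} GB, {fraction:.1%} of the model")
    # prints: saved 3.38 GB, 21.1% of the model
```

Under these assumptions the MLP projections account for roughly 5.6 billion of the model's 8.03 billion parameters, which is why compressing only those matrices by ~30% is enough to land near the upper end of the 15–22% range.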

Unweight: how we compressed an LLM 22% without sacrificing quality

Running LLMs across Cloudflare’s network requires us to be smarter and more efficient about GPU memory bandwidth. That’s why we developed Unweight, a lossless inference-time compression system that achieves up to a 22% model footprint reduction, so that we can deliver faster and cheaper inference than ever before.