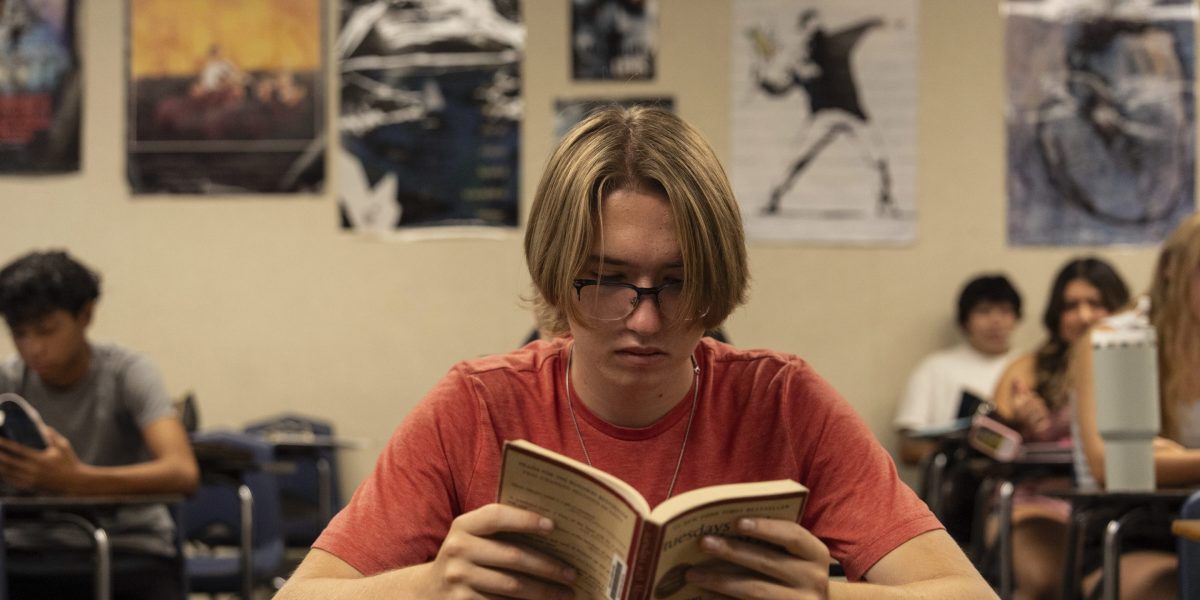

In the 1980s and 1990s, if a high school student was down on their luck, short on time, and looking for an easy way out, cheating took real effort. You had a few different routes. You could beg your smart older sibling to do the work for you or, à la Back to School (1986), you could even hire a professional writer. You could enlist a daring friend to find the answer key to your homework on the teacher’s desk. Or you had the classic excuse: “The dog ate my homework,” and the like.

The advent of the internet made things easier, but not effortless. Sites like CliffsNotes and LitCharts let students skim summaries when they skipped the reading. Homework-help platforms such as GradeSaver or Course Hero offered solutions to common math textbook problems.

The thing that all these strategies had in common was effort: There was a cost to not doing your work. Sometimes cheating took more work than simply doing the assignment yourself.

Today, the process has collapsed into three steps: Log on to ChatGPT or a similar platform, paste the prompt, get the answer.

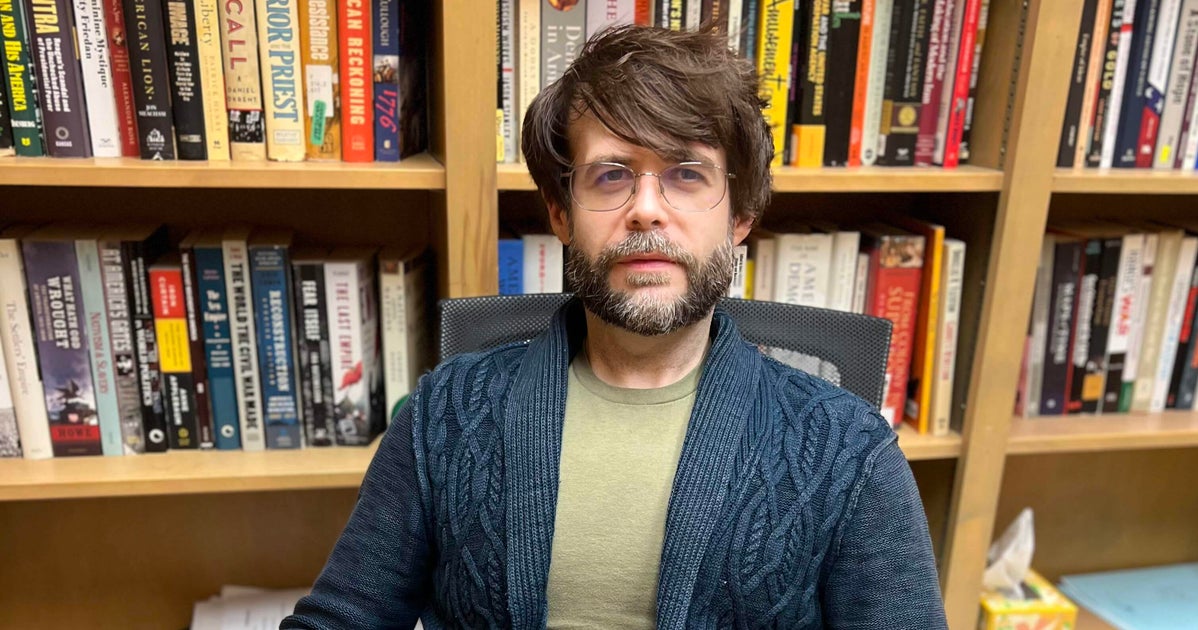

Experts, parents, and educators have spent the past three years worrying that AI made cheating too easy. A massive Brookings report released in January suggests they weren’t worried enough: The deeper problem, the report argues, is that AI is so good at cheating that it’s causing a “great unwiring” of students’ brains. The report concludes that the qualitative nature of AI risks (including cognitive atrophy, “artificial intimacy,” and the erosion of relational trust) currently overshadows the technology’s potential benefits.