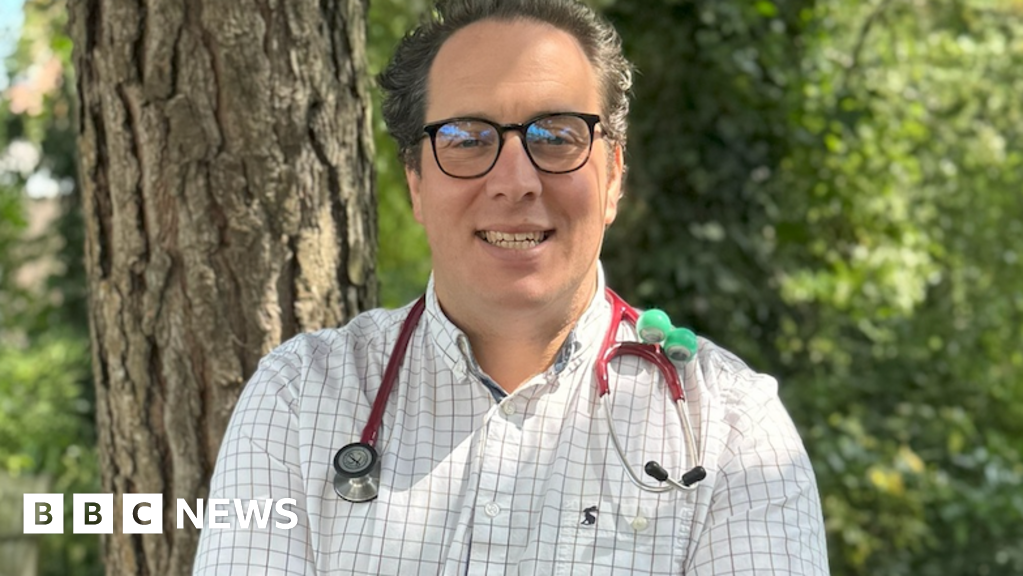

GPs have been warned to look out for ‘inaccurate or fabricated’ information when using AI to write their medical notes.

Family doctors are increasingly using tools that listen to their consultations with patients and automatically add summaries to patients' records.

But the Royal College of GPs has warned that AI can misinterpret the nuances of conversations, with potentially dangerous consequences.

The Medicines and Healthcare products Regulatory Agency (MHRA) also says there is a ‘risk of hallucination which users should be aware of, and manufacturers should actively seek to minimise and mitigate the potential harms of their occurrence’.

The safety watchdog is now urging GPs to report issues with AI scribes through its Yellow Card Scheme, which is typically used to report adverse reactions to medicines.