
OpenAI made a rare about-face Thursday, abruptly discontinuing a feature that allowed ChatGPT users to make their conversations discoverable through Google and other search engines. The decision came within hours of widespread social media criticism and represents a striking example of how quickly privacy concerns can derail even well-intentioned AI experiments.

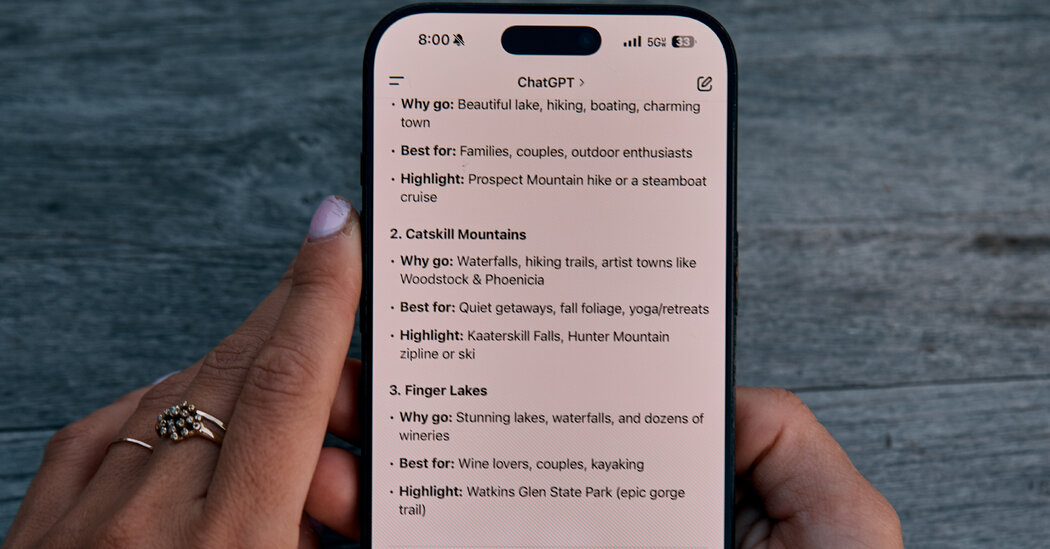

The feature, which OpenAI described as a “short-lived experiment,” required users to actively opt in by sharing a chat and then checking a box to make it searchable. Yet the rapid reversal underscores a fundamental challenge facing AI companies: balancing the potential benefits of shared knowledge with the very real risks of unintended data exposure.


We just removed a feature from @ChatGPTapp that allowed users to make their conversations discoverable by search engines, such as Google. This was a short-lived experiment to help people discover useful conversations. This feature required users to opt-in, first by picking a chat… pic.twitter.com/mGI3lF05Ua