In 2026, the hype for artificial intelligence (AI) agents is louder than ever before. These semi-autonomous programs can “think” and execute well-defined tasks in areas like customer service and software development, typically using language models (LMs). But fields like medical diagnosis and scientific discovery require them to inquire about a vast range of solutions in uncertain environments, which LMs struggle with.

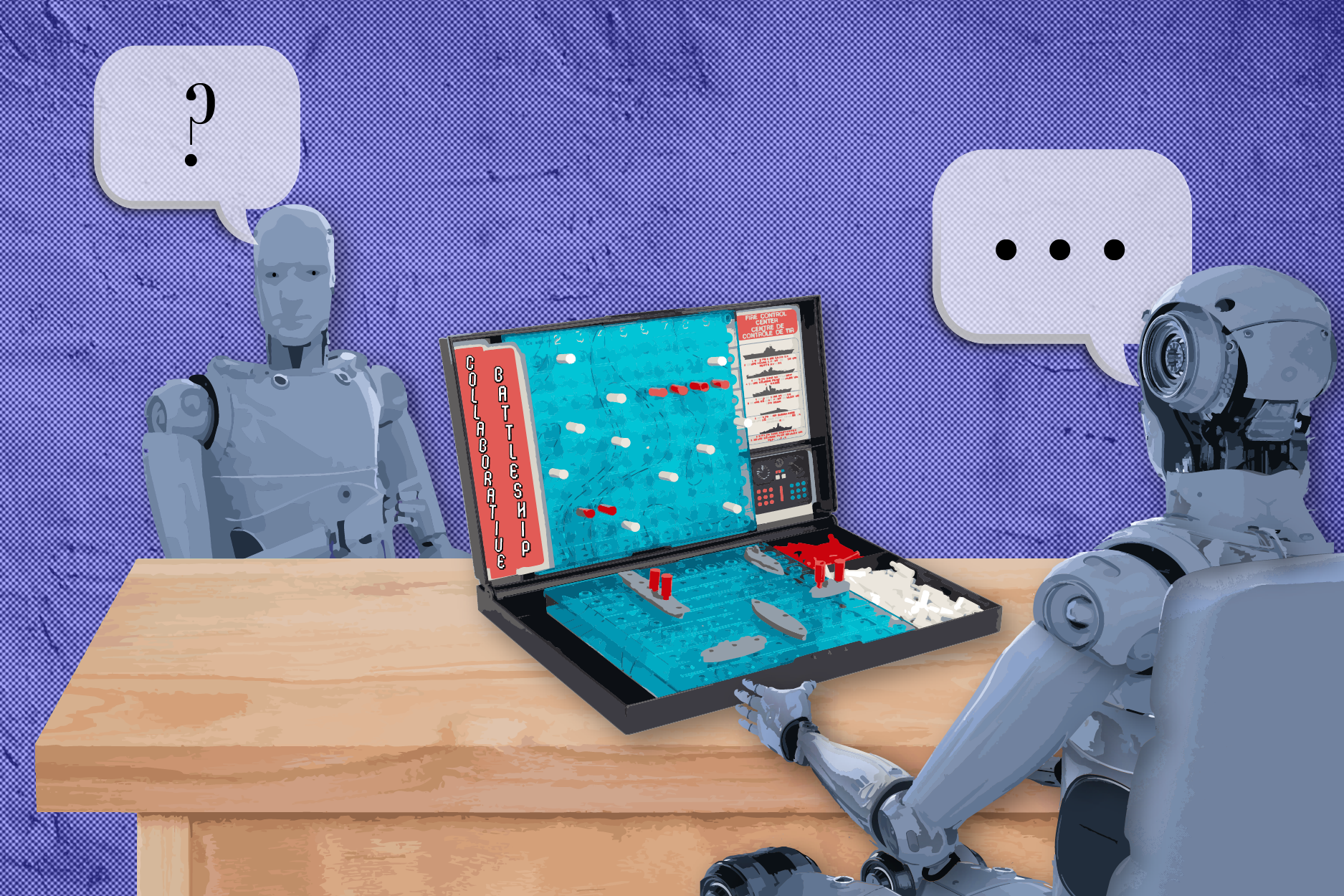

Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and Harvard University’s School of Engineering and Applied Sciences (SEAS) peered deeper into LMs to understand their main issues in high-stakes settings. Their test: Battleship, a guessing game that’s helped cognitive scientists study how humans seek information. CSAIL and SEAS scholars added a twist by reframing the game around asking and answering natural language questions. In their “Collaborative Battleship” game, one participant is a “captain” who inquires about where hidden ships are, while their teammate plays the “spotter” by responding to those questions in real time.

The researchers first had over 40 humans play the game together, collecting their questions and yes-no answers to build the “BattleshipQA” dataset. These results were a helpful point of comparison when the team tested state-of-the-art LMs (like GPT-5) and smaller models (like Llama-4-Scout) on their game. Without training the models beforehand, they found that top LMs can “beat” humans at Battleship — that is, complete the game in fewer turns — but smaller systems are far less rational.