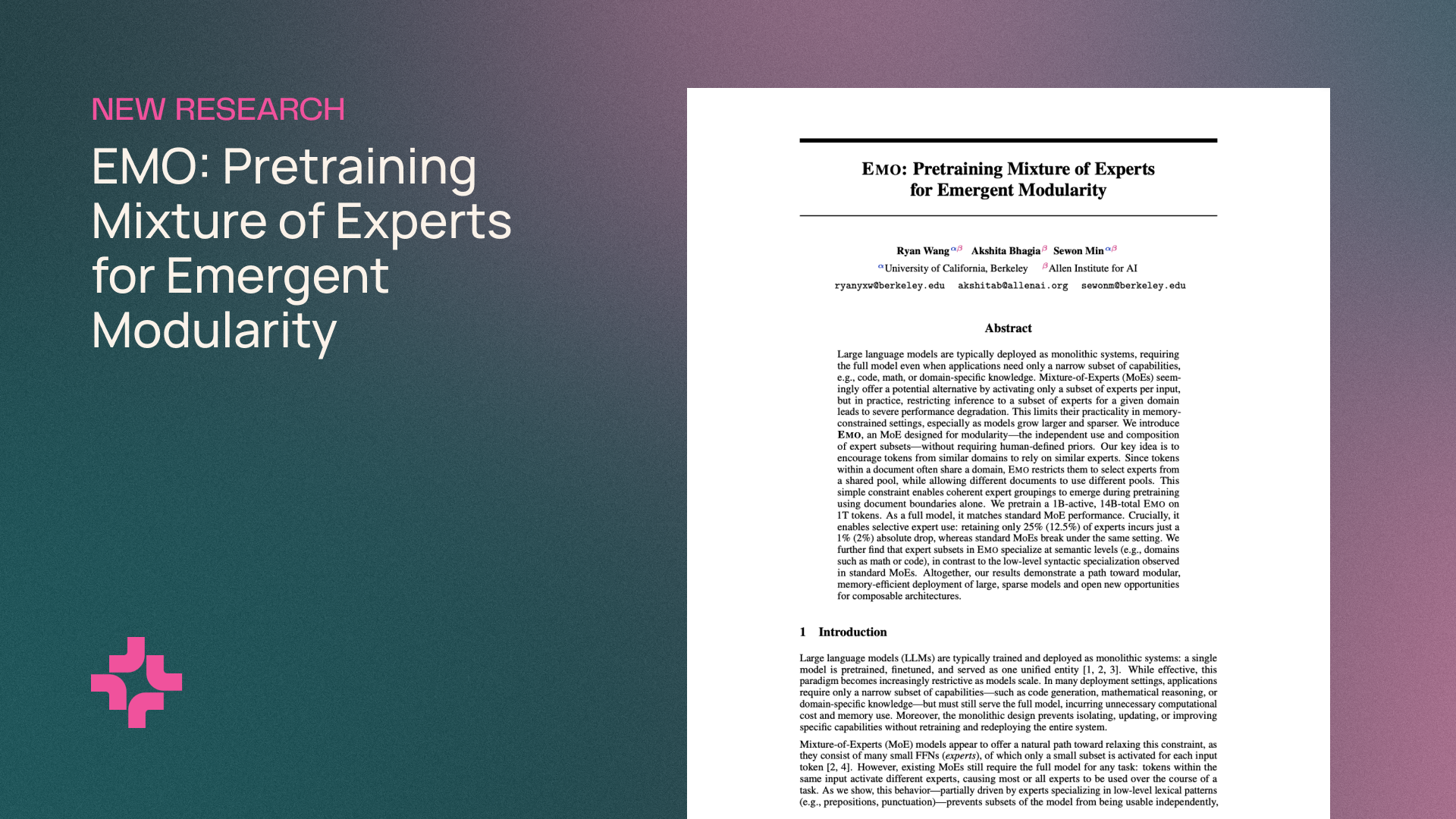

Researchers at the Allen Institute for AI and UC Berkeley have built EMO, a mixture-of-experts model that develops modular structures during pre-training. The model can be stripped down to a small fraction of its experts with barely any drop in performance.

Mixture-of-experts (MoE) architectures are now standard in language models like DeepSeek-V4 or Qwen3.5. They activate only a handful of experts per token, which lets them scale to hundreds of billions of parameters without blowing up compute costs. But the full model still has to sit in memory because different tokens within a task call on different experts. If you only want to do math or code, you can't just load a slice of the model and call it a day.

According to the paper, that's because experts in standard MoEs tend to latch onto shallow language patterns. They respond to things like prepositions, punctuation, or articles instead of higher-level domains like math or code. That makes it impossible to carve out a useful subset.

Document boundaries as a training signal

EMO tackles this with a simple trick. Instead of sorting training data into fixed domains like math or biology ahead of time—the way projects like BTX or Ai2's own FlexOlmo do—the authors use document boundaries. Tokens within a document usually belong to the same domain.