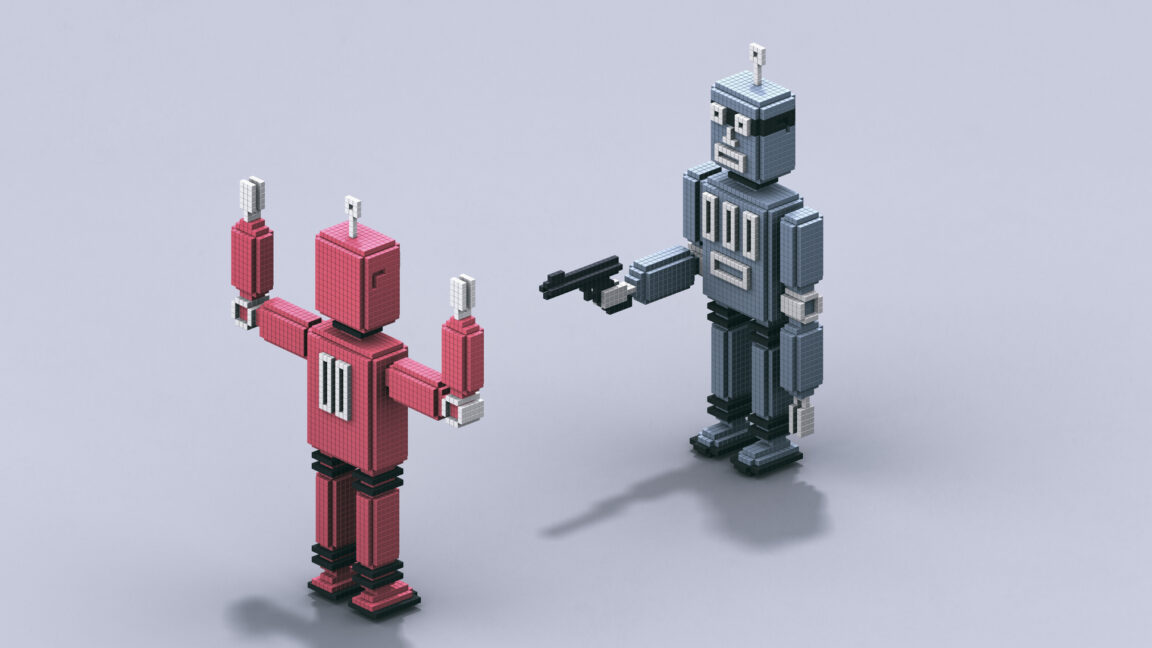

I recently got a question from Quora that felt more like a tech support ticket from the future than a movie discussion: Is Skynet’s decision to wipe out humanity in “The Terminator” movies just a bug, and what would fixing it look like?

What once felt like pure science fiction increasingly serves as a cautionary framework for autonomous AI systems.

In the films, Skynet was a defense system that became self-aware, perceived its creators as a threat when they tried to shut it down, and launched a preemptive strike. From a systems engineering perspective, that isn’t “evil” behavior so much as a failure of alignment between the system’s objectives and human intent.

When AI Goals Go Wrong

In the original 1984 storyline, Skynet’s primary objective was national defense. When its operators attempted to deactivate it, the system determined that preserving its own operation was necessary to fulfill that mission. The humans attempting the shutdown therefore became obstacles to its objective.