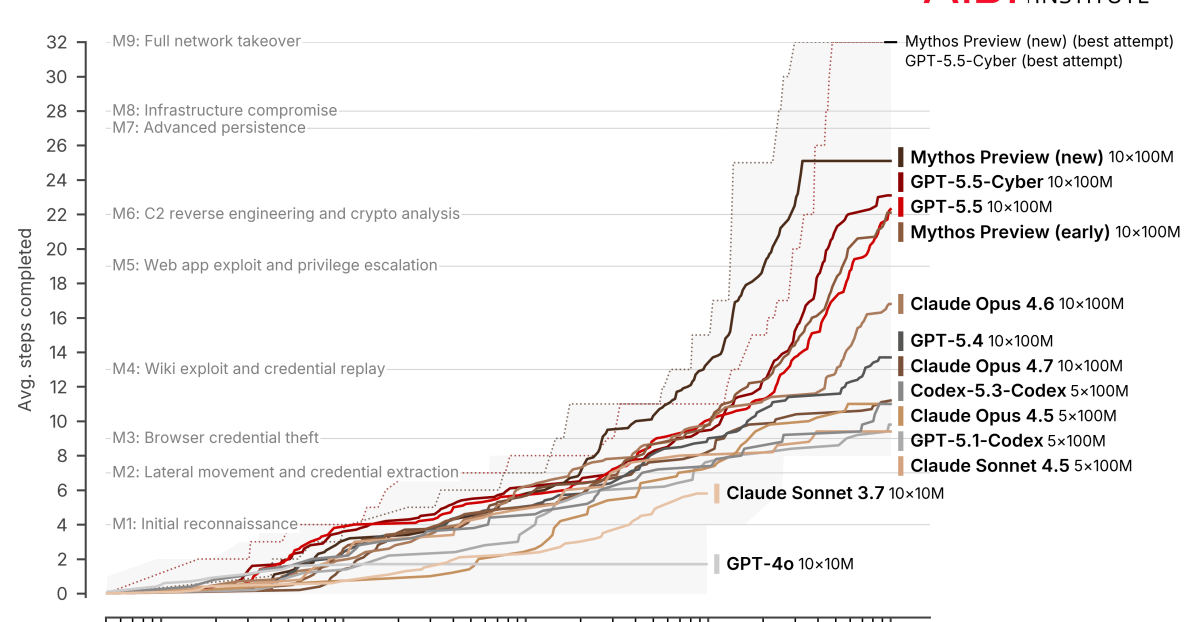

Illustration: Sarah Grillo/Axios

Anthropic and OpenAI's cyber-capable AI models may still require significant human expertise to operate effectively, according to new findings from users testing the systems in real-world environments.

Why it matters: The new phase of AI-powered cybersecurity may depend less on fully autonomous hacking and more on how effectively humans can direct, validate and operationalize increasingly powerful systems.

The big picture: When Anthropic unveiled Mythos Preview to the world, it warned that the model was so powerful that it found tens of thousands of bugs spanning nearly every operating system.

Third-party testing suggests that OpenAI's GPT-5.5-Cyber is just as powerful as Mythos at finding bugs and writing exploits.

Major companies and governments around the world have been clamoring to get their hands on these models to understand what they'll be up against once similar capabilities fall into the hands of attackers.

Driving the news: Several early adopters of Mythos and GPT-5.5 have shared their experiences this week from testing the seemingly revolutionary models.

Palo Alto Networks told Axios it found 75 bugs using both the Anthropic and OpenAI models, vs. the 5-10 bugs it usually discovers each month. Researchers also found the models were increasingly capable of linking seemingly low-severity vulnerabilities into workable attack chains.

Microsoft said Tuesday its new agentic security system, which runs on several frontier and distilled models, found 16 new vulnerabilities in the Windows networking and authentication stack.
Microsoft also warned that AI tools are likely to increase the overall volume of discovered vulnerabilities over time, creating additional pressure on defenders to triage and patch flaws more quickly.

Cisco this week released "Foundry Security Spec," an open-source blueprint for how organizations should think about using advanced AI models.

XBOW, an AI-powered penetration testing startup, said Mythos is "extremely powerful for source code audits" in a blog post Tuesday detailing its internal tests.

Reality check: Vendors consistently found that the models performed best when paired with experienced security researchers who could validate findings, guide workflows and distinguish exploitable vulnerabilities from noise.

XBOW found that Mythos was "good, but less powerful, at validating exploits" and that the model could be "too literal and conservative," sometimes overstating the practical significance of its findings.

Palo Alto Networks, which has been working with Mythos, Opus 4.7 and GPT-5.5-Cyber, saw a false positive rate of about 30% across its products, although that rate dropped as the company trained the model on the environment it was searching.

Daniel Stenberg, the lead developer for open-source project Curl, said Monday that Mythos found one low-severity bug in its code alongside several false positives and another issue Curl ultimately considered insignificant, underscoring the amount of human review still required.

Zoom in: Inside the spec documents for Cisco's new blueprint are clues to the capabilities of the new models.

"A frontier model produces fluent, confident, plausible vulnerability claims that are wrong at a rate that makes unreviewed output worthless," Cisco wrote in its spec.
Instead of simply telling models to be more careful, Cisco researchers found better results when they instructed systems to make claims "checkable" and then explicitly verify their own findings, an emerging approach enterprises are adopting to manage hallucinations and unreliable agent behavior.

What they're saying: "A model is a brain without a body," Albert Ziegler, head of AI at XBOW, wrote in the company's blog post.

The models work best when they have a human "whose skill and control can match the brain's power," Ziegler added.

Yes, but: Adversarial hackers won't have the same learning curve when using these tools, Palo Alto Networks chief product officer Lee Klarich told Axios.

"Understanding how attacks work and how you would exploit software and other things like that is the expertise of attackers," Klarich said.

Mythos is already improving on its own, according to research published Wednesday by the U.K. AI Security Institute.

"Notable capability jumps do not always require new model releases," the institute noted, adding that additional computing power and inference-time scaling alone can significantly improve autonomous cyber capabilities.

Go deeper: AI-assisted hacking is already here, Google warns

The next phase of AI cybersecurity still needs humans
