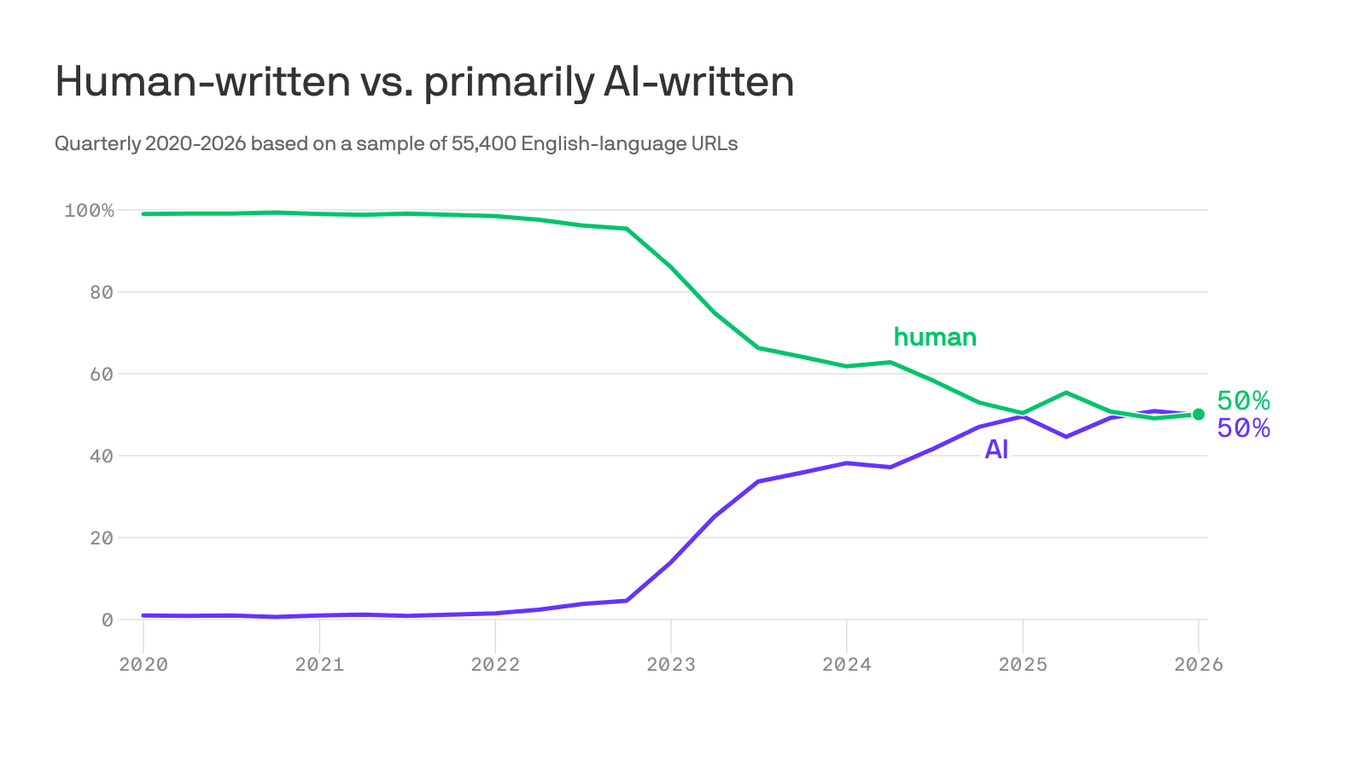

By Charles Pensig, Founding Partner, Stratus Data.

For the past few years, AI’s stratospheric growth has been driven by one main ingredient: more data. Feed models ever-larger amounts of text, images and audio, and they get smarter.

But that approach is hitting a ceiling. Based on analysis of publicly available LLM data-consumption projections, these models have already been trained on roughly 20% of the world’s publicly available data.

So, what happens to AI when we run out of data? New data is being created every day, but not fast enough to keep pace with AI. When you eliminate “junk” data or regurgitation, there simply isn’t enough new, high-quality information left (or being created fast enough) to fuel exponential growth. Add to that the growing number of web sources restricting the use of their data, and the industry has a real problem. Here’s another consideration: Training bigger models also demands massive amounts of computing power and electricity. Data centers are ballooning in size, and we're running out of energy capacity in the U.S.

What we’re facing is "AI collapse": AI is trained on human data > AI produces information on the web > AI then starts training on its own output > AI becomes like a Ponzi scheme, ready to collapse under its own strain. The answer is to stop chasing LLMs and start rethinking how we’re building AI and what we really want to get out of it. It isn’t a lost cause, and this isn’t the first time we’ve faced a tech dilemma like this. In the '90s and early 2000s, there was a similar race to increase computer clock speed (megahertz to gigahertz).
But once we reached physical limitations (the fastest clock speeds for computers have been around three to four GHz for the past 15 years), chipmakers found other ways to improve performance, like multi-core processors.

So instead of brute forcing our way into bigger models by throwing more data and power at the algorithm, we should start focusing on smaller, more curated and focused models.

China may have the answer. Recently, DeepSeek (often described as a competitor to OpenAI) made waves by releasing high-performing AI models that appear to achieve strong results at significantly lower cost. While not matching leading systems across every benchmark, their performance in some areas suggests that more efficient architectures and training approaches can reduce compute, hardware requirements and potentially energy use.

How did DeepSeek do what others couldn’t? I would posit that DeepSeek was more resource constrained, so it was forced to be creative. As Eric Schmidt, former CEO of Google, once said: "Creativity loves constraints."

The next leap forward won’t come from scraping the internet harder, racing to create more data or building ever-larger chatbots. It will come from rethinking what these systems are designed to do and how they do it, using architecture and training changes. We also need to stop chasing general-purpose “superintelligence” and start thinking smartly about how we use AI in business and in our daily lives. Right now, GenAI is like a very advanced parrot. It’s great at predicting the next word in a sentence, but it lacks nuance and doesn’t understand context the way a human does. That’s why ChatGPT and other GenAI models sometimes sound confident but provide incorrect (or entirely made up) answers. Hallucinations occur when models reach the “boundaries” of their training data. So GenAI isn’t always the right choice for what we need.

There’s a quieter form of AI called “analytical AI” that makes much more sense for businesses, for example.
This includes AI like predictive analytics to forecast things like customer demand or product input prices, and optimization engines that help businesses figure out the best way to use their resources (like inventory optimization based on customer demand cycles). Analytical AI has been around for much longer and is much more mature, but many organizations aren’t leveraging it fully, or at all. Sometimes a valuable, practical solution is right under your nose, but it’s not the new, shiny thing everyone is talking about.

The future of AI is about using the right data smartly. Not all data is created equal, and part of the challenge is figuring out what data will actually help you drive the growth you want. When it comes to AI, more isn’t always better.

Forbes Technology Council is an invitation-only community for world-class CIOs, CTOs and technology executives.

What Happens When The Industry Runs Out Of Data?
