By Ron Schmelzer, Contributor

For the past three years, the AI industry has been locked in a race to build and train models. That meant bigger models trained on bigger clusters, demanding ever greater amounts of data, compute and budget. The race has been primarily about claiming a stake in the biggest IT movement of the past few decades: a new gold rush.

However, as models converge in capability and AI platform vendors look to restrict or manage access to their most powerful, and most expensive, models, these companies are shifting from grubstaking market claims to the more business-oriented, brass-tacks work of billing and metering access to those models. AI companies are starting to look more like traditional cloud computing providers than cutting-edge AI research labs.

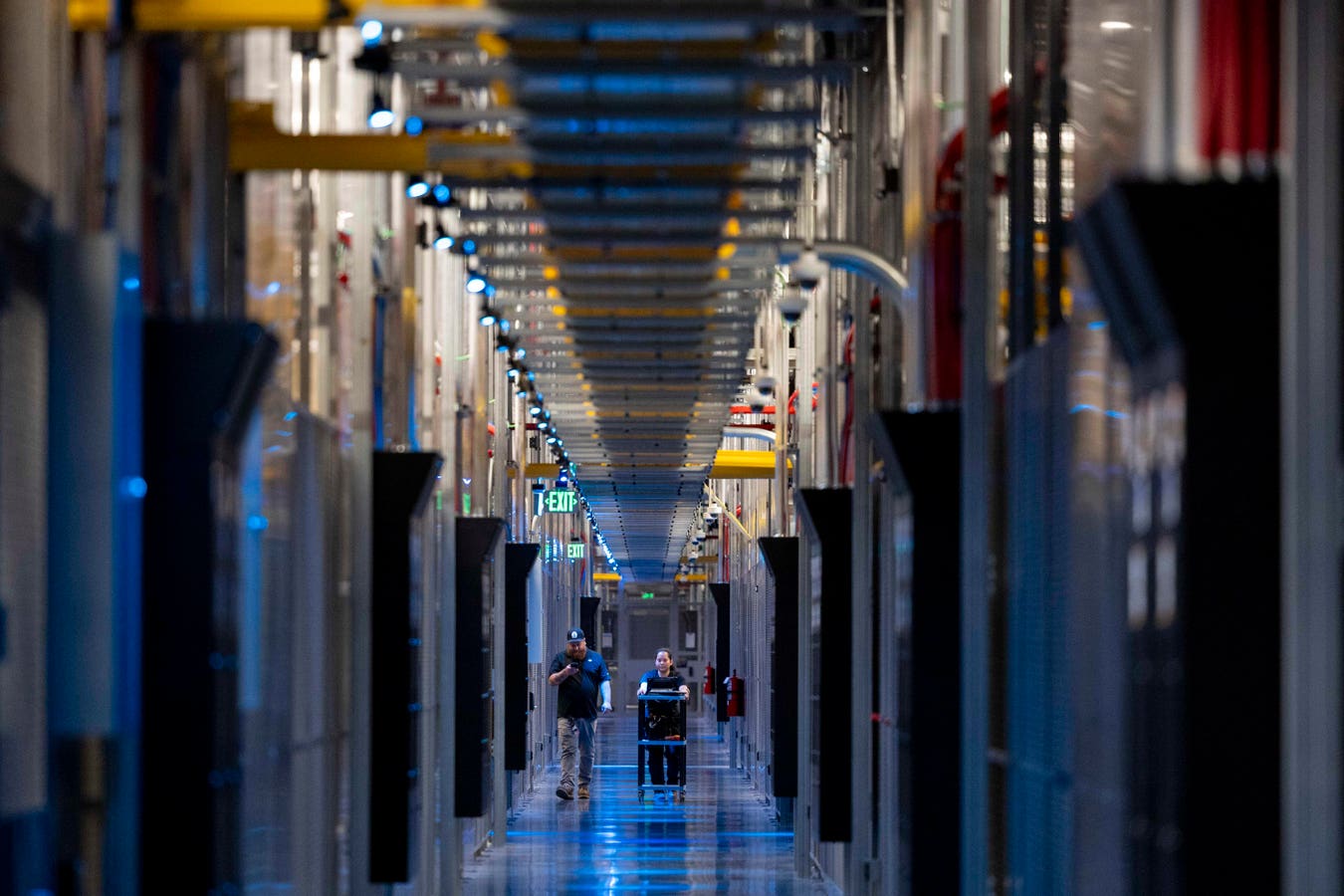

The next AI battleground is inference: running trained models in real products, for real users, millions or billions of times a day. The AI race is shifting from raw power and capability toward affordability, privacy and energy usage. Recent market reporting indicates that investors are tracking a move from training-heavy demand toward inference, where autonomous agents, enterprise copilots and always-on AI services drive constant AI consumption.