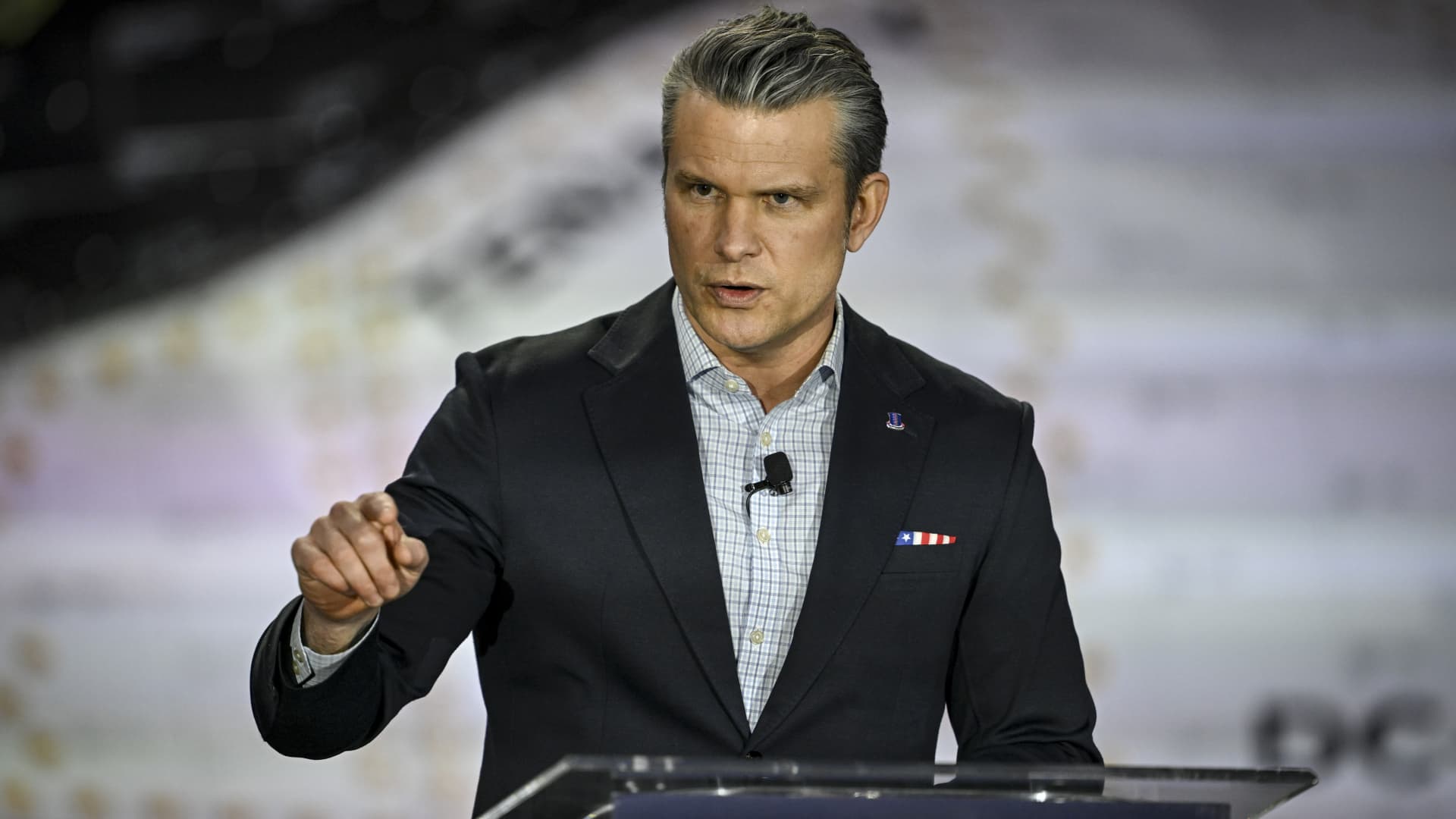

"War is too serious a matter to entrust to the military." This famous quote, attributed to Georges Clemenceau, could today be paraphrased as: War is far too sensitive an issue to be managed by artificial intelligence (AI). The debate has been fueled as much by current geopolitical tensions as by the standoff that broke out in late February in the United States between Secretary of Defense Pete Hegseth and Dario Amodei, the head of Anthropic, one of the world's leading AI firms. The fundamental question is: Who ultimately decides how AI is used, by what principles, within what institutional framework, and with what checks and balances?

These questions have taken on even greater urgency now that AI is routinely used in conflicts. Claude, the suite of large language models developed by Anthropic, was reportedly deployed in the capture of Venezuelan President Nicolas Maduro on January 3. It was also used by the US military to prepare the operation against Iran launched on February 28, including the elimination of the Islamic Republic's Supreme Leader Ali Khamenei.

The tensions between the US administration and Anthropic stemmed from the company's refusal to lift certain restrictions on the military use of Claude: no autonomous lethal weapons without human oversight and no participation in mass surveillance systems. Amodei has emphasized these red lines, stressing the need to regulate systems that remain, at this stage, probabilistic, fallible and opaque. If such systems were to become sovereign arbiters of decisions involving human lives, the very foundations of the rule of law would be threatened.