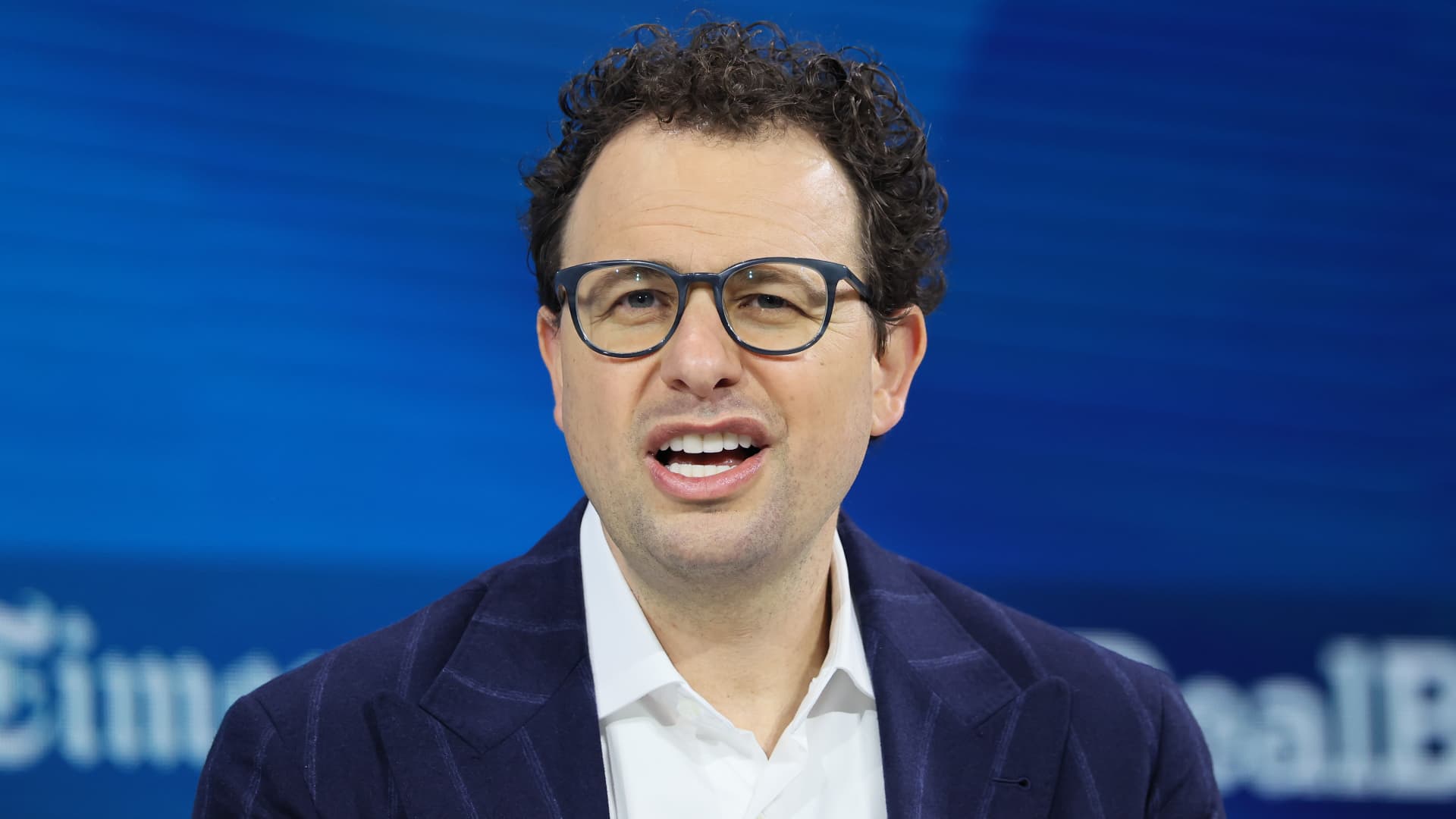

WASHINGTON: Anthropic CEO Dario Amodei said Thursday the artificial intelligence company “cannot in good conscience accede” to the Pentagon’s demands to allow wider use of its technology.

The company said in a statement that it is not walking away from negotiations but that new contract language received from the Defense Department “made virtually no progress on preventing Claude’s use for mass surveillance of Americans or in fully autonomous weapons.”

The Pentagon’s top spokesman has reiterated that the military wants to use Anthropic’s artificial intelligence technology in legal ways and will not let the company dictate any limits. Anthropic faces a Friday deadline to agree to the Pentagon’s demands.

The spokesman, Sean Parnell, said Thursday on social media that the Pentagon “has no interest in using AI to conduct mass surveillance of Americans (which is illegal) nor do we want to use AI to develop autonomous weapons that operate without human involvement.”

Anthropic’s policies prevent its models, such as its chatbot Claude, from being used for those purposes. The company is the last of its peers to not supply its technology to a new US military internal network; the Pentagon also has contracts with Google, OpenAI and Elon Musk’s xAI.