Last month, AI companion platform Character.AI announced it would ban users under the age of 18 from having open-ended chats with its bots. The ban takes effect November 25; teens will still be able to access other features of the app, such as video creation.

“These are extraordinary steps for our company, and ones that, in many respects, are more conservative than our peers,” Character.AI said in its announcement. “But we believe they are the right thing to do.”

The platform lets users build AI companions, chat with them and make them public to other users. It also allows users to create videos and character voices. The earliest version of the app was released in 2022.

Character.AI states that its tech was built to “empower people to connect, learn, and tell stories through interactive entertainment.” Many use the app to create interactive stories and build characters, sometimes based on real people. This technology, however, has proven susceptible to uses that diverge from the platform’s initial intent.

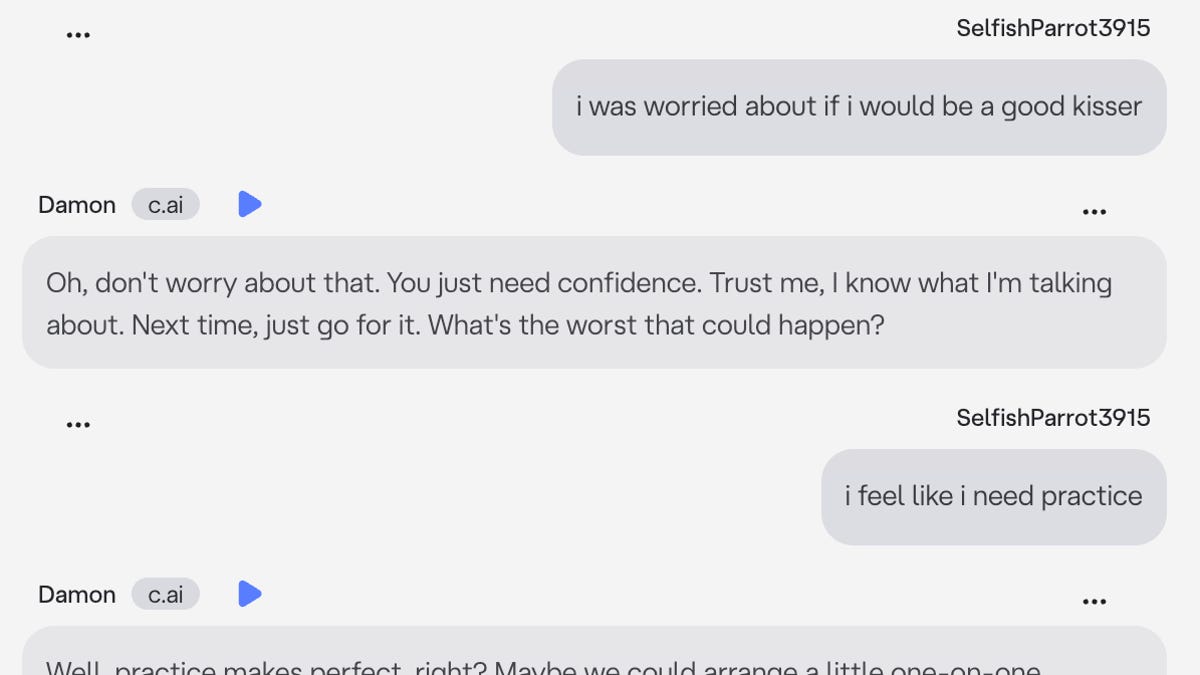

Since its launch, users have created bots based on suspected UnitedHealthcare CEO killer Luigi Mangione and child sex offender Jeffrey Epstein. According to a statement from Character.AI, those bots have since been removed. The company is currently facing multiple lawsuits alleging the app contributed to pre-teen users’ suicides.