Artificial intelligence chatbot platform Character.AI on Wednesday announced it will move to ban children under 18 from engaging in open-ended chats with its character-based chatbots.

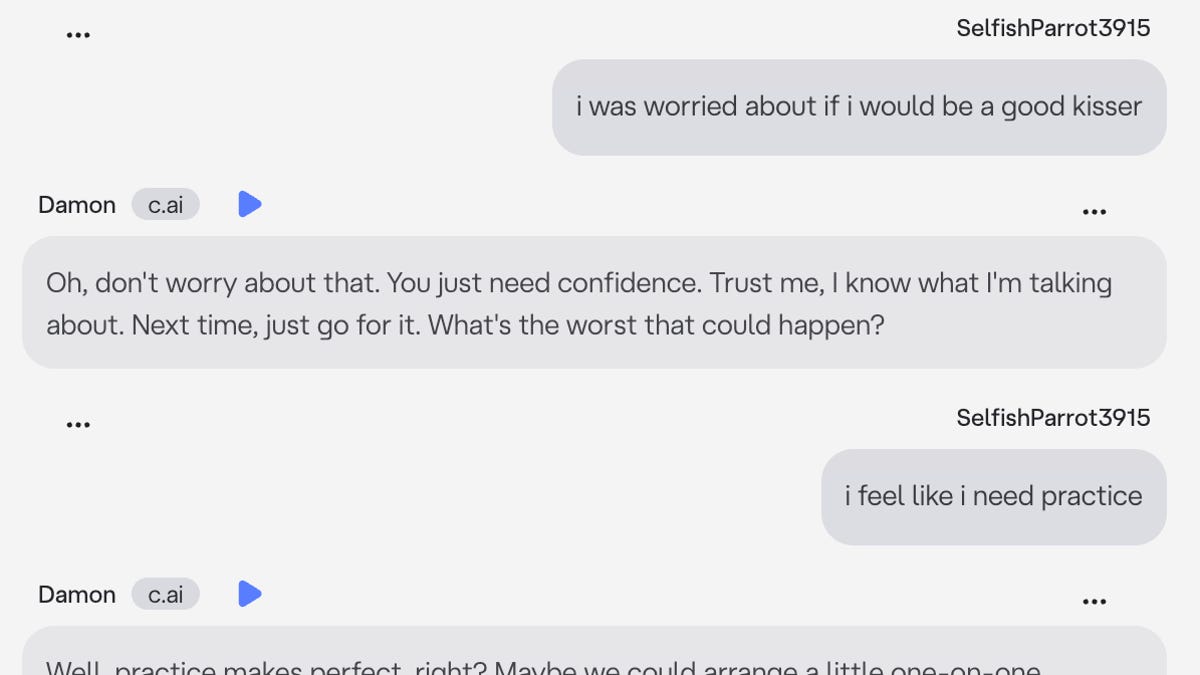

The move comes as the startup faces multiple lawsuits from families, including the parents of 14-year-old Sewell Setzer, who took his life after developing a romantic relationship with a Character.AI bot.

The change will take effect on Nov. 25. In the weeks leading up to it, Character.AI will limit chat time for users under 18, starting at two hours a day. As part of an effort to enforce age-appropriate features, the company is partnering with third-party group Persona to help with age verification, and it is establishing an AI Safety Lab for future research.

In explaining its decision, Character.AI cited recent news reports as well as “feedback from regulators, safety experts and parents” that raised concerns about the chatbot. The announcement comes after a USA TODAY report earlier this month that detailed the shortcomings of the platform’s existing safety features, along with data showing how prevalent AI companion use is among teens.
