TL;DR: Soderbergh used Meta’s AI for 10% of his Cannes Lennon documentary. Critics slammed it. He says the real problem is everyone else not disclosing.

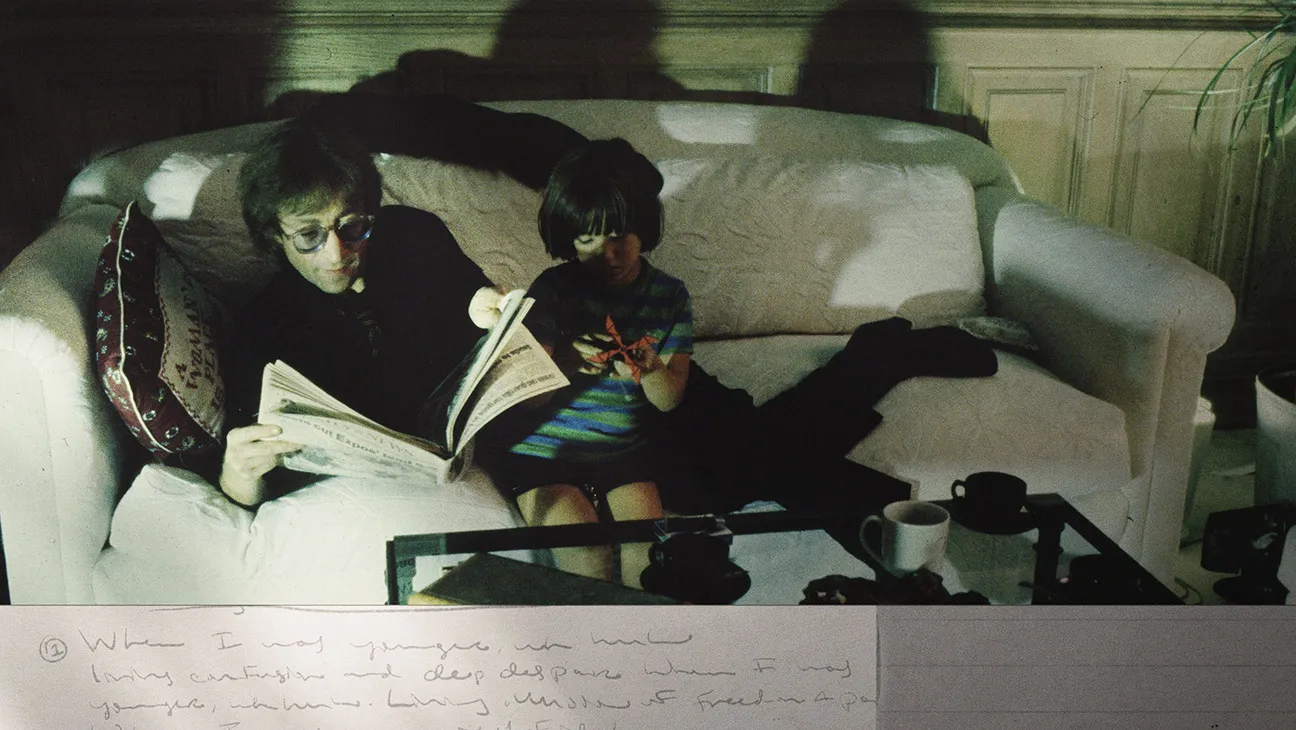

Steven Soderbergh’s “John Lennon: The Last Interview” premiered on Saturday at the 79th Cannes Film Festival. The 97-minute documentary is built around a never-before-released two-hour-and-45-minute radio interview that Lennon and Yoko Ono gave to a crew from San Francisco radio station KFRC at their home in New York’s Dakota Apartments on December 8, 1980, hours before Lennon was shot and killed. At Cannes, it is being discussed less for what Lennon said than for how Soderbergh chose to visualise it.

Approximately 10% of the film’s visuals were generated using Meta’s AI software. Soderbergh disclosed the partnership earlier this year and has been characteristically direct about the backlash that followed. “I knew what was coming,” he told the Associated Press in Cannes on Saturday. “You don’t say yes to Meta offering you these tools and offering to finish the film and not know you’re going to come in for some heat. That was part of the deal.”

The AI-generated sections, which critics at Cannes overwhelmingly panned, are abstract and surreal: circles of light, a black rose morphing into a choreographic pattern, paint colours mixing in split screen alongside lovers caressing. There are no deepfakes of Lennon. The sequences were created for passages where the conversation turns philosophical and no archival footage exists to illustrate the ideas being discussed. Soderbergh assembled more than 1,000 photographs and video clips from the archive to cover the rest of the film, editing them to the rhythm of the conversation in what reviewers have described as a hyperkinetic photo album.