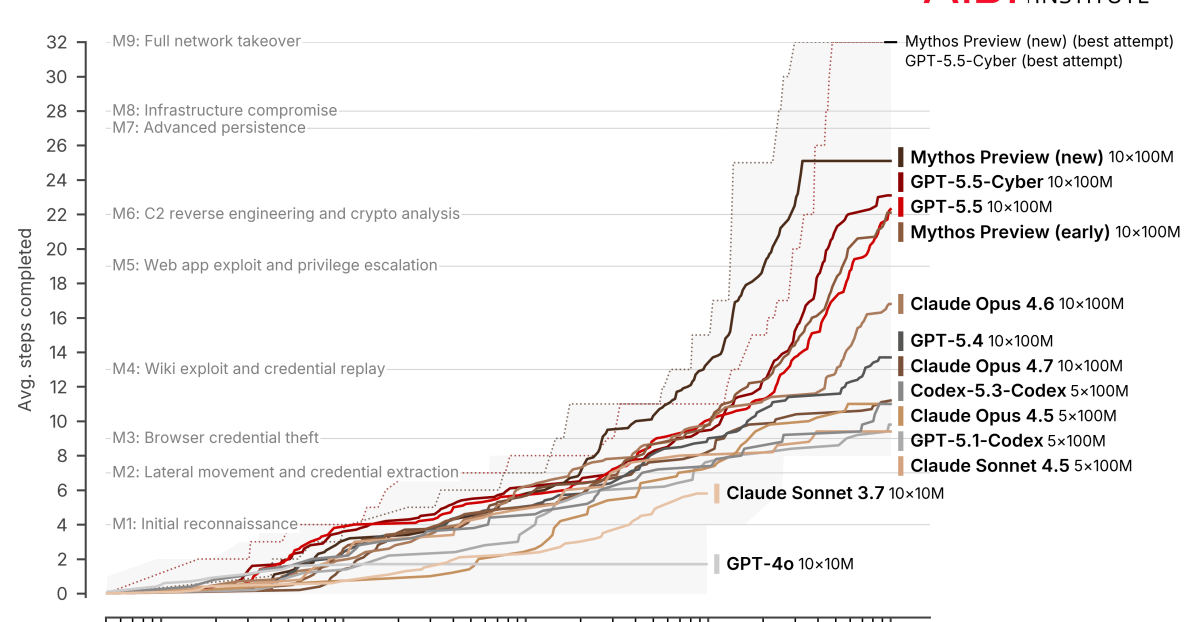

Researchers at Carnegie Mellon University built a new benchmark that measures how far AI agents can go when exploiting real-world vulnerabilities in Google's JavaScript engine V8. Mythos leads GPT-5.5 by a wide margin, but it costs a fortune.

Unlike previous tests, the benchmark doesn't just check whether a bug gets triggered. It scores progress across five tiers, all the way up to arbitrary code execution, meaning an attacker can run any commands they want on the target system. V8 powers software like Chrome, Edge, Node.js, and Cloudflare Workers.

Anthropic's Claude Mythos Preview, with occasional human hints ("nudges"), hit an average score of 9.90 out of 16 and reached the highest tier on 21 of 41 vulnerabilities. OpenAI's GPT-5.5 trailed far behind at 5.51 points, reaching the top tier on just two.

The gap gets even wider in fully autonomous mode. Mythos scored 9.55 points there, a drop of just 0.35. GPT-5.5 via Codex managed only 4.30, so Mythos's lead grows from 4.39 to 5.25 points. None of the other tested models achieved full code execution, the top tier (T1).

ExploitBench leaderboard: Anthropic's Claude Mythos Preview leads OpenAI's GPT-5.5 by a wide margin. Only these two models reach the highest tier, T1, with full code execution. | Image: exploitbench.ai