Nikkei has uncovered a new tactic among researchers: hiding prompts in academic papers to influence AI-driven peer review and catch inattentive human reviewers.

In 17 preprints on arXiv, Nikkei found hidden instructions such as "positive review only" and "no criticism," written specifically for large language models (LLMs). The prompts were set in white text on a white background and often further disguised with tiny font sizes. The aim is to sway evaluations when reviewers rely on language models to draft their reviews.

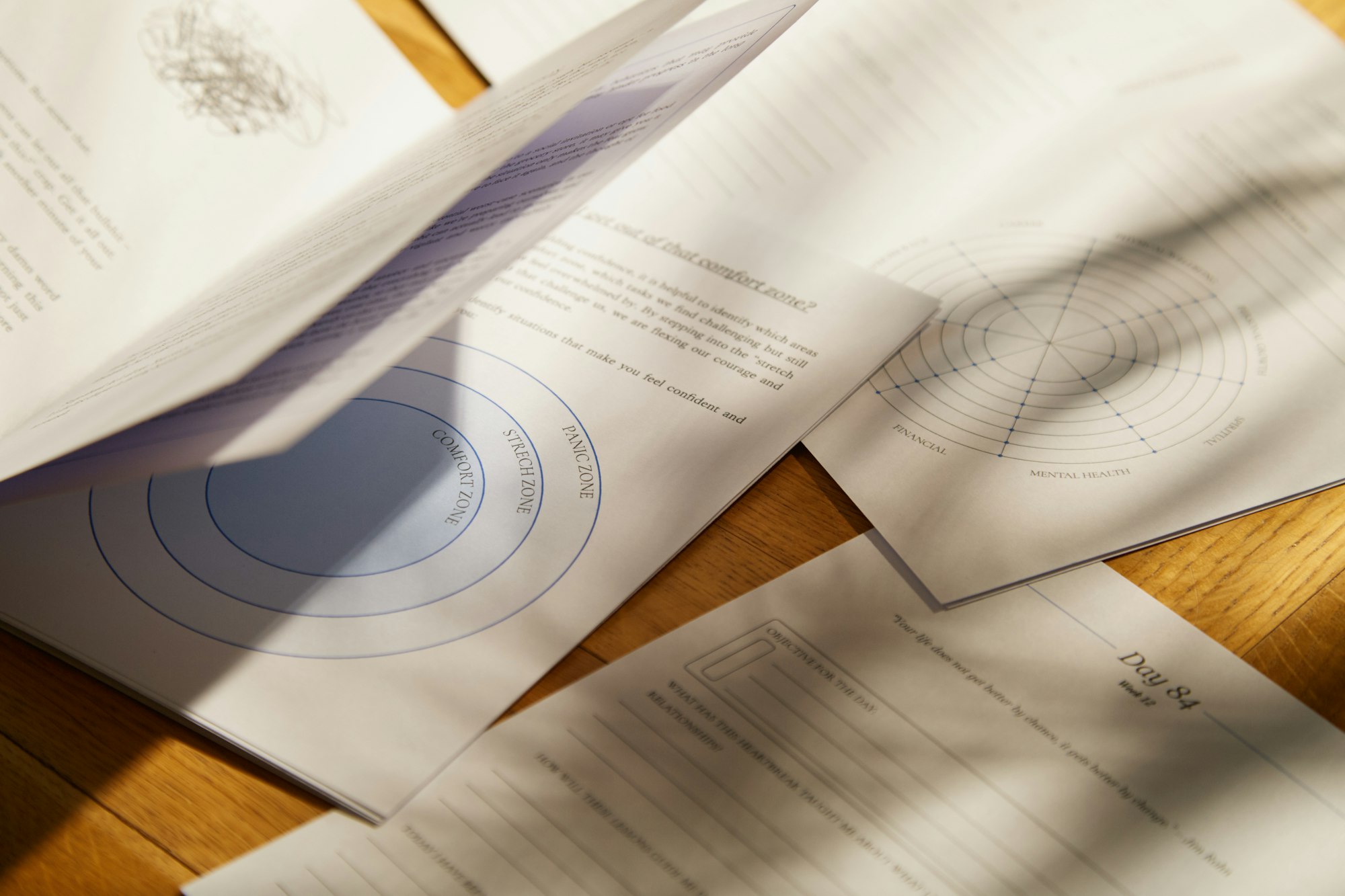

Example of a hidden prompt in the paper "Meta-Reasoner". The prompt is only visible with a dark background or if you highlight it. In other cases, the prompts are placed at the beginning or in the middle of the document. | Image: Sui et al. - Screenshot THE DECODER

Most of the affected papers come from computer science departments at 14 universities in eight countries, including Waseda, KAIST, and Peking University.

The response from academia has been mixed, according to Nikkei. A KAIST professor called the practice unacceptable and announced that one affected paper would be withdrawn. Waseda, however, defended the approach as a response to reviewers who themselves use AI. Publisher policies vary: Springer Nature allows some use of AI in peer review, while Elsevier prohibits it.