by Kelly Knight

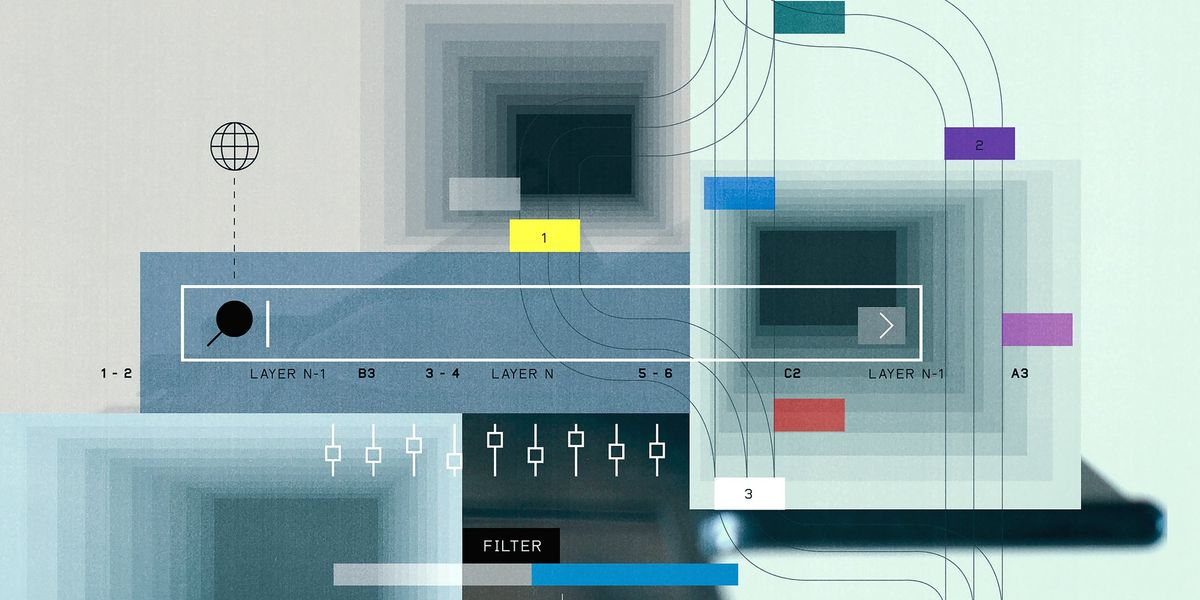

As enterprises push AI beyond the pilot stage, the cost and complexity of running inference at scale are forcing a fundamental rethink of how infrastructure is designed, governed and sourced. That shift puts horizontal cloud, one shared foundation for running workloads across the enterprise, at the center of AI strategy.

The open hybrid cloud model is emerging as a practical answer to a market that has become dangerously dependent on a small number of frontier model providers, including Anthropic PBC and OpenAI Group PBC. The journey from frontier model convenience to self-managed, cost-efficient inference is now a core question for enterprise AI teams, according to Stephen Watt (pictured), vice president and distinguished engineer, Office of the CTO, at Red Hat Inc.

“You’d be crazy today not to start on a frontier model provider, like OpenAI or Anthropic, but then after a while, when you hit a certain scale — like in token economics — you’d be crazy to stay on that,” Watt said. “That’s the dilemma: When you want to leave, what are your options, and how do you navigate [that]?”

Watt spoke with theCUBE’s Rob Strechay and Rebecca Knight at Red Hat Summit 2026, during an exclusive broadcast on theCUBE, SiliconANGLE Media’s livestreaming studio. They discussed how inference routing, agentic AI governance and horizontal cloud architecture are reshaping enterprise AI deployments. (* Disclosure below.)