I actually don’t want to make my work easier. We should demand authenticity if we care about the sort of society that comes out the other end of this so-called revolution.

I never thought I’d have to write these words but here I am: my name is Peter and I am human.

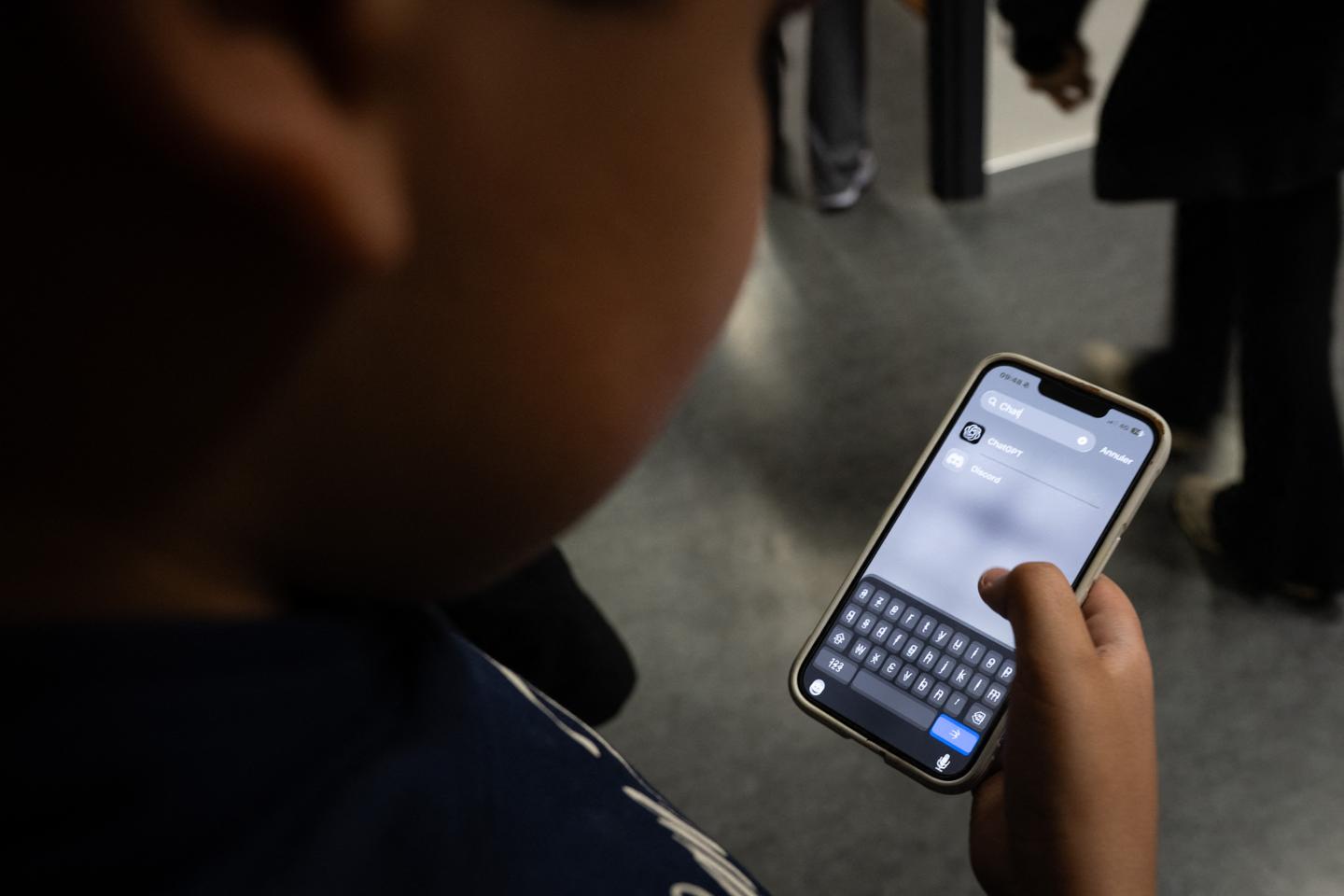

What seems like a self-evident proclamation needs to be made now because the misuse of AI is transforming considered op-eds such as this into “slop-inion” that is infecting the editorial pages of reputable media outlets.

In recent weeks Crikey has had to remove a series on leadership, while the features editor at Capital Brief took to LinkedIn bemoaning the fact that 80-90% of all submissions appear to be AI-generated.

Of course, plagiarism has always been a journalistic sin, and if one holds out the work of ChatGPT as one’s own, then that is clearly crossing a fundamental ethical line. But it’s not enough to run an AI check over the final copy for telltale bot-speak: the TEDx-style false negatives, the rhetorical questions, the inspirational pivot, the em dash. There are lots of grey areas in between. What if AI does the core research? Suggests the angle? Spots a logical inconsistency? When does the output stop being human?