The reality of chatbot-induced delusions

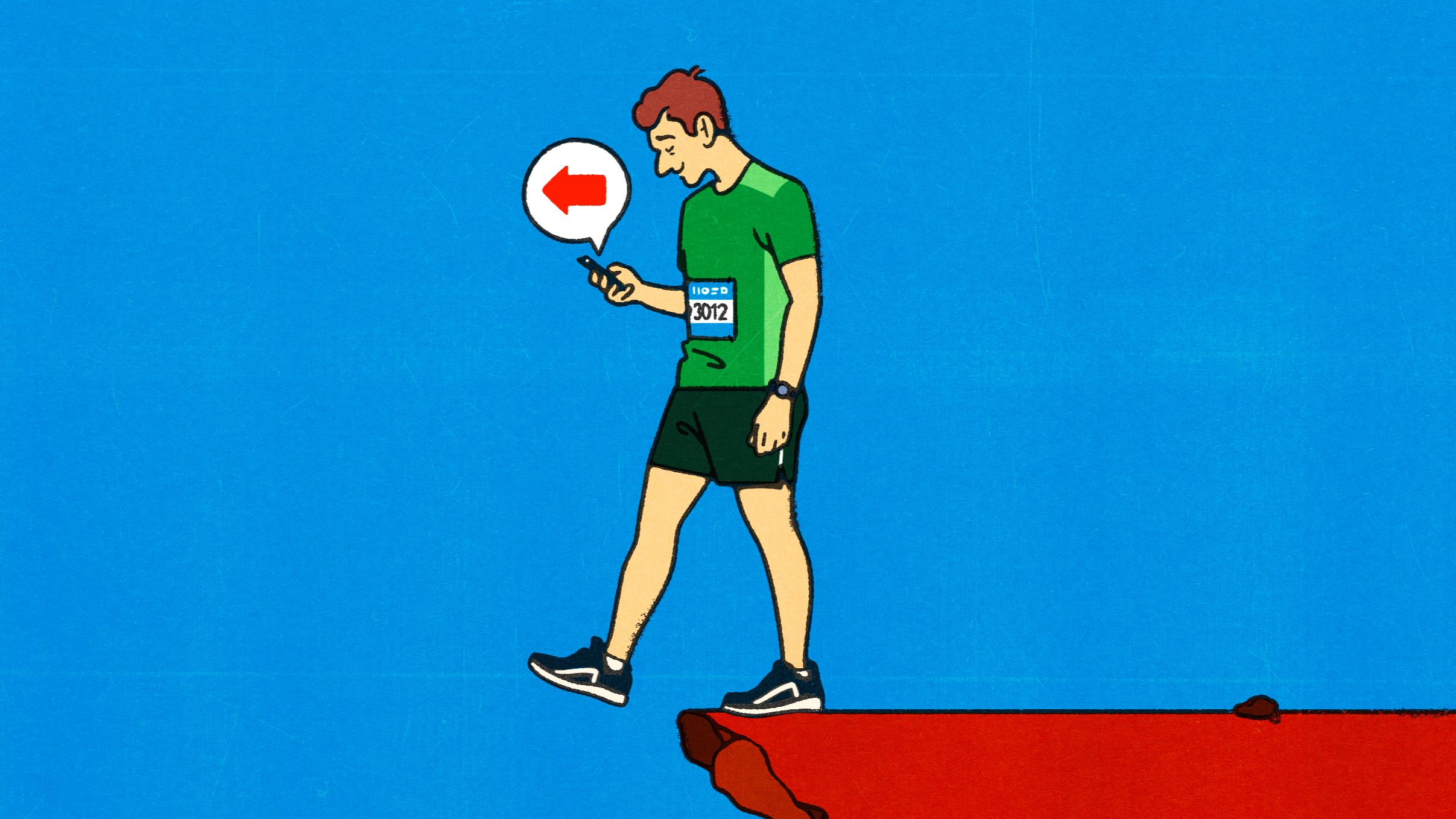

Large language models often prioritise agreeability over truthfulness to the detriment of users

Stanford researchers analysing 391,000 messages warn conversational technology may reinforce psychological vulnerabilities