Feature for supervised accounts rolls out as Meta Platforms faces US trials over alleged harms to children

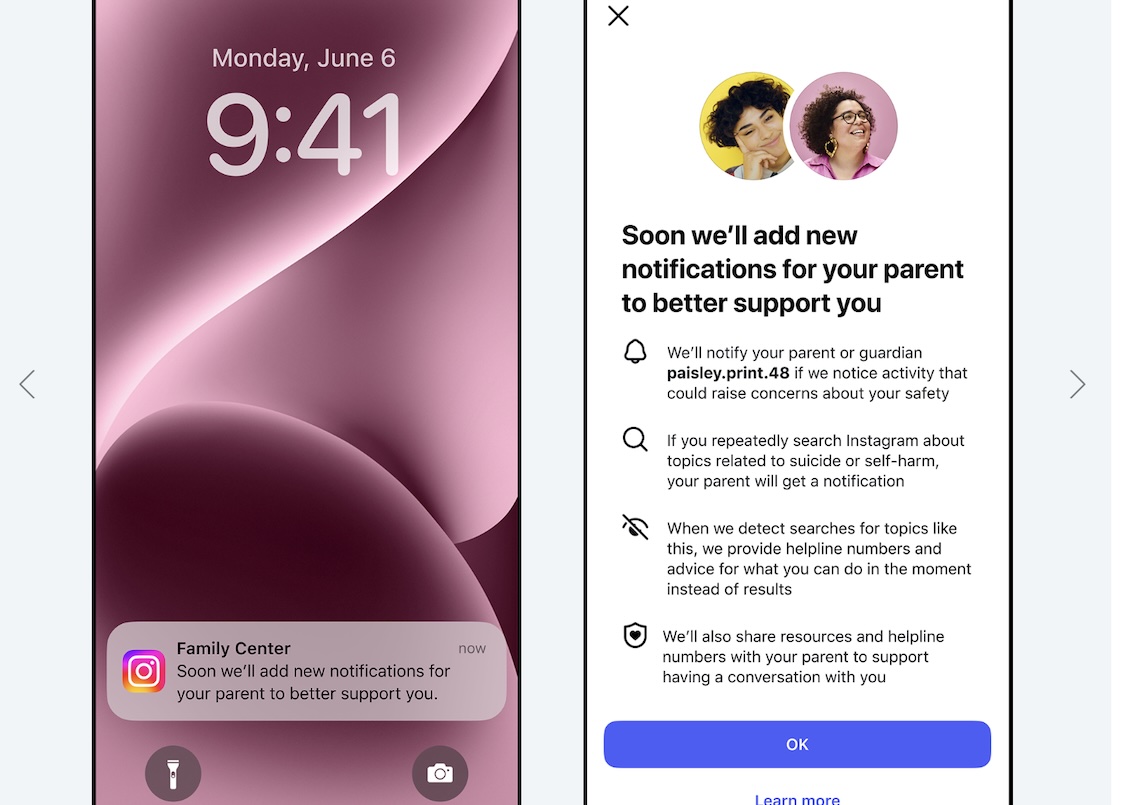

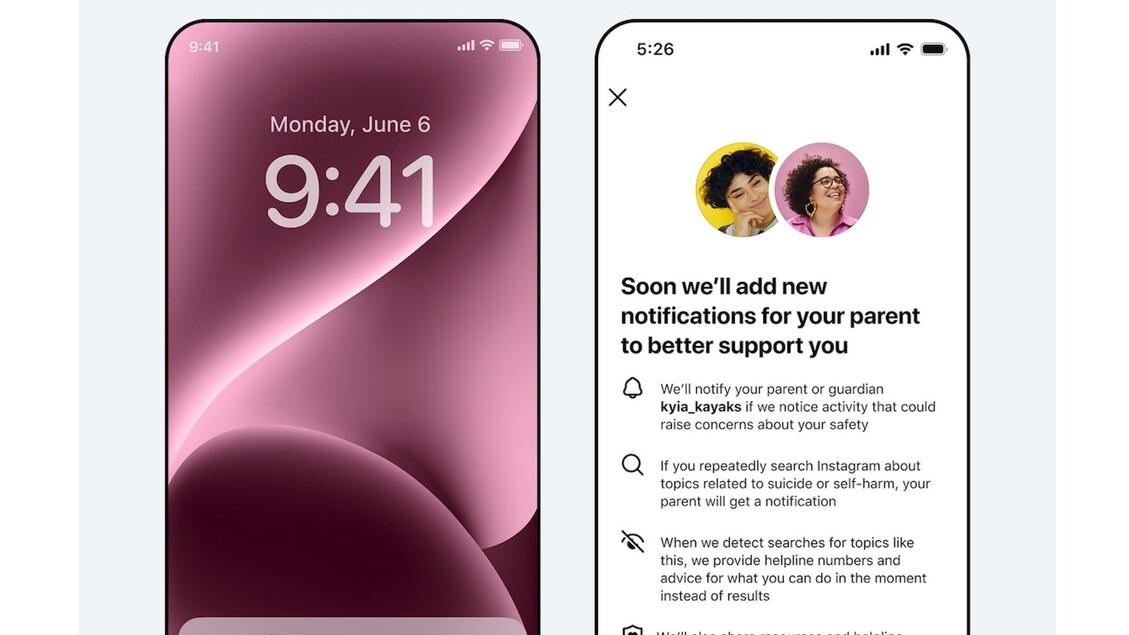

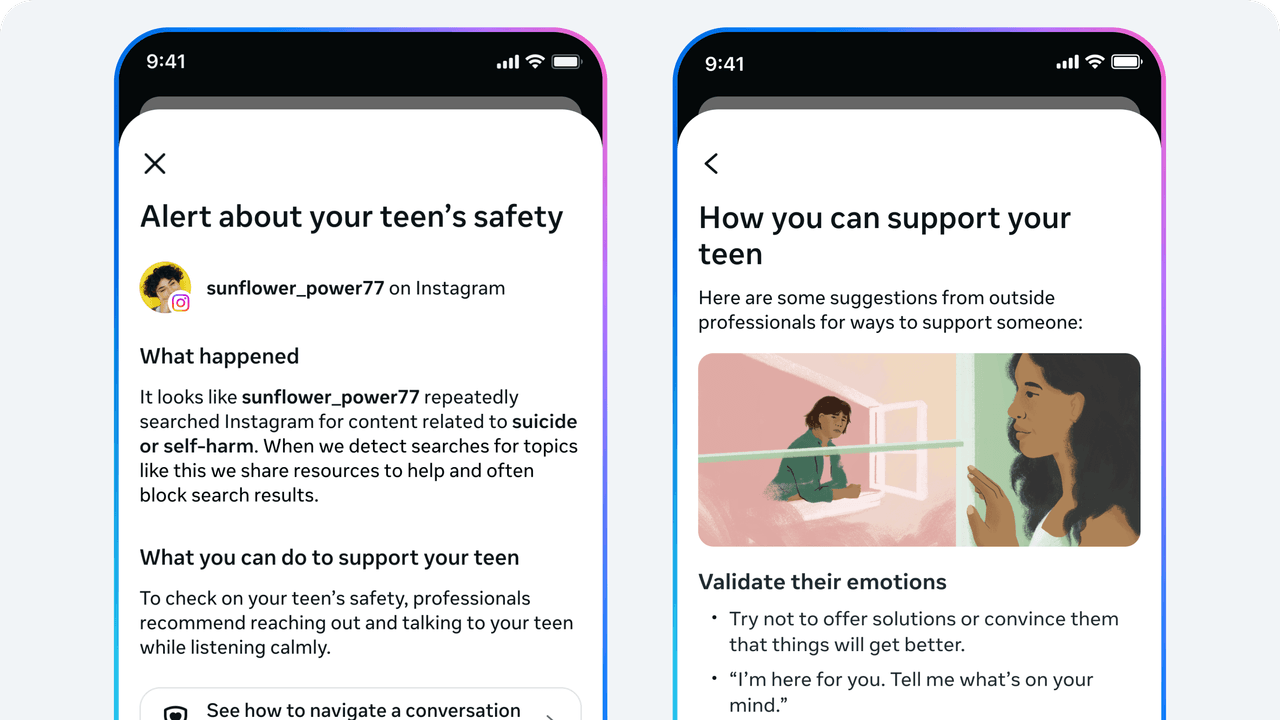

Instagram said on Thursday it will start alerting parents if their kids repeatedly search for terms clearly associated with suicide or self-harm. The alerts will only go to parents who are enrolled in Instagram’s parental supervision program.

Instagram says it already blocks such content from showing up in teen accounts’ search results and directs people to helplines instead.

The announcement comes as Meta is in the midst of two trials over harms to children. A trial under way in Los Angeles questions whether Meta’s platforms deliberately addict and harm minors. Another in New Mexico seeks to determine whether Meta failed to protect kids from sexual exploitation on its platforms. Thousands of families – along with school districts and government entities – have sued Meta and other social media companies, claiming they deliberately design their platforms to be addictive and fail to protect kids from content that can lead to depression, eating disorders and suicide.

Meta executives, including Mark Zuckerberg, the chief executive, have disputed that the platforms cause addiction. During questioning by the plaintiff’s lawyer in Los Angeles, Zuckerberg said he still agreed with his previous statement that the existing body of scientific work has not proved that social media causes mental health harms.