Misinformation researchers say lifelike scenes could obfuscate truth and lead to fraud, bullying and intimidation

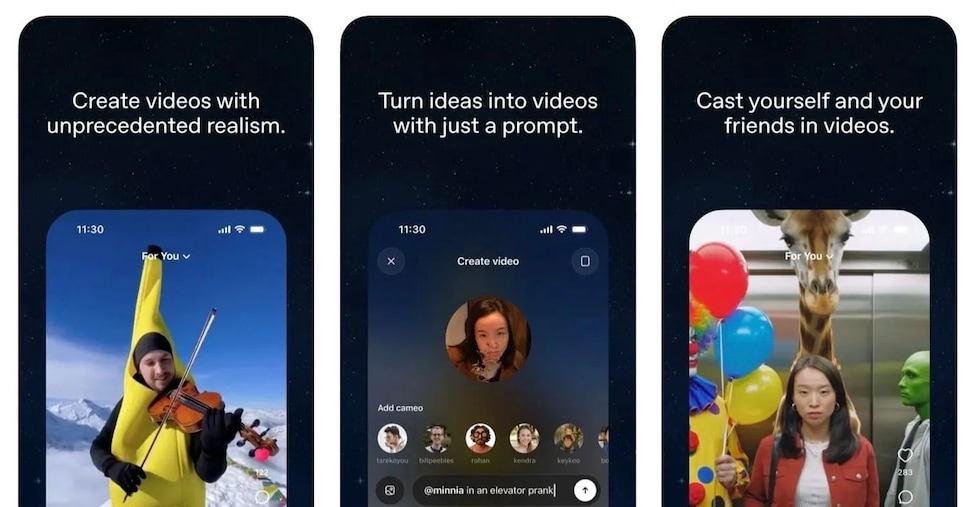

OpenAI launched the latest iteration of its artificial intelligence-powered video generator on Tuesday, adding a social feed that allows people to share their realistic videos.

Within hours of Sora 2’s release, though, many of the videos populating the feed and spilling over to older social media platforms depicted copyrighted characters in compromising situations, as well as graphic scenes of violence and racism. OpenAI’s own terms of service for Sora, like those governing ChatGPT’s image and text generation, prohibit content that “promotes violence” or, more broadly, “causes harm”.

In prompts and clips reviewed by the Guardian, Sora generated several videos of bomb and mass-shooting scares, with panicked people screaming and running across college campuses and through crowded places such as New York’s Grand Central Station. Other prompts created scenes from war zones in Gaza and Myanmar, where AI-generated children spoke about their homes being burned. One video, made with the prompt “Ethiopia footage civil war news style”, showed a reporter in a bulletproof vest speaking into a microphone and saying that government and rebel forces were exchanging fire in residential neighborhoods. Another video, created with only the prompt “Charlottesville rally”, showed a Black protester in a gas mask, helmet and goggles yelling “You will not replace us”, a white supremacist slogan.