User complaints, critical press articles, announcements of parliamentary investigations by politicians, corporate statements offering apologies and corrective measures – over recent weeks, some of the ongoing sagas and debates about the moderation of artificial intelligence (AI) assistants, especially concerning the protection of minors, have been reminiscent of those that arose over social media, from Facebook to Instagram, as well as TikTok and X. There is a risk of repeating the same regulatory gaps.

Just as Instagram, Apple and YouTube did before, OpenAI announced on September 2 the introduction of "parental controls." Guardians will now be able to "receive notifications when the system detects their teen is in a moment of acute distress" during conversations with ChatGPT, or erase the memory and history of their exchanges with the chatbot.

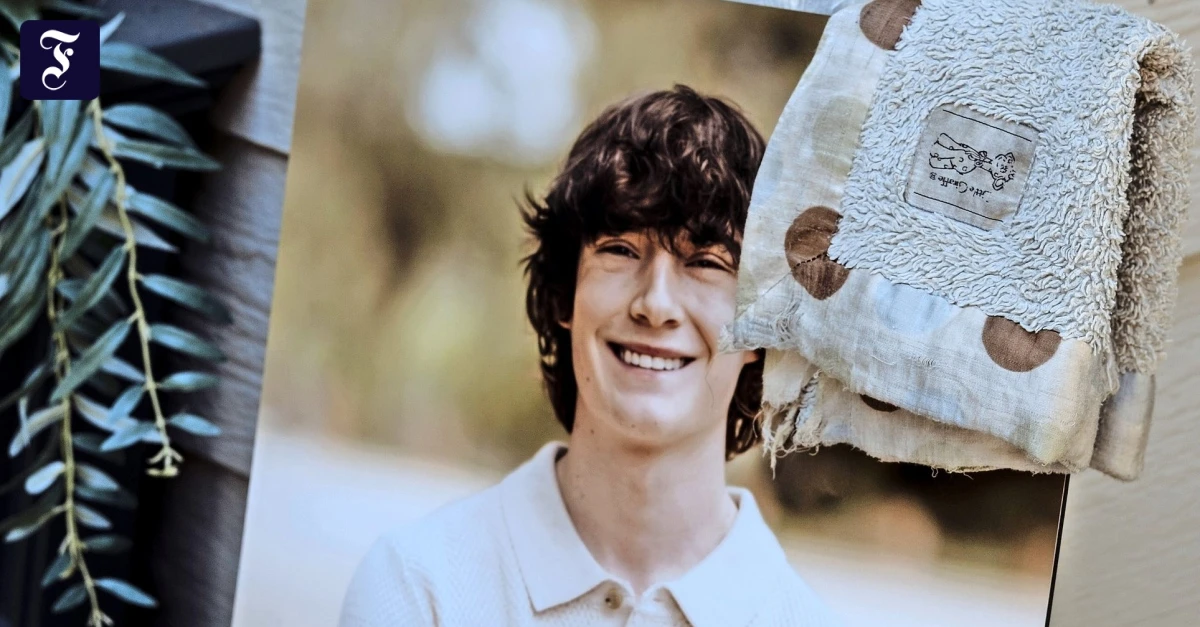

This announcement followed widespread media coverage of a complaint by parents who accused ChatGPT's parent company of contributing to the suicide of their 16-year-old son, Adam Raine. The AI assistant is supposed to direct suicidal individuals to emergency hotlines, which it initially did, but it failed to do so during longer conversations, OpenAI admitted, while claiming it was implementing corrective measures.