A comprehensive new study has revealed that open-source artificial intelligence models consume significantly more computing resources than their closed-source competitors when performing identical tasks, potentially undermining their cost advantages and reshaping how enterprises evaluate AI deployment strategies.

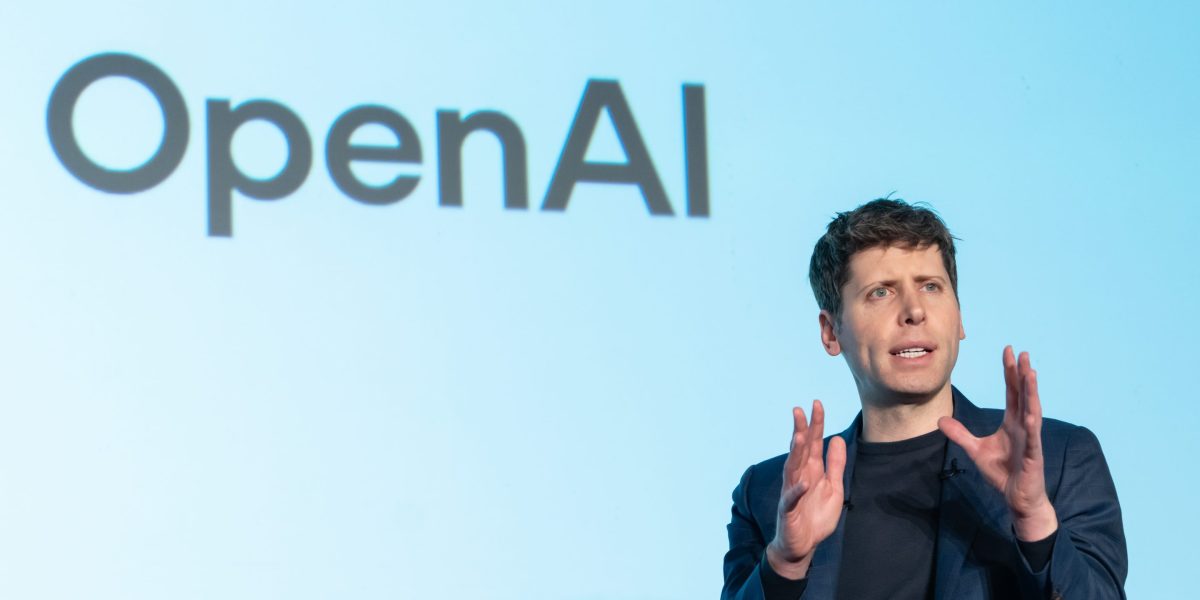

The research, conducted by AI firm Nous Research, found that open-weight models use between 1.5 and 4 times more tokens (the basic units of AI computation) than closed models like those from OpenAI and Anthropic. For simple knowledge questions, the gap widened dramatically, with some open models using up to 10 times more tokens.

Measuring Thinking Efficiency in Reasoning Models: The Missing Benchmark https://t.co/b1e1rJx6vZ