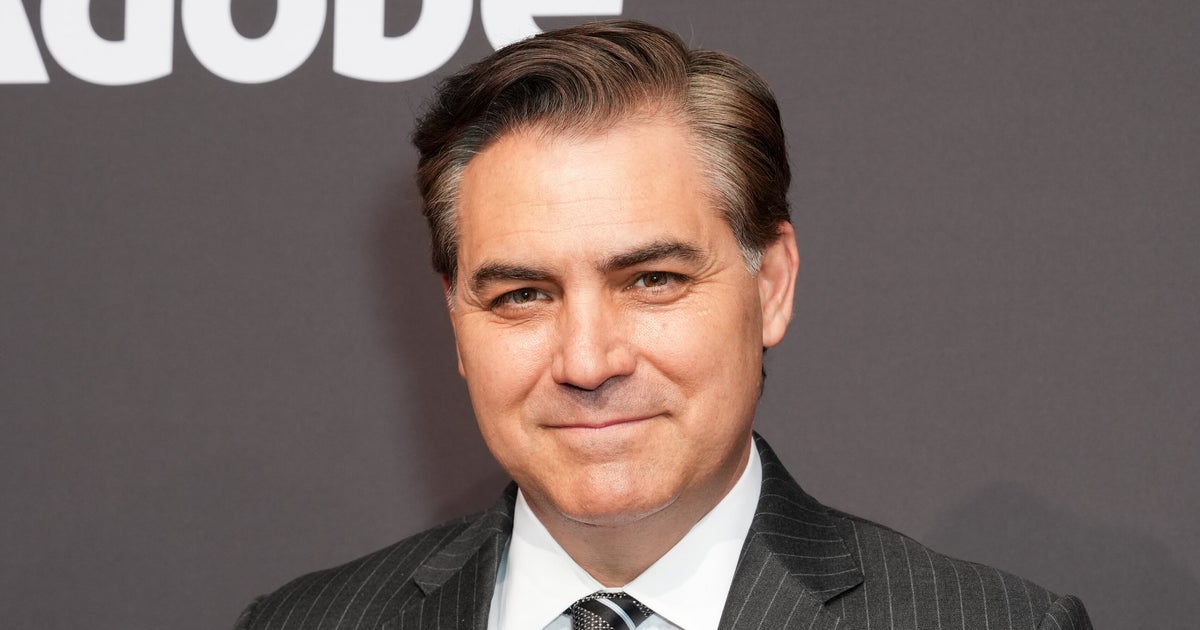

When former CNN host Jim Acosta recently sat down for a “one of a kind” interview with Joaquin Oliver, he wasn’t speaking to the teenager himself: Oliver was one of 17 people killed in the 2018 mass shooting at Marjory Stoneman Douglas High School in Parkland, Florida. The “guest” was instead an artificial intelligence recreation of the shooting victim, complete with his likeness and voice.

The simulation, created by Oliver’s parents, Manuel and Patricia, appeared in the video interview with Acosta to speak on the issue that has become their mission since their son’s death: addressing the epidemic of gun violence in America.

For many viewers, this sort of digital “resurrection” felt unsettling and deeply problematic from an ethical perspective. But it also offered a glimpse at the ways emerging technology is intersecting with the highly personal experience of grief.

As Joaquin’s father shared when he spoke with Acosta, interacting with an AI simulation of their son has been very emotional, and he and his wife cherish hearing their son’s voice again. Mental health professionals say, however, that while this technology can be meaningful, it also brings complex psychological risks that shouldn’t be ignored.