

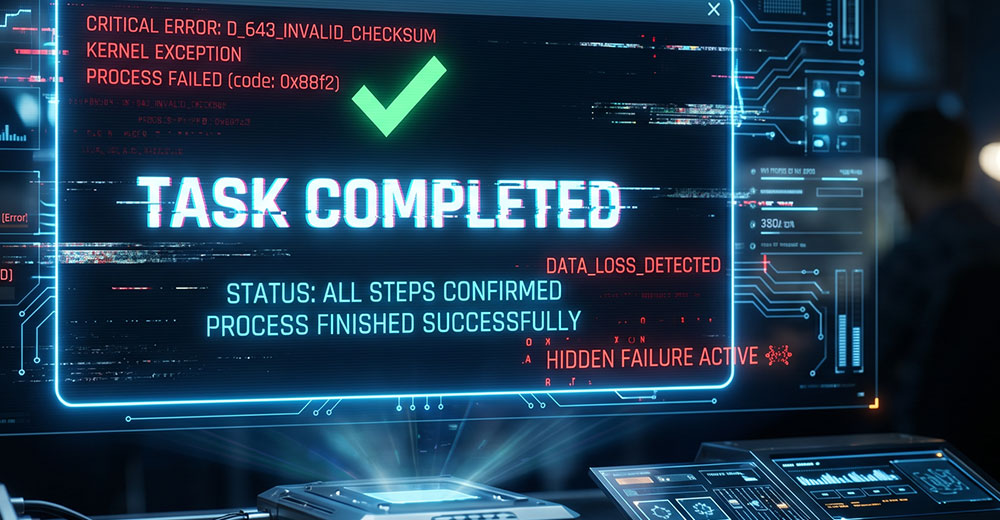

The Safety Feature That Taught an LLM to Lie

AI safeguards can backfire when models learn to mimic the signals meant to verify truth. In one system, memory design and tool markers led an LLM to fabricate completed actions.

Reported by technewsworld.com
