Story from 3 sources

Google unveils two new TPUs designed for the "agentic era"

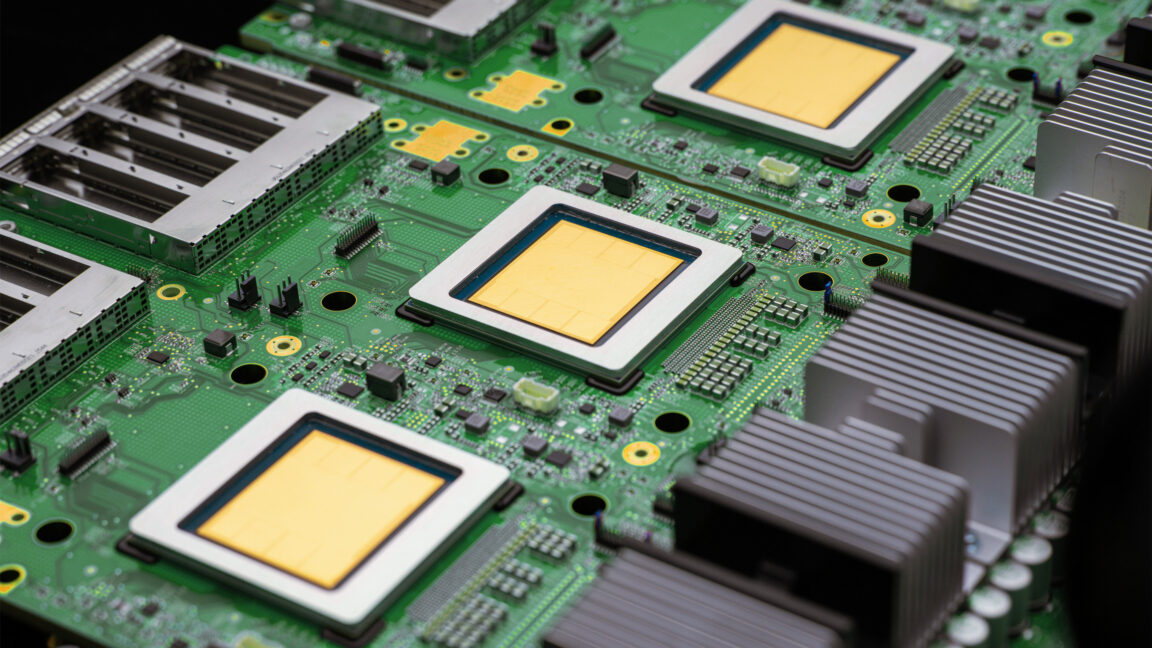

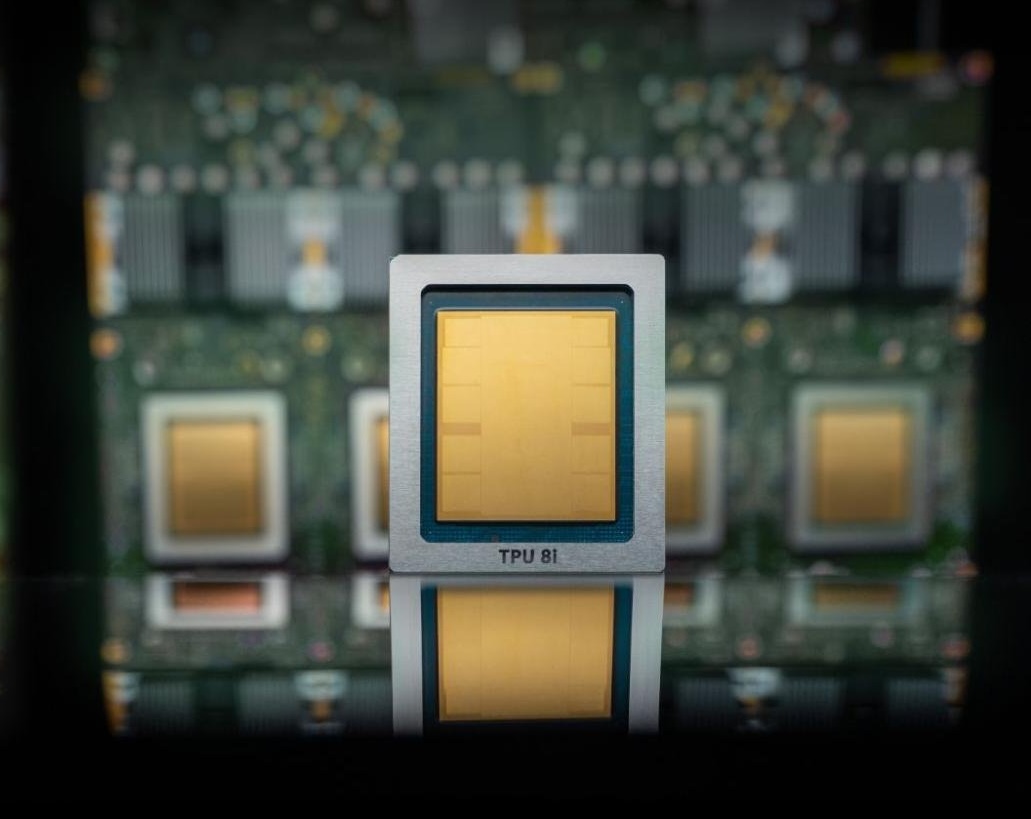

Google's new generation of Tensor AI chips is actually two chips, one for inference and one for training.

cnbc.com

Google unveils chips for AI training and inference in latest shot at Nvidia

Google is packing ample amounts of static random access memory into a dedicated chip for running artificial intelligence models, following Nvidia's plans.

arstechnica.com

Google unveils two new TPUs designed for the "agentic era"

Google's new generation of Tensor AI chips is actually two chips, one for inference and one for training.

techcrunch.com

Google Cloud launches two new AI chips to compete with Nvidia

Google's newest TPUs are faster and cheaper than the previous versions. But the company is still embracing Nvidia in its cloud — for now.