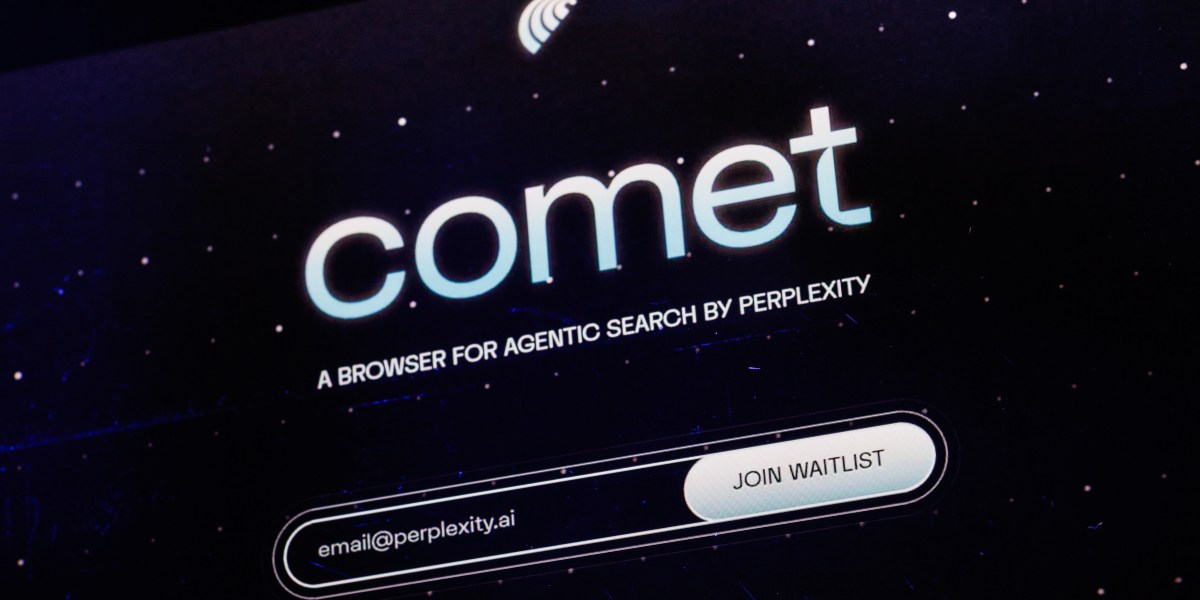

2026-04-21

15 min read

This post is also available in 日本語 and 한국어.

For us humans to interact with the online world, we need a gateway: keyboard, screen, browser, device. What is called "human detection" online is really the detection of patterns that humans exhibit when interacting through such devices. These patterns have changed in recent years: a startup CEO now uses their browser to summarize the news, a tech enthusiast automates booking their concert tickets when sales open at night, someone who's visually impaired enables accessibility features such as a screen reader, and companies route their employee traffic through zero trust proxies.

At the same time, website owners are still looking to protect their data, manage their resources, control content distribution, and prevent abuse. These problems aren’t solved by knowing whether the client is a human or a bot: there are wanted bots and there are unwanted humans. These problems require knowing intent and behavior. The ability to detect automation remains critical. However, as the distinctions between actors become blurry, the systems we build now should accommodate a future where "bots vs. humans" is not the important data point.

What actually matters is not humanity in the abstract, but questions such as: is this attack traffic, is that crawler load proportional to the traffic it returns, do I expect this user to connect from this new country, are my ads being gamed?

What we discuss under the term “bots” is really two stories. The first is whether website owners should let known crawlers through when they are not getting traffic back. We have touched on this with bot authentication with HTTP Message Signatures, for crawlers that want to identify themselves without being impersonated. The second is the emergence of new clients that do not embed the same behaviors as web browsers historically did, which matters for systems such as private rate limiting.

In this post, we explore how web protection works today, and how it must evolve when the line between bot and human is fading.

Moving past bots vs. humans

As AI assistants and privacy proxies challenge the capabilities of traditional bot detection, the Web needs new models for accountability. We believe that control should remain with the client, and that an open ecosystem of anonymous credentials is key to preserving user privacy while protecting origins from abuse.