For all their technical capabilities, large language models (LLMs) still have a memory problem. They often cannot retain context across conversations, and they do not always have the frameworks needed to access relevant data, which can make their results unreliable and untrustworthy.

NoSQL database pioneer MongoDB is taking on this problem, releasing new persistent memory, retrieval, embedding, and re-ranking features, all integrated into one platform. The company is also introducing new security connectivity, open-source plugins, and other framework integrations to support agentic AI workloads.

“Unlocking the power of agents requires memory,” Pete Johnson, MongoDB’s field CTO of AI, said during a press briefing. “Just like human memory, a good agentic memory organizes knowledge. It helps agents retrieve the right knowledge based on context and learn to make smarter decisions and take optimized actions over time.”

To advance automated retrieval and persistent agent memory, the company is adding Automated Voyage AI Embeddings in MongoDB Vector Search. The capability is now available in public preview.

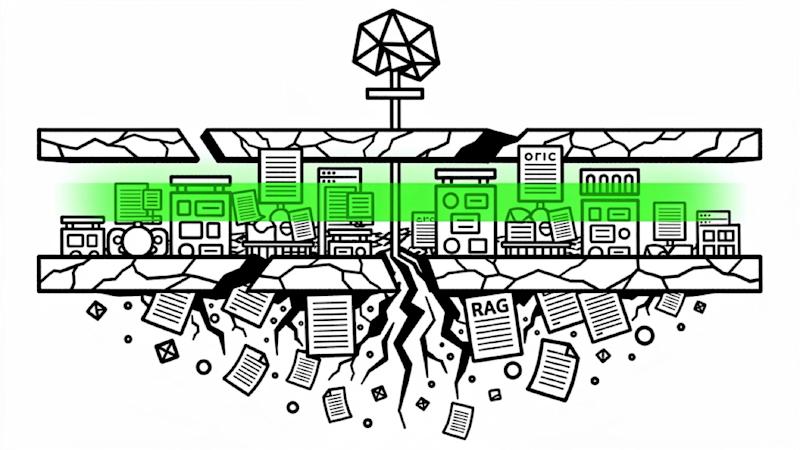

Fragmented AI stacks present another challenge. Builders grappling with them are often stuck paying what Ben Cefalo, MongoDB CPO, called the “synchronization tax.” To make data agent-searchable, developers must stitch together vector search, operational data stores, embedding models, and caches, then take the time to build complex data pipelines that keep everything in sync across systems.