The first category is the work that already existed. It includes novels, journalism, scientific papers, code repositories, academic textbooks, forum posts, lyrics, legal research, encyclopedias, personal essays, tutorials, art criticism, and the millions of pages people built and published on the internet. This work trains base models.

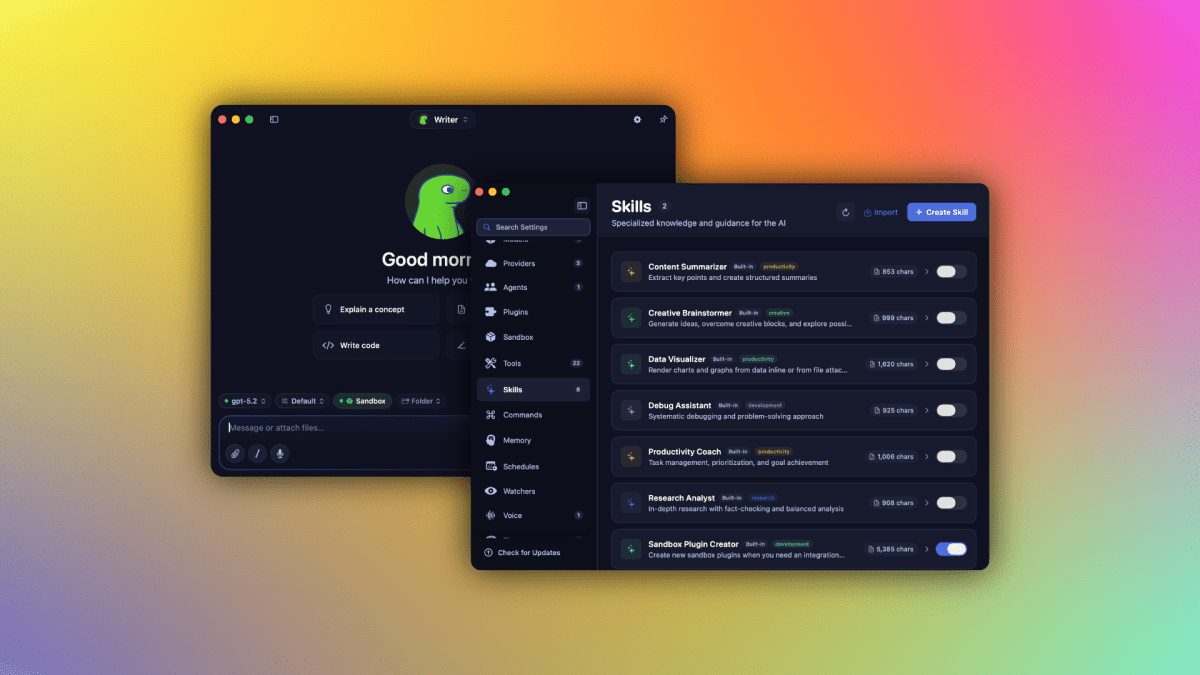

The second category is the work created after the scrape. It includes annotation, ranking, red-teaming, moderation, preference labeling, instruction writing, expert feedback, and exposure to the worst material the internet contains. This work turns a raw base model into something a customer can use and a company can sell.

The industry calls the first category "publicly available data." It calls the second "human feedback." Both labels hide the issue. Public access is not permission. Human feedback is not automatically fair pay, informed consent, psychological safety, or transparent labor.

Urro's position is simple. Modern AI has been built by converting other people's work into private model capability. The source material is renamed data. The people who clean, rank, and repair the model are hidden behind contractors. The finished system is then treated as proprietary.