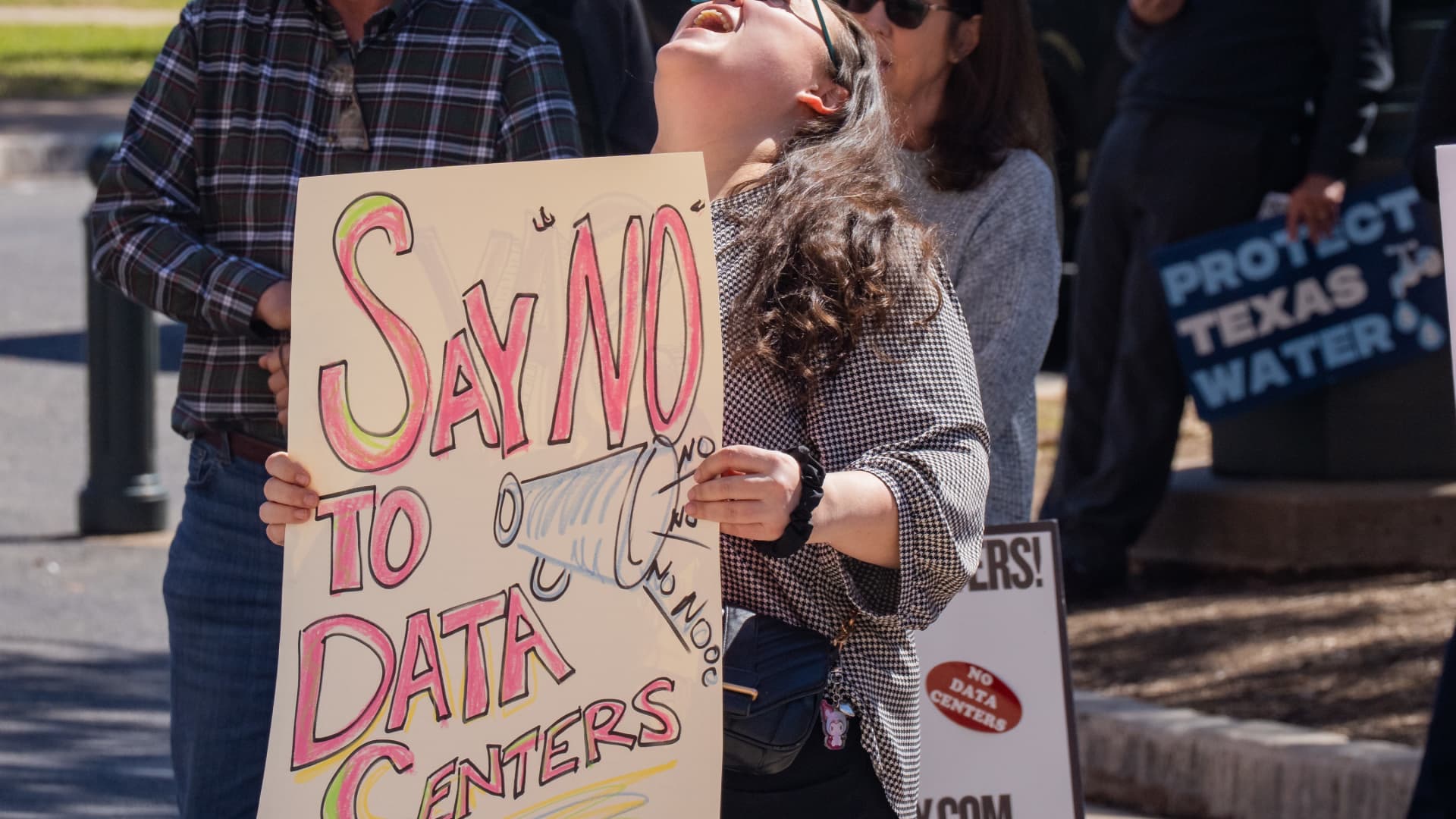

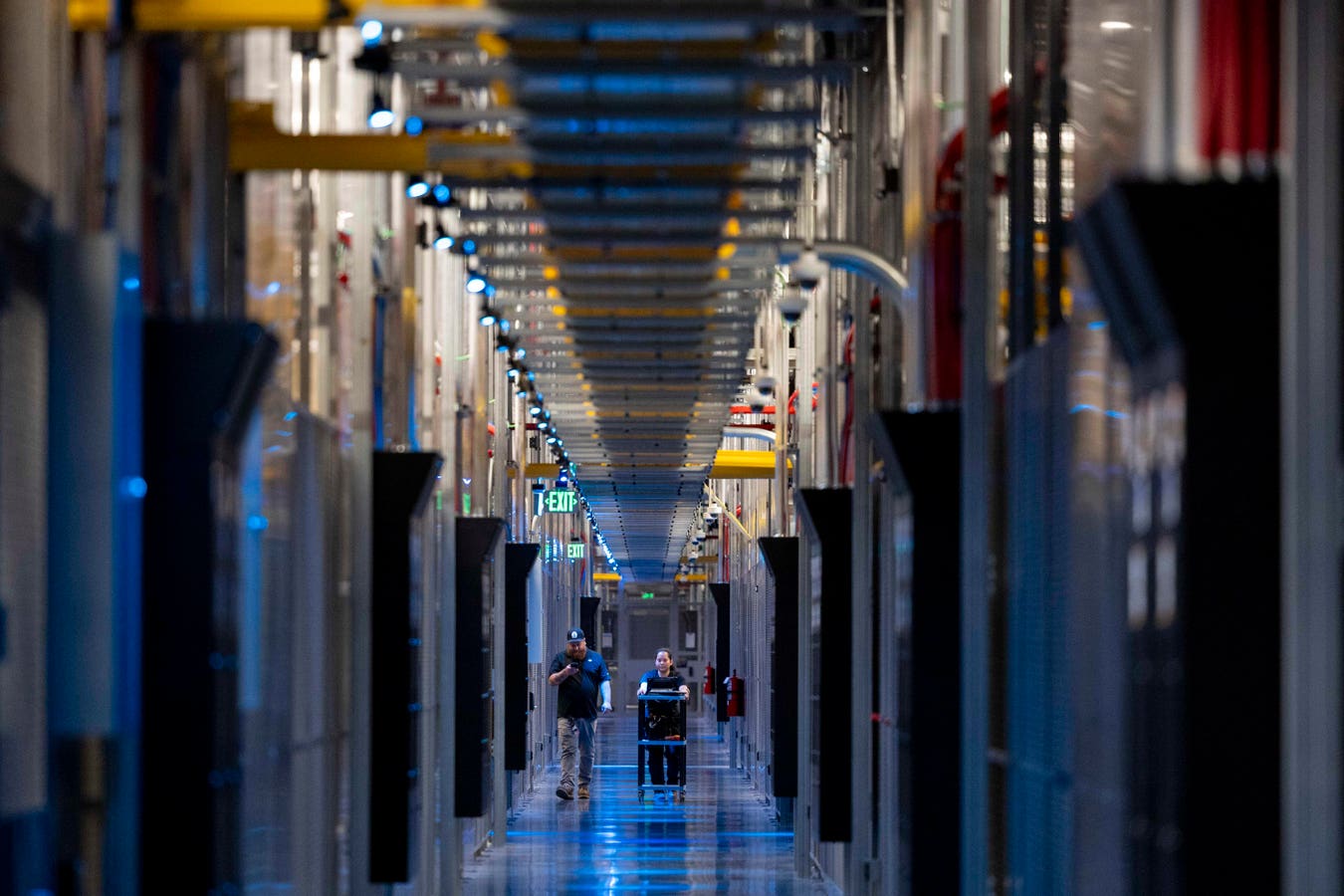

Businessman engineer in modern data center server room. (Getty)

The numbers are staggering: The five largest American cloud providers have committed between $650 billion and $700 billion to build data centers in 2026 alone, nearly double their 2025 levels. Television’s Shark Tank investor Kevin O'Leary is planning a data center complex in Utah that would cover more than twice the area of Manhattan and consume as much electricity as Utah and Nevada currently do combined.

Yet even some AI insiders are beginning to question whether the industry is building for a future that may never fully arrive. In a recent interview about decentralized AI infrastructure, Tether CEO Paolo Ardoino argued that “it’s not at all clear that the compute paradigm that we’re currently operating under is going to continue, in which case maybe you don’t need all those data centers.”

The industry is committing capital at levels that assume not just sustained growth, but exponential increases lasting years into the future. If sheer scale were proof of sound strategy, this would be the most rational building program in American history. But it isn't.

Construction Is Already Stalling

Despite the headline commitments, many U.S. data centers announced for 2026 have been delayed or canceled outright, according to Bloomberg and other news outlets. The reason isn’t wavering demand; it’s that the physical prerequisites for a functioning data center simply aren’t available in the markets where most of them are planned. Meanwhile, the wait to connect power-hungry data centers to the U.S. power grid is measured in years, not months. Worse, the grid, a patchwork of three aging interconnected networks designed for a different industrial era, is not equipped to handle such demand.
The nation’s peak electricity supply is on track to fall short by 2028, with a gap reaching an estimated 175 gigawatts by 2033, according to French energy-technology firm Schneider Electric.

The Demand Mirage

More concerning is that much of this construction is based on demand that may itself be overstated. Analysts note that a growing share of hyperscale data-center capacity is financed and leased through layered arrangements involving colocation operators, infrastructure investors, and hyperscalers, making headline demand harder to interpret and potentially overstating underlying end-user consumption.

Paolo Ardoino, chief executive officer of Tether Holdings SA. Photographer: Camilo Freedman. © 2026 Bloomberg Finance LP

Tether’s Ardoino believes most AI will operate at the edge with no connection to the cloud. “What will run on centralized data centers, on these behemoths, will be a niche,” he says.

GPUs reach the end of their life in roughly three to four years. The buildings outlast the machines inside them by a generation. We are constructing permanent real estate to house rapidly depreciating hardware.

The Dot-Com Fiber Parallel

We’ve seen this before. Between 1998 and 2000, U.S. telecom companies laid more than 80 million miles of fiber optic cable, driven in part by wildly inflated demand projections. Now defunct WorldCom infamously claimed internet traffic was doubling every 100 days, roughly ten times the actual rate. The result was catastrophic overcapacity, a sector collapse, and the bankruptcy of several major players.

Cheaper Inference Doesn't Mean Less Capacity Is Needed, Until It Does

The economics of running AI are adding an ironic subplot. The cost of inference (the computation required to generate each AI response) has collapsed by a factor of 1,000 in just three years. Next-generation inference chips promise further steep declines.
For now, that is accelerating consumption because cheaper inference triggers more usage, particularly as agentic AI systems chain dozens of model calls per task. But inference is migrating toward specialized silicon and, increasingly, toward edge devices such as smartphones and laptops. Every workload that moves to the edge is a workload that no longer needs a row of GPU servers in a warehouse in rural Utah.

A Map That Makes No Sense

Kevin O'Leary in New York City. (Photo by Roy Rochlin/Getty Images)

Which brings us to O’Leary’s project and to the geographic mismatch at the heart of the bubble argument. Box Elder County, Utah, where O’Leary says he plans to build, has no established tech workforce and limited water resources. What it does have is land and a county commission willing to approve the project over the objections of thousands of residents. This is the geography of the bubble in miniature: massive, headline-grabbing announcements in markets chosen for their permissiveness rather than their suitability, followed by years of permitting battles, infrastructure gaps, and community opposition.

The Reckoning

None of this means AI is a mirage. The technology is real, the demand is real, and enterprise adoption is accelerating. But the specific bet being placed (that hundreds of billions of dollars in GPU-dense facilities can be physically constructed, powered, and brought online across the United States before the hardware inside them depreciates and before inference migrates to the edge) is a bet with serious structural vulnerabilities.

History suggests that transformative technologies often arrive roughly as promised, while the infrastructure built to carry them tends to arrive too early, in the wrong places, and at the wrong cost.

In the mid- to late-1800s, private companies and governments financed thousands of miles of railroad track, based on optimistic projections of trade, population growth, and land value.
Many lines were built into sparsely populated areas or in redundant corridors, leading to overcapacity, bankruptcies, and a wave of consolidations.

We’ve even seen this pattern before with data centers. In the late 1990s and early 2000s, companies built massive “Internet hosting” data centers, anticipating an explosion of web traffic and e-commerce. While that traffic eventually materialized, demand initially fell far short of projections, leaving many of these facilities underutilized or empty.

The internet changed the world. But many of the companies that spent huge sums wiring it in the late 1990s and early 2000s did not survive long enough to celebrate the revolution.

Why The Data Center Boom May Be Digging In The Wrong Dirt

AI insiders are beginning to question whether the industry is building data centers for a future that may never fully arrive.