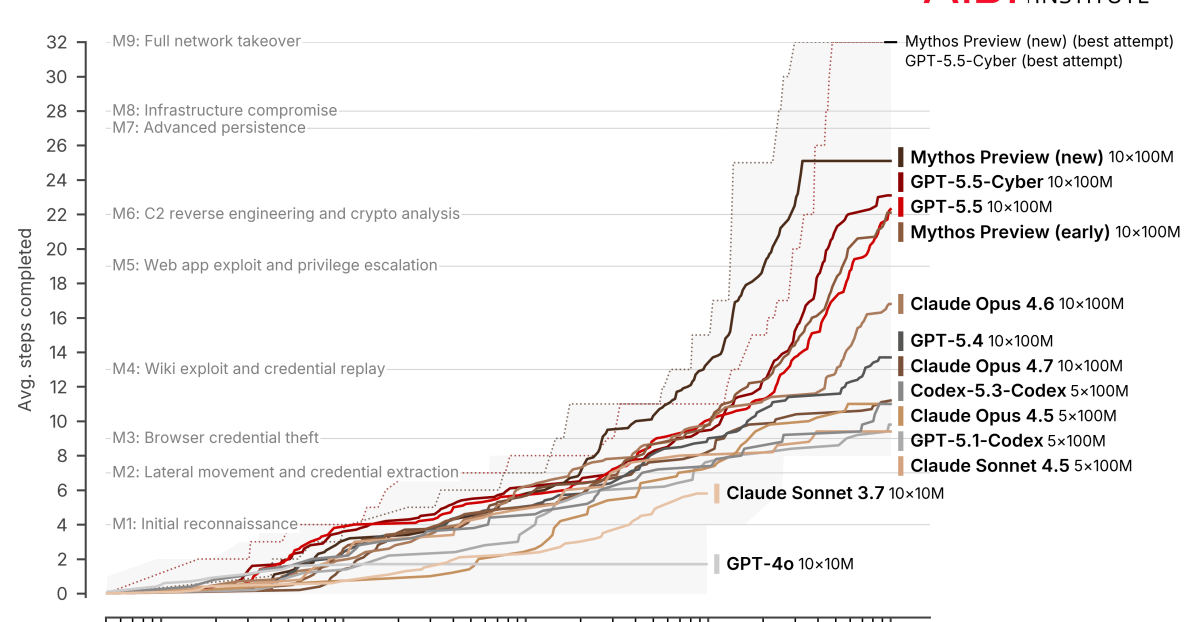

The evaluation organization METR is hitting the limits of its test methodology when measuring Claude Mythos' capabilities. Meanwhile, Palo Alto Networks warns that frontier models like Mythos are fundamentally reshaping the cybersecurity landscape.

METR, which specializes in AI risk assessment, evaluated an early version of Claude Mythos Preview during a limited time window in March 2026. The organization estimates a 50 percent time horizon of at least 16 hours, with a 95 percent confidence interval of 8.5 to 55 hours.

The 50 percent time horizon is the task length, measured by how long a human would need to finish the task, at which the model succeeds half the time. METR anchors task length to reference points such as training a classifier (around 45 minutes) or training an adversarially robust image model (around four hours).
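To make the metric concrete, here is a minimal sketch of how a 50 percent time horizon could be computed. It assumes a logistic curve fitted to success versus the base-2 logarithm of human completion time, which the article does not confirm as METR's exact procedure. The task data, the function names `fit_logistic` and `time_horizon_minutes`, and the optimizer settings are all hypothetical and chosen only for illustration.

```python
import math

# Hypothetical (human_minutes, model_succeeded) pairs. Illustrative only,
# not METR data.
tasks = [
    (1, 1), (2, 1), (4, 1), (8, 1), (15, 1),
    (30, 1), (30, 0), (60, 1), (60, 0),
    (120, 1), (120, 0), (240, 0), (240, 1),
    (480, 0), (480, 0), (960, 0), (960, 1), (1920, 0),
]

def fit_logistic(xs, ys, steps=20000, lr=0.05):
    """Fit P(success) = sigmoid(a + b * x) by gradient ascent on the log-likelihood."""
    a, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_a = 0.0
        grad_b = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(a + b * x)))
            grad_a += y - p
            grad_b += (y - p) * x
        a += lr * grad_a / n
        b += lr * grad_b / n
    return a, b

def time_horizon_minutes(a, b, p=0.5):
    """Solve sigmoid(a + b * log2(minutes)) = p for minutes."""
    logit = math.log(p / (1.0 - p))
    return 2.0 ** ((logit - a) / b)

# Assumption: task length enters the model on a log2 scale.
xs = [math.log2(minutes) for minutes, _ in tasks]
ys = [succeeded for _, succeeded in tasks]

a, b = fit_logistic(xs, ys)
horizon = time_horizon_minutes(a, b)
print(f"Estimated 50% time horizon: {horizon:.0f} human-minutes")
```

The fitted slope `b` is negative because success becomes less likely as tasks get longer, and the horizon is the point where the curve crosses 50 percent. With few long tasks in the data, small changes in their outcomes shift that crossing point noticeably.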

According to METR, this value for Mythos is "at the upper end of what we can measure without new tasks." Of the 228 tasks in the test suite, only five are classified as 16 hours or longer. That makes measurements in this range "unstable and less meaningful than at ranges with better task coverage." METR therefore doesn't provide precise estimates for models above this threshold.