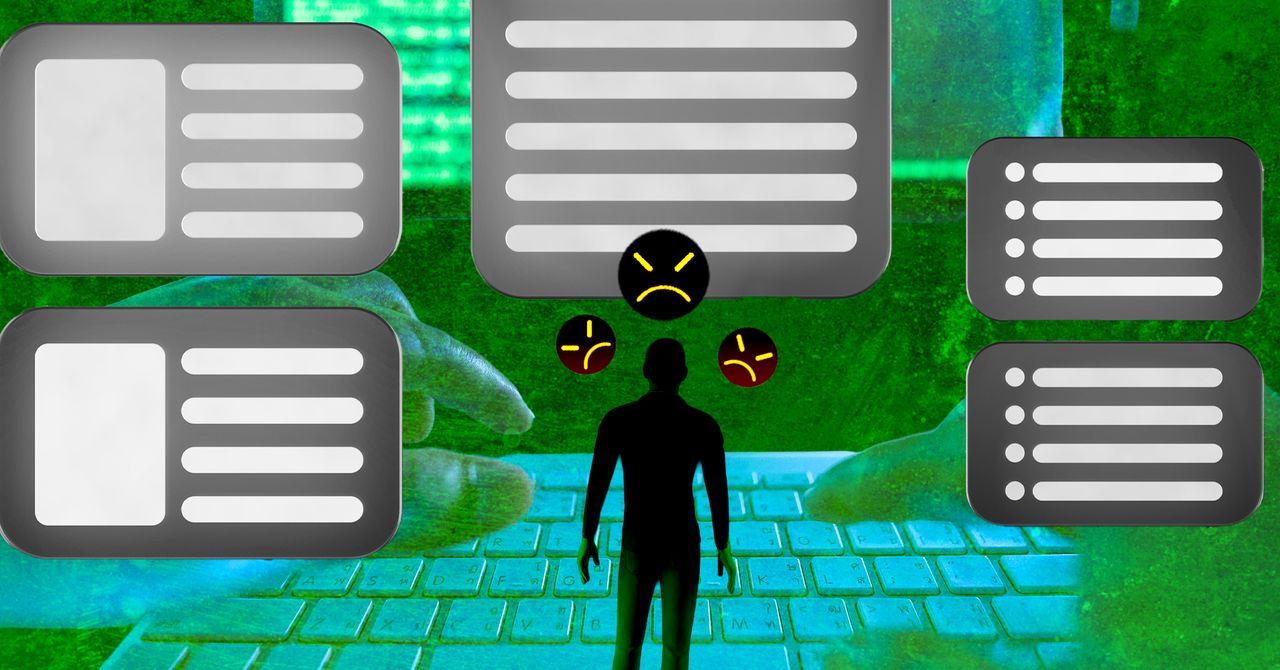

On Monday, a brand-new Reddit account popped up on the widely read forum r/AmItheAsshole, where users have their personal disputes arbitrated by strangers. This particular user asked if they had crossed a line by “refusing to babysit my stepmother’s kids because I have my own job and responsibilities.” The post itself was succinct, straightforward, and grammatically clean, explaining a situation in which the person’s stepmother and father often expected them to provide childcare on little notice, eventually leading to an argument.

“Now there’s tension at home, and I’m starting to wonder if I handled it the wrong way,” the redditor concluded. “I do understand that raising kids is stressful, but I also feel like I shouldn’t be obligated to take on that responsibility when it’s not my role.” The responses to this individual were largely supportive: The kids were not theirs to look after, many people replied, and moving out of the house would be the best course of action.

But according to AI detection software developed by Pangram Labs—which claims an accuracy rate of 99.98 percent and a false positive rate of just one in 10,000—the original story of family discord was AI-generated.

I saw it flagged as AI content while scrolling the page, thanks to the latest version of Pangram’s Chrome extension, which rolls out to the public this week. At the paid tier of $20 per month, the tool scans posts on social sites including Reddit, X, LinkedIn, Medium, and Substack in real time, labeling them as human-written, AI-generated, or drafted with assistance from AI. The analysis also includes a measure of Pangram’s confidence in the conclusion: low, medium, or high.