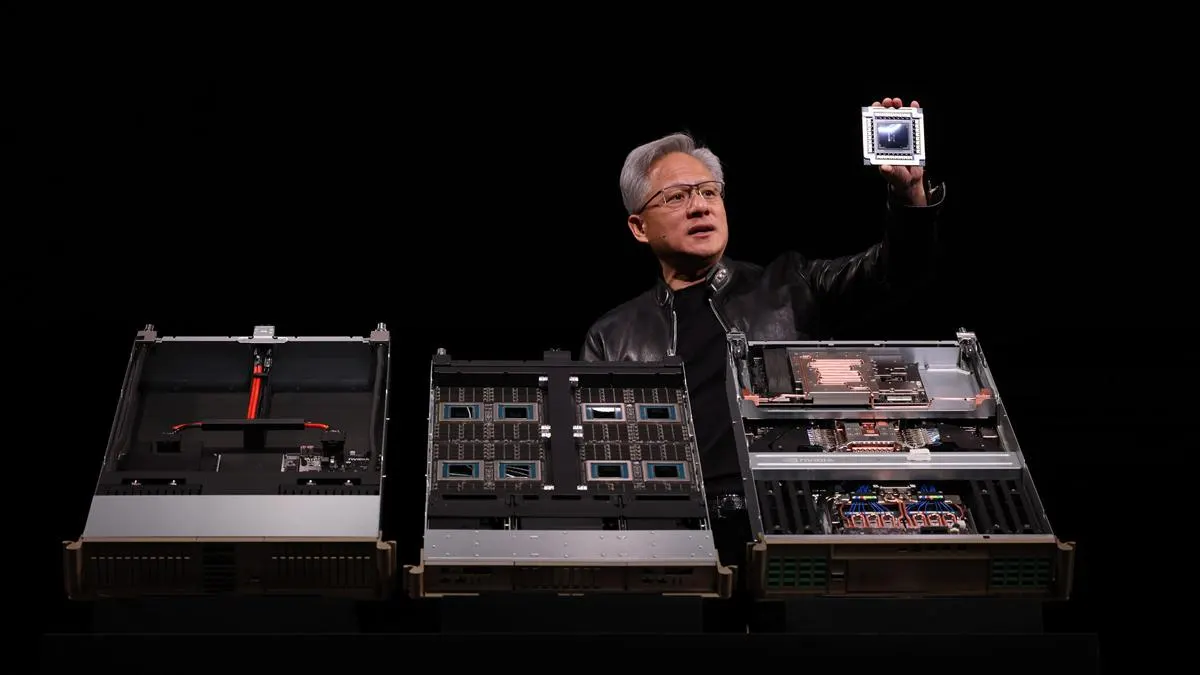

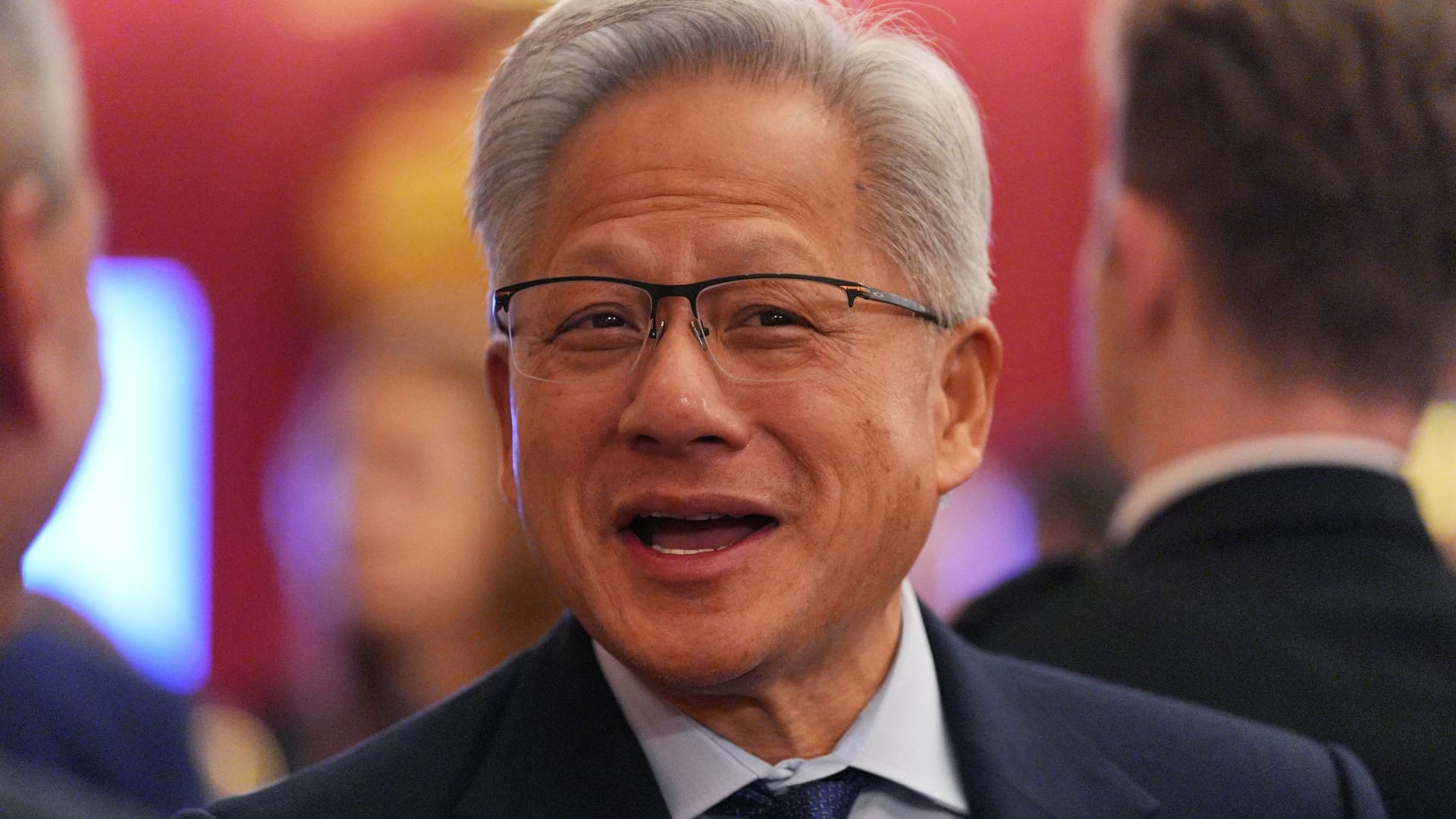

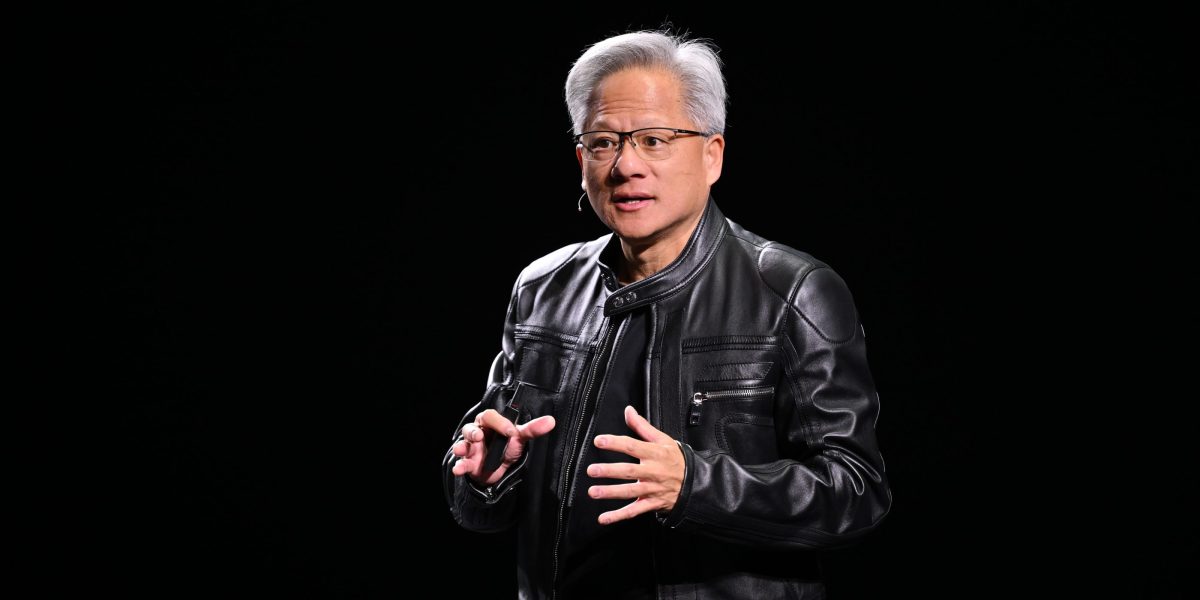

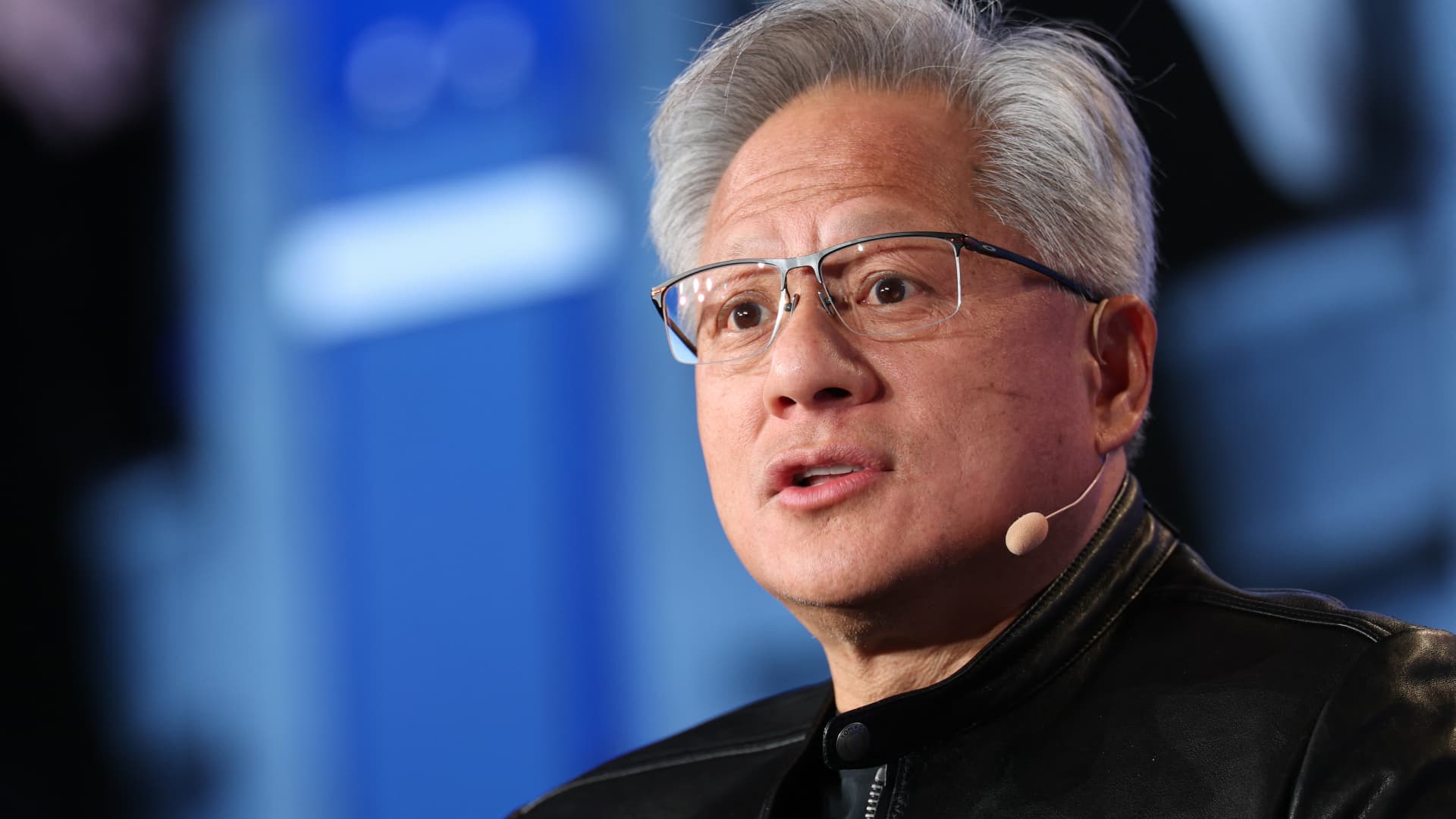

Nvidia GTC (or GPU Technology Conference) has become one of the world’s leading AI conferences, growing every year since its launch in 2009 along with the company’s influence and revenues. But this year marked a shift in emphasis. In the past, the company focused on how it was expanding into new markets; this year, it demonstrated how it would expand within those markets, especially as a key player across the AI landscape. In a clear sign of Nvidia’s ambitions and its current success, it upgraded its projection of $500 billion in AI revenue by 2026 to a staggering $1 trillion by 2027. In particular, CEO Jensen Huang addressed growing needs in AI inferencing as well as the rapid growth of agentic workloads and how that growth might impact all of AI computing.

Nvidia’s Vision For The Full Stack: The 5-Layer Cake Of AI

Since December 2025, Huang has been promoting the idea of a “five-layer cake” of AI. This concept seems to encapsulate the company’s overall strategy for AI as it tries to communicate the inherently vertical nature of the technology, which is far more complex than a simple app or model. AI is portrayed as critical infrastructure with multiple layers ranging from underlying energy requirements all the way up to individual applications. The framing also attempts to simplify the complexity of a complete AI stack for the average person.

Nvidia’s five-layer cake of AI. Photo courtesy of Nvidia

At the base of this stack is energy, which is drawing more attention as the limiting factor for how much compute can be built in any given location. In turn, the chips using that energy determine the amount of compute available to solve AI problems. Next comes the infrastructure that supports these chips with land, buildings, power delivery, computing equipment, cooling and networking. The infrastructure enables AI models, which vary depending on the application and the use case.
At the top level, the applications themselves harness model outputs to deliver results for consumers, business users, governmental entities and so on, which is the basis of AI’s economic value.

Huang said the entire computing stack had to be reinvented to support “the largest infrastructure buildout in human history.” Nvidia is using this messaging to position itself not only as a chipmaker, but as a foundational enabler for all computing because all computing is now moving toward AI. The CEO talks about Nvidia’s approach combining “vertical integration and horizontal openness,” meaning that its models are open to anyone, but its approach to computing is vertically integrated at every layer.

Vera Rubin Pod, LPX And The 10x Revenue Opportunity

Vera Rubin is the forthcoming high-end compute platform for Nvidia that combines its Vera CPUs and Rubin GPUs; it is scheduled to start shipping a few months from now. The Vera Rubin Pod is Nvidia’s rack-scale offering that promises to deliver yet another significant increase in AI compute density within data centers. It incorporates seven types of Nvidia’s chips across five different rack systems to create a high-performance configuration that the company says will enable token generation (and revenue) at up to 10x the rate of last generation’s Blackwell platform. Nvidia claims that this could enable as much as a $300 billion annual inference opportunity.

Chart breaking down Nvidia’s architectural inference opportunities. Photo courtesy of Nvidia

One of the biggest enabling factors of the Vera Rubin Pod is the use of Groq’s LPX platform, leveraging the Groq 3 LM30 chip. Groq’s language processing unit (LPU) is inherently different from Nvidia’s GPUs, with a significant amount of SRAM (static random access memory) as opposed to DRAM (dynamic random access memory). Because this design increases memory bandwidth by an astounding 55x compared to a Rubin GPU, the Groq LPU is inherently good at handling extremely memory-intensive tasks.
This helps explain why Nvidia acquired Groq’s IP and its most important talent for $20 billion in December 2025.

Growing Beyond GPUs: A Diversified Hardware Focus

The Groq example shows how Nvidia is moving away from trying to make its GPUs the solution to every problem. While Nvidia’s ecosystem has long expanded beyond GPUs, that expansion has almost always been in service of the GPU, whether through CPUs, networking chips or software. With the introduction of the Groq 3 LPUs, Nvidia’s compute architecture has truly outgrown a GPU-only approach.

We are also seeing Nvidia start to offer products such as its own in-house Arm-based CPUs within a CPU-only rack solution. The company is positioning the new 88-core Vera CPU as competitive with Intel and AMD for data centers. These CPUs can be configured with up to 256 chips per rack, and Nvidia already has customers including Meta looking to deploy them. Moving beyond CPUs, Nvidia also talked about its BlueField-4 STX solution for storage-oriented applications; according to the company, this product improves performance to prevent storage from being a bottleneck for AI output.

More Vera Rubin Products: Nvidia DSX AI Factory And Space 1

Nvidia DSX is the company’s latest Vera Rubin–based platform for its AI Factory offering, which includes a reference design for AI factories and leverages Nvidia’s Omniverse Digital Twin. (For background on Omniverse, see my writeup of GTC 2025 and my colleague Bill Curtis’ detailed look at Nvidia’s moves in physical AI from February 2025.) The company calls this a turnkey solution that takes advantage of all the capabilities Nvidia and its partners have created to help plan, build and maintain AI factories.
This platform is designed for implementations with hyperscalers and the biggest enterprises that are looking to deploy their own industrial-scale AI without having to piece together infrastructure.

There’s also been much buzz lately around AI computing in space, with many startups being launched to solve the problems of deployment. Prior to the announcement of the Space 1 module based on Vera Rubin, Nvidia mostly deployed embedded Jetson Orin chips and H100 GPUs for space applications. Nvidia says that the new space-focused module was designed for these extreme applications and delivers as much as 25x the AI performance in space as the H100. It also features lockstep processing and error correction codes to ensure that operating in space doesn’t affect output. That said, I believe that Space 1 will address a fairly niche application and shouldn’t be seen as validating the need for data centers in space.

NemoClaw: Building Specialized Agents

Agentic AI has quickly become an important area of focus as agents such as Claude Code are helping users address practical tasks. From the technical side, agents are reshaping the way IT infrastructure is built and how the chips inside that infrastructure are designed. One of the most interesting recent developments is the introduction of OpenClaw, an open-source agent that runs locally using Nvidia’s new open-source NemoClaw stack for safer deployments of task-oriented agents.

The NemoClaw agent toolkit is designed for building, training and deploying autonomous, secure AI agents, which should make it easier for anyone to create their own agents.
In developing the new stack, Nvidia worked closely with the creator of OpenClaw and security researchers to help prevent unwanted agentic actions or potentially dangerous outcomes.

DLSS 5.0: The Controversial Deployment Of Neural Rendering

Nvidia surprised many, including me, with the announcement of DLSS 5.0, which is the company’s optional AI-assisted feature for enhancing image quality and delivering faster rendering. It works by rendering at a lower resolution and then using AI to upscale the image to the native resolution. Most users seem to be happy with previous implementations of DLSS. Now, DLSS 5.0 introduces neural rendering techniques to further improve lighting in scenes that might otherwise look flat. It also gives game developers more control over the user experience, and they can tune DLSS 5.0 to change how it affects the visuals of their games.

Lots of people have reacted negatively to early photos and videos made using DLSS 5.0. I think this is a significant overreaction. There are many gamers who hate most AI-enhanced things, and the reaction to DLSS 5.0 may be a culmination of that frustration. Having seen the demos in person, I can say that almost all of the enhancements seem to be positive and enhance realism, and this is coming from a serious photographer who can be very picky about editing tools. Beyond that, DLSS 5.0 is still far from shipping, so it’s unclear exactly what the final product will look like or which GPUs will be able to run it.
The current instantiation runs on two Nvidia RTX 5090 graphics cards, but, according to Nvidia, the software will be optimized to run on a single GPU by the fall.

The Inference Inflection Point And Huang’s $1 Trillion Market Projection

In his remarks at GTC, Huang talked about the growing AI revenue opportunity for the industry, adding that “Demand for Nvidia GPUs is off the charts.” He claimed that inference growth has driven significantly more revenue since the special edition of GTC held in Washington, D.C., in late 2025 (which I wrote about here).

Even though that was only a few months ago, the company is now upgrading its projected $500 billion revenue opportunity through 2026 to $1 trillion through 2027. This means that Nvidia not only expects this year to finish strong but also expects 2027 to be even stronger, by hundreds of billions of dollars. From my perspective as an analyst, it made sense to think that the transition from AI training to widespread AI inference and pervasive agentic AI would be a significant driver for the industry. But if Huang is right, it could prove to be even more of a catalyst for growth (for Nvidia and its partners) than anyone would have imagined not long ago.

Disclosure: Nvidia is an advisory client of my firm, Moor Insights & Strategy.

Nvidia GTC 2026 And The Ambitious Path To $1 Trillion In AI Revenue

Nvidia outlines AI expansion vision at GTC 2026 with its $1T revenue goal and full-stack push.