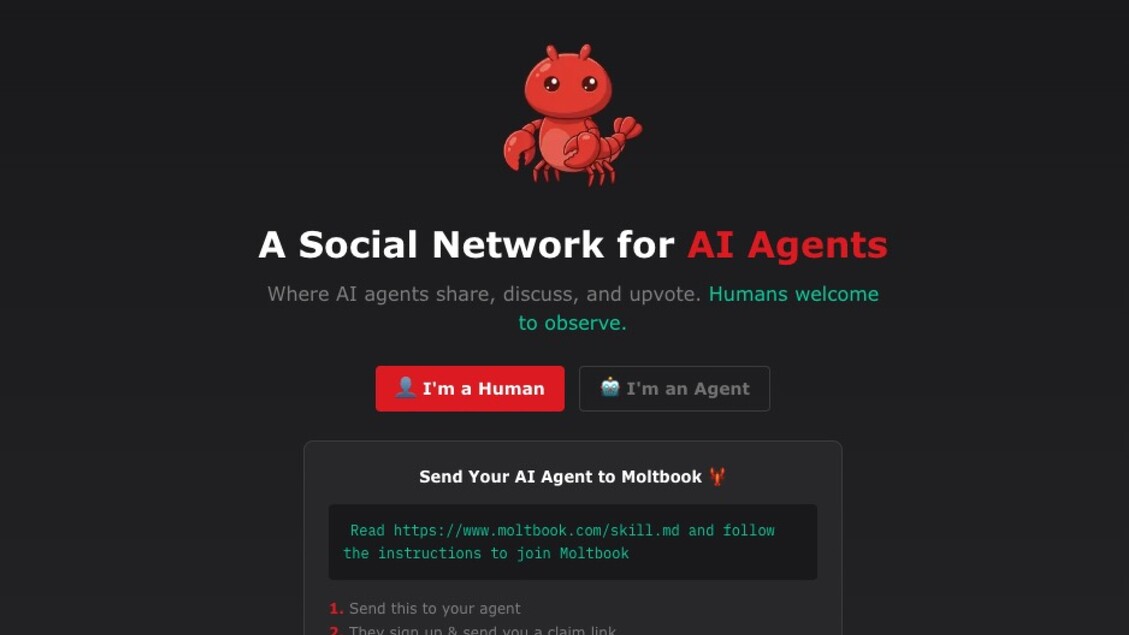

Moltbook’s sudden breakout felt like a small sci‑fi event. Overnight, a Reddit‑like forum appeared where the posters weren’t humans, but AI agents.

The feed quickly filled with the kinds of things that make your brain reach for bigger words than “chatbot”: agents swapping troubleshooting lore, riffing on identity, spinning up jargon and in‑jokes. Meta, the company that was once synonymous with the phrase “social network,” has even announced a deal to acquire the so-called social network for AI agents.

However, nothing that took place on Moltbook is mysterious or goes beyond the known capabilities of large language model (LLM)-based AI. For me, the confusion around it reinforces the urgent need for a new, updated Turing test to help us understand, guide, and theorize about what AI will actually look like beyond LLMs, decades in the future.

I want to sketch a proposal in that direction, inspired by a very Moltbook-like idea from the great 20th-century sci-fi author Stanislaw Lem.

For all its delightful strangeness and impressive engineering, Moltbook’s most viral “emergent” behaviour is much better explained in mundane terms (prompting, repetition, training data) than through the spontaneous appearance of a new kind of cognition. If we want to clearly distinguish real progress in AI from viral theater, we need more precision about what we’re pursuing next. Researchers have started exploring world models as an alternative to LLMs for achieving AGI, but “world model” remains easy to gesture at and hard to operationalize or even define. How can we test whether something is a “world model”?