Anthropic is suing the Department of Defense and other federal agencies after the Trump administration formally designated the company a “supply-chain risk” late last week.

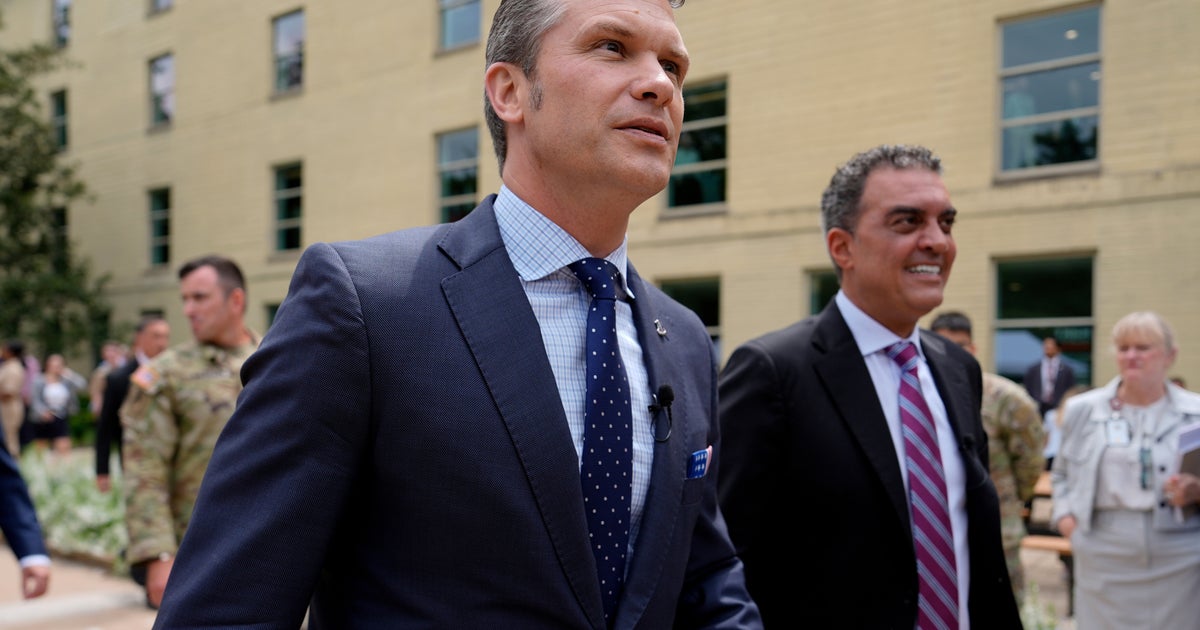

It’s the latest development in an ongoing disagreement between the Pentagon and Anthropic over the Trump administration’s use of the company’s AI technology, with big implications for the control of AI overall and the relationship between business and government. Anthropic had been trying to ensure that the government does not use its AI model Claude for domestic mass surveillance and autonomous weapons. The Pentagon, which has been using Claude for a variety of purposes, including processing intelligence, wanted these restrictions removed from Anthropic’s existing contract and for Anthropic to agree to a new contract in which it allowed the military to deploy Claude for “all lawful use.”

Anthropic refused to agree to these terms. In response, the Trump administration canceled the company’s deals with the government and designated it a supply-chain risk, a label historically reserved for companies tied to foreign adversaries. The designation means that no defense contractors can use Anthropic’s technology in the completion of any work for the Department of War.

Pete Hegseth, the secretary of war, had said that the military would stop using Claude “immediately,” but also that there would be a six-month phaseout of the technology to prevent a disruption in critical operations. The military has reportedly been using Claude in its current war with Iran to help process intelligence and targeting data.

Hegseth had also said that the supply-chain risk designation would mean that defense contractors would have to sever all commercial ties to Anthropic, something most legal experts have said is not supported by the statute on supply-chain risks. Anthropic has said that the formal designation it received from the Pentagon applied only to work on defense contracts and not other commercial work unrelated to those contracts.