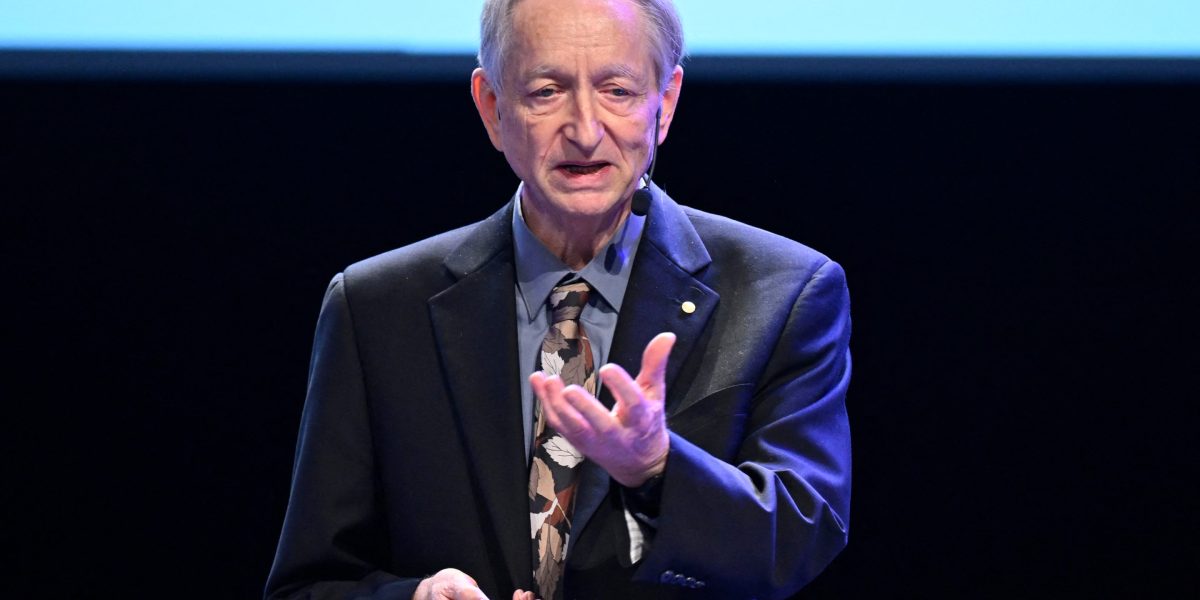

AI won’t just reshape work and markets; Joseph Stiglitz says it will quietly rot the information those systems depend on. As large language models (LLMs) scrape our sarcastic Reddit comments and loud marginal voices on extremist forums, the Nobel laureate warns of a world where everything looks more data‑driven, yet the underlying data is increasingly, well, “garbage.”

“In the case of AI, I think there are a couple of other deeper problems,” the economist told Fortune. “We have not only a problem in the labor market … but there’s another side of what I would call information externalities,” which Stiglitz describes simply as garbage in, garbage out (GIGO).

The risk isn’t just lost jobs; it’s a broken feedback loop between truth and the systems we use to interpret reality, from prediction markets to financial models to political debate. In essence, AI is only as smart as the input it receives: When it keeps scraping less-than-accurate information, its output becomes just as distorted.

In his view, today’s models are built on a faulty bargain: They voraciously scrape journalism, research, and online chatter while undermining the very institutions that produce high‑quality knowledge in the first place. The result, he fears, is a world where people are driven by the online rhetoric they see perpetuated by AI (think of the market downturn prompted by a Citrini Research paper publicizing “ghost GDP” or Matt Shumer’s viral AI doomsday essay) rather than by actual reality.