Hello and welcome to Eye on AI. In this edition…The Pentagon fight with Anthropic raises three crucial questions…OpenAI raises $110 billion in new funding…Meta experiments with an AI shopping assistant…LLMs can identify pseudonymous internet users at scale…data centers on the front lines in the Iran war.

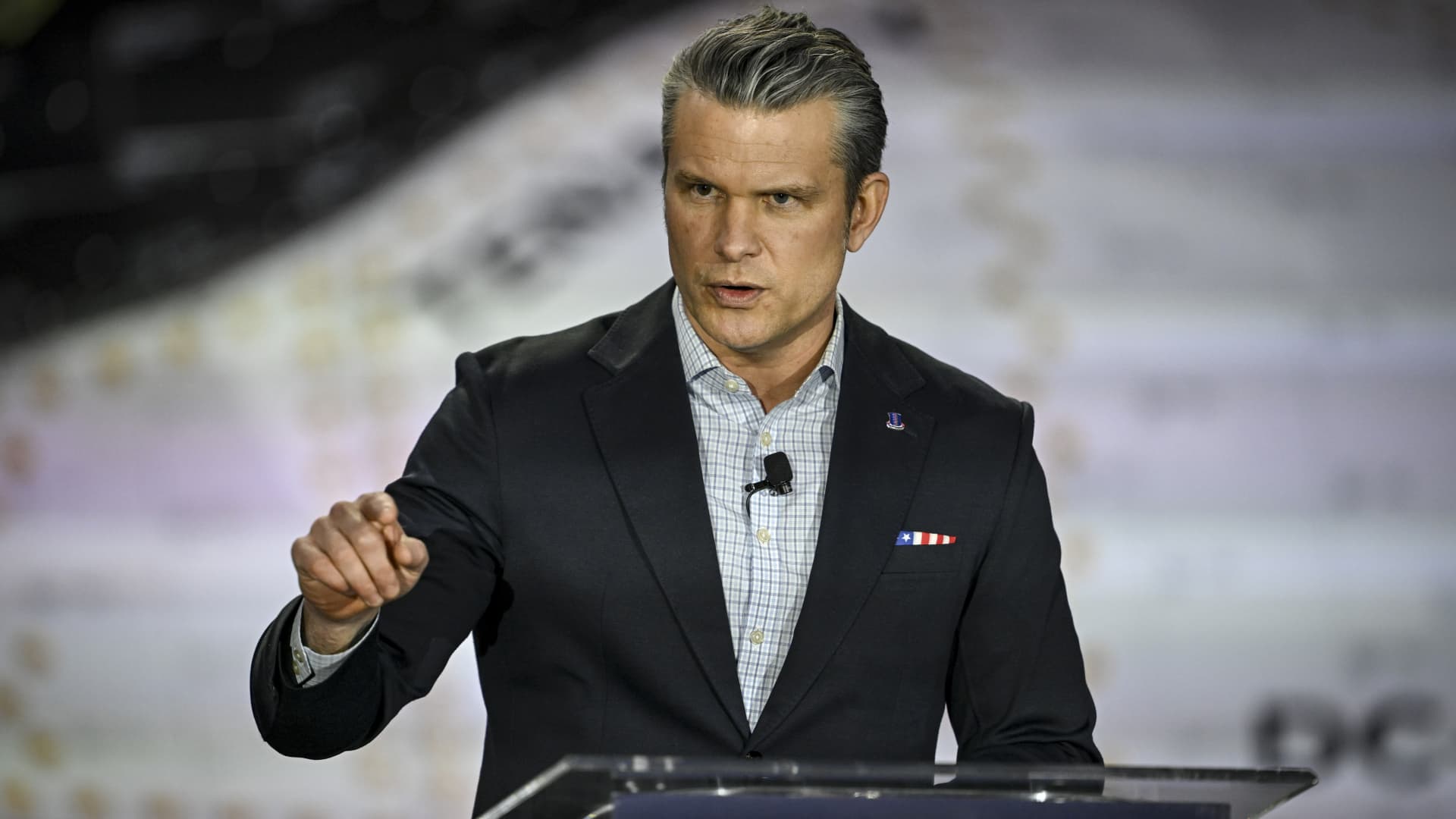

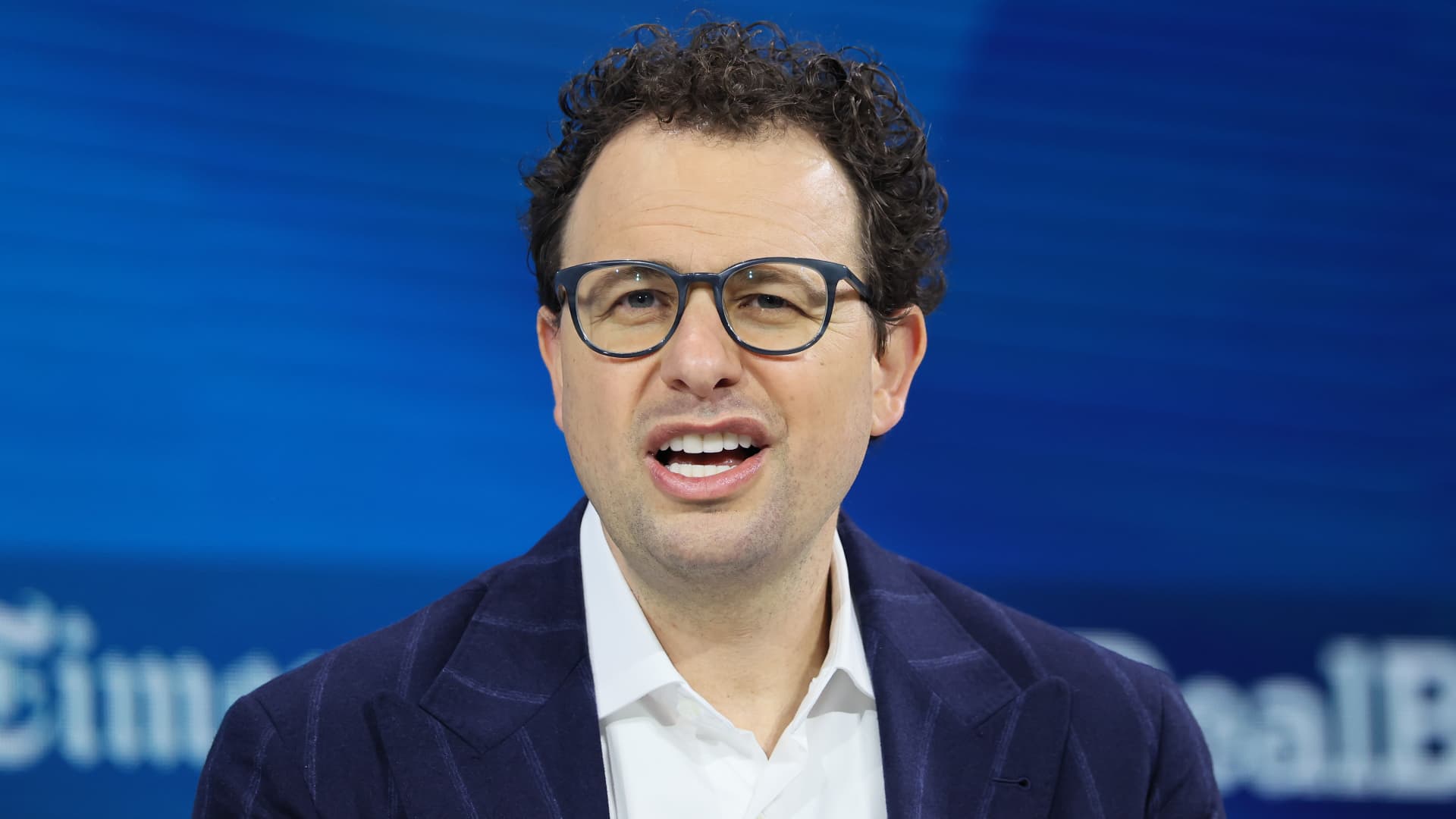

The most important story in AI at the moment, without a doubt, is the fight between the U.S. Department of War and Anthropic. If you haven’t been following the drama, you can catch up by reading the coverage from me and my Fortune colleagues.

This story raises at least three critical questions: Who should have control over how AI is used in a democratic society? How should that control be exercised? What should the consequences be for a company that disagrees with the government’s policy?

Whatever you think of OpenAI CEO Sam Altman and his decision to sweep in and sign a deal with the Pentagon (including a contractual obligation, which Anthropic had refused to accept, to allow the military to use OpenAI’s AI models “for any lawful purpose”), Altman correctly identified what’s at stake in this fight.

In an “Ask Me Anything” session on X over the weekend, Altman said:

“A really important point: we are not elected. We have a democratic process where we do elect our leaders. We have expertise with the technology and understand its limitations, but I think you should be terrified of a private company deciding on what is and isn’t ethical in the most important areas. Seems fine for us to decide how ChatGPT should respond to a controversial question. But I really don’t want us to decide what to do if a nuke is coming towards the US.”