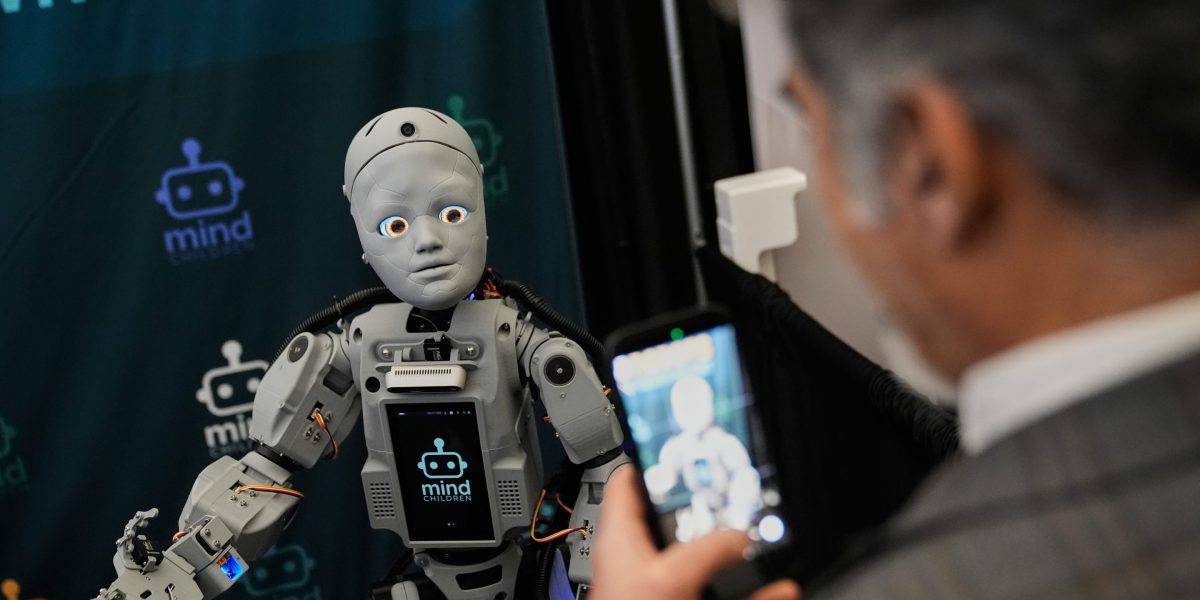

I’m a founder who spends a lot of time around humanoid robots. And while the technology is cutting-edge, most of today’s humanoids look militaristic, aggressively masculine, and plain creepy.

Just look at Tesla’s announcement this week that it is shifting its strategy from producing EVs to producing robots. Its Optimus general-purpose humanoid robot is a prime example of the physical design most of these robots share. These machines may be technically impressive, but they are not systems most people will feel comfortable sharing space with, let alone inviting into their homes.

When it comes to humanoids, the conversation is almost always the same. We talk about what they can do — how fast they move, how precisely they grasp, how much work they can take on. We benchmark performance and reliability, then spiral into debates about dexterity, payload, and battery life.

What we talk about far less is how they behave when things don’t go to plan: when a robot freezes mid-conversation or powers down without warning.

As robots begin to move out of labs and warehouses and into hospitals, care facilities, and homes, that omission starts to look less like an oversight and more like a structural blind spot. Recent research projects that the humanoid robot market will reach 8 billion by 2035, with over 1.4 million units shipped annually. Yet the most critical questions about how these machines will integrate into human spaces remain largely unanswered.