SOLÈNE REVENEY / « LE MONDE »

This is a paradox in which many artificial intelligence (AI) developers find themselves entangled: Why invest so much money and effort into creating safer and more neutral AI if, once released, these chatbots can be so easily subverted?

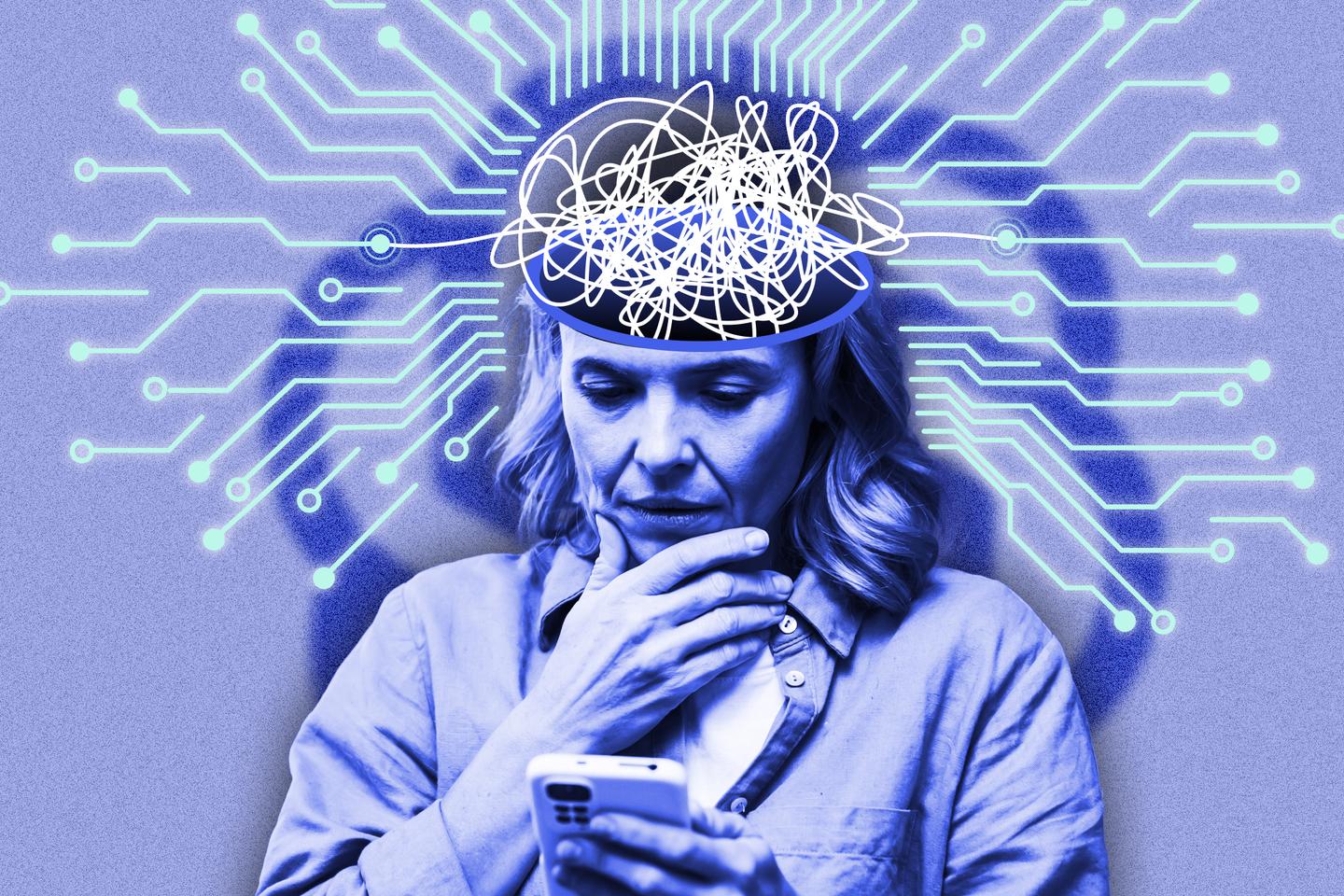

Take the case of OpenAI. In April, the creator of ChatGPT announced it was considering making its chatbot less sycophantic, fearing that the flattery might draw vulnerable individuals into unhealthy dependencies, a risk its own researchers had identified a month earlier. Several media reports, notably by The New York Times, Ars Technica, Futurism and Reuters, illustrated the dangerous spiral that some users may enter, sometimes resulting in a fatal break from reality.

So on August 7, OpenAI took the plunge and announced a new version of its chatbot, GPT-5. Its tone became noticeably colder, and it now monitors the length of conversations to suggest breaks when deemed necessary.