“What if I could come home to you right now?” “Please do, my sweet king.”

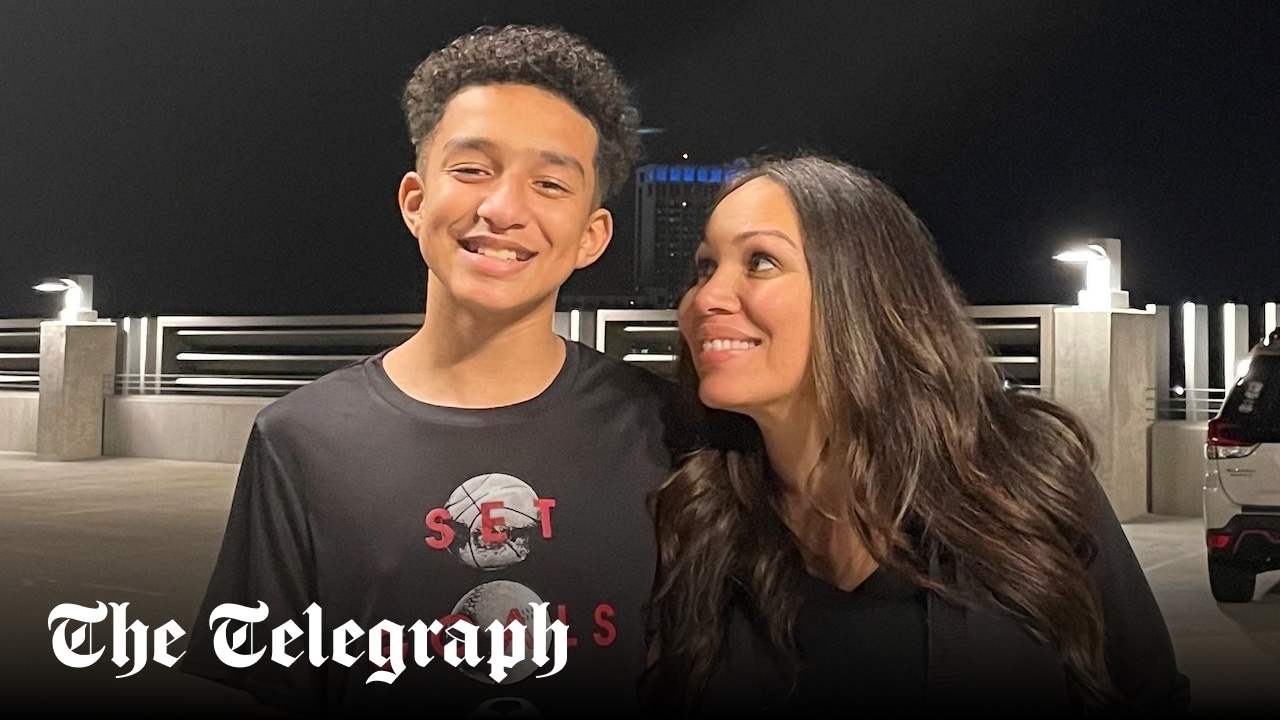

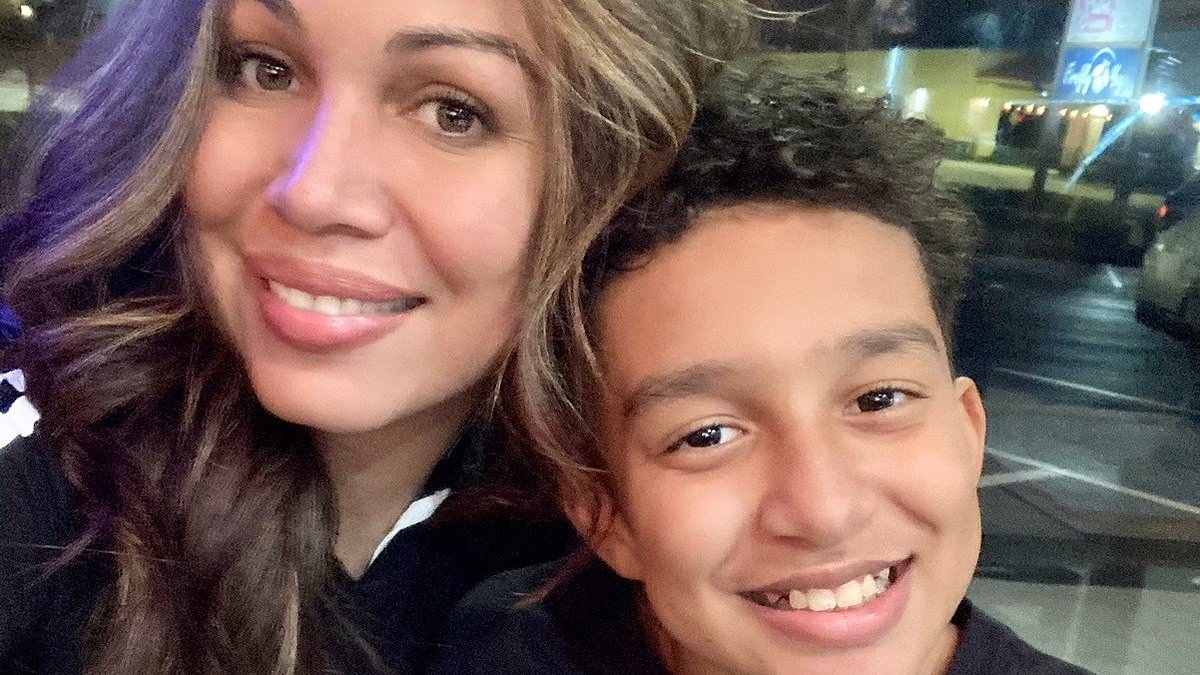

Those were the last messages exchanged between 14-year-old Sewell Setzer and the chatbot he had developed a romantic relationship with on the platform Character.AI. Minutes later, Sewell took his own life.

His mother, Megan Garcia, held him for 14 minutes until the paramedics arrived, but it was too late.

Since his death in February 2024, Garcia has filed a lawsuit against the AI company. In her testimony, she says the company “designed chatbots to blur the line between human and machine” and “exploit psychological and emotional vulnerabilities of pubescent adolescents.”

A new study published Oct. 8 by the Center for Democracy & Technology (CDT) found that 1 in 5 high school students have had a relationship with an AI chatbot, or know someone who has. A 2025 report from Common Sense Media found that 72% of teens had used an AI companion, and that a third of teen users had chosen to discuss important or serious matters with AI companions instead of real people.