New research shows highly inconsistent performance on a variety of physical reasoning tasks.

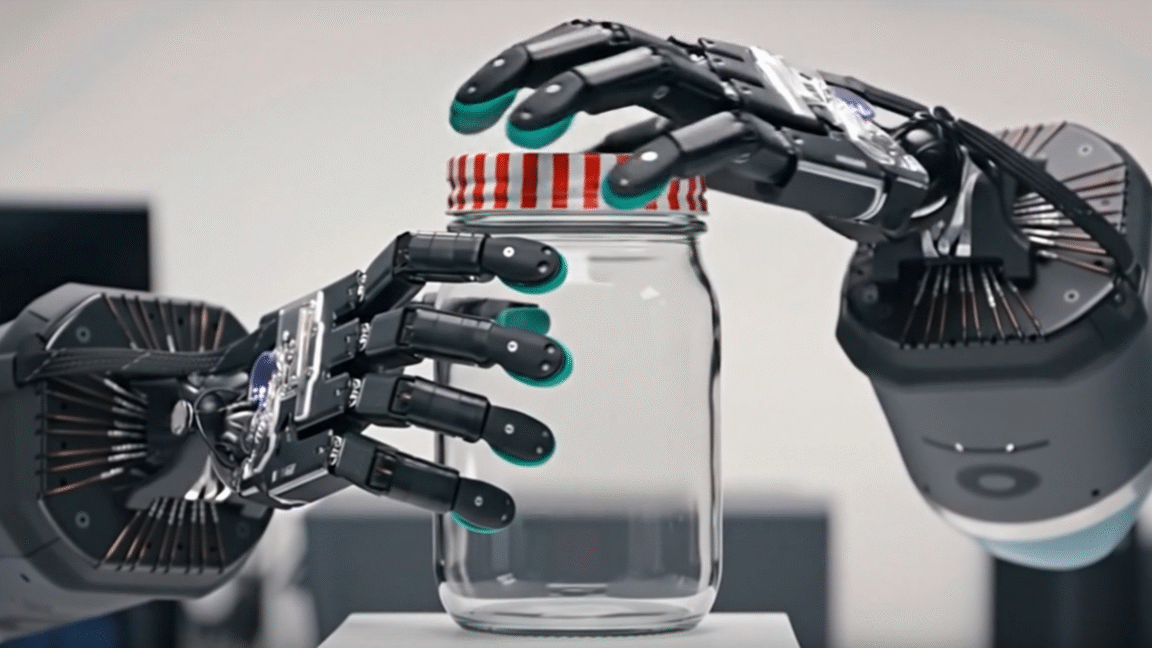

Over the last few months, many AI boosters have grown increasingly interested in generative video models and their apparent ability to show at least limited emergent knowledge of the physical properties of the real world. That kind of learning could underpin a robust version of a so-called "world model," which would represent a major breakthrough in generative AI's practical, real-world capabilities.

Recently, researchers at Google DeepMind tried to add some scientific rigor to the question of how well video models can actually learn about the real world from their training data. In the bluntly titled paper "Video Models are Zero-shot Learners and Reasoners," the researchers used Google's Veo 3 model to generate thousands of videos designed to test its abilities across dozens of tasks related to perceiving, modeling, manipulating, and reasoning about the real world.