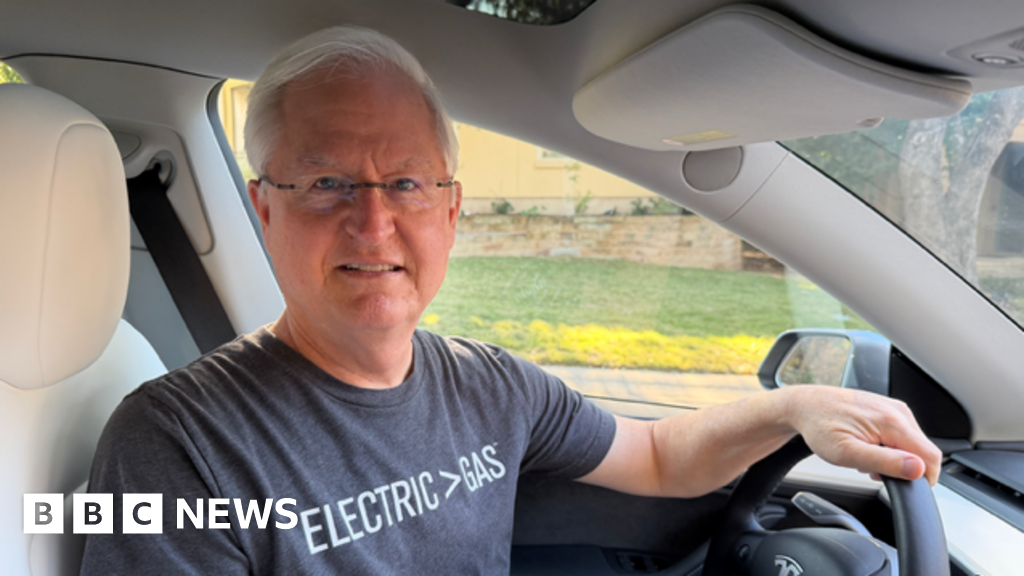

SINCE TESLA LAUNCHED its Full Self-Driving (FSD) feature in beta in 2020, the company’s owner’s manual has been clear: Contrary to the name, cars using the feature can’t drive themselves.

Tesla’s driver assistance system is built to handle plenty of road situations—stopping at stop lights, changing lanes, steering, braking, turning. Still, “Full Self-Driving (Supervised) requires you to pay attention to the road and be ready to take over at all times,” the manual states. “Failure to follow these instructions could cause damage, serious injury or death.”

Now, however, new in-car messaging urges drivers who are drifting between lanes or feeling drowsy to turn on FSD, a prompt that experts say could confuse drivers and encourage them to use the feature in an unsafe way. “Lane drift detected. Let FSD assist so you can stay focused,” reads the first message, which was included in a software update and spotted earlier this month by a hacker who tracks Tesla development.