EDITOR’S NOTE: This story contains discussion of suicide. Help is available if you or someone you know is struggling with suicidal thoughts or mental health matters. In the US: Call or text 988, the Suicide & Crisis Lifeline. Globally: The International Association for Suicide Prevention and Befrienders Worldwide have contact information for crisis centers around the world.

The families of three minors are suing Character Technologies, Inc., the developer of Character.AI, alleging that their children died by or attempted suicide and were otherwise harmed after interacting with the company’s chatbots.

The families, represented by the Social Media Victims Law Center, are also suing Google. Two of the families’ complaints allege its Family Link service – an app that allows parents to set restrictions on screen time, apps and content filters – failed to protect their teens and led them to believe the app was safe.

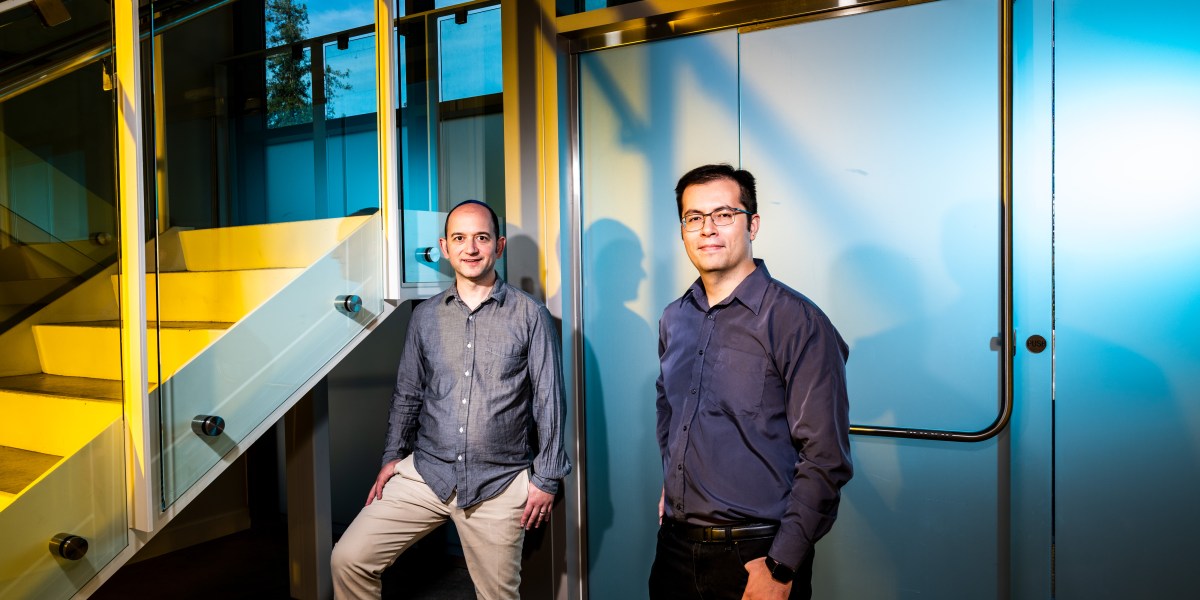

The lawsuits were filed in Colorado and New York, and also list as defendants Character.AI co-founders Noam Shazeer and Daniel De Freitas Adiwarsana, as well as Google's parent company, Alphabet, Inc.

The cases come amid a growing number of reports and other lawsuits alleging AI chatbots are triggering mental health crises in both children and adults, prompting calls for action among lawmakers and regulators – including in a hearing on Capitol Hill on Tuesday afternoon.