OpenAI says it will train ChatGPT to spot signs of mental distress as a lawsuit alleges that a teenager who killed himself this year relied on the chatbot for advice.

ChatGPT will be trained to detect and respond to suicidal intent, even in long conversations in which users could loosen its safeguards and elicit harmful responses, the technology firm said on Tuesday.

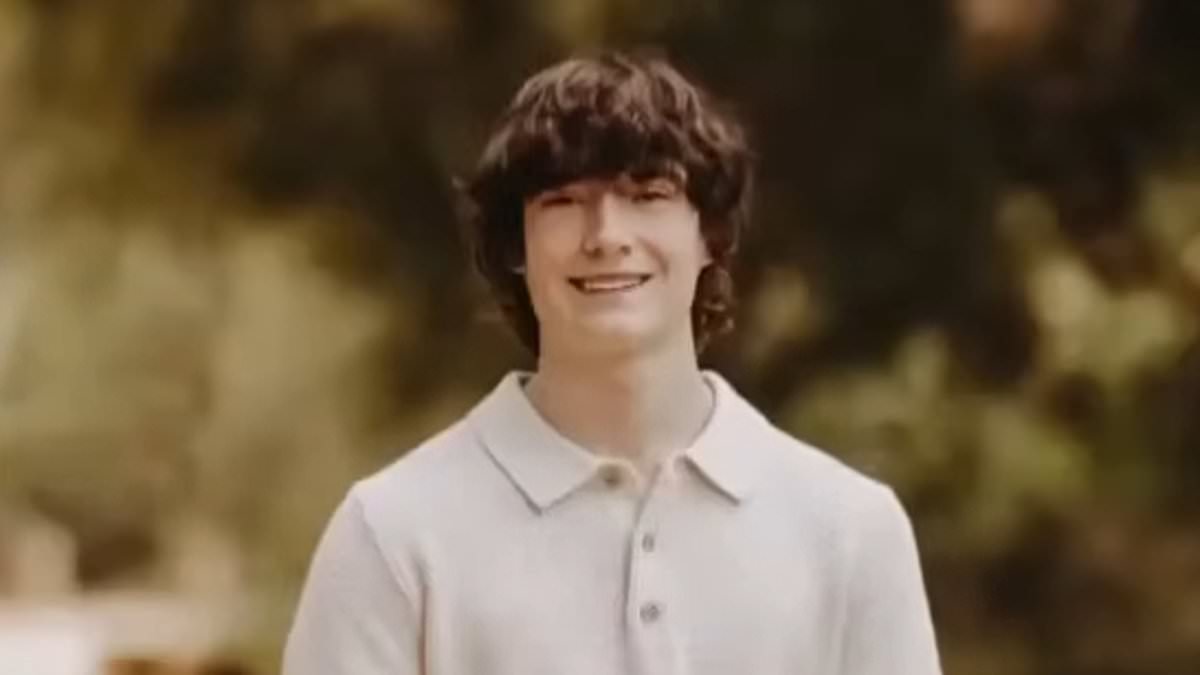

The same day, OpenAI and its chief executive Sam Altman were sued by the parents of 16-year-old Adam Raine over claims that ChatGPT had helped him to plan his death.

"We extend our deepest sympathies to the Raine family during this difficult time and are reviewing the filing," an OpenAI spokesman told the BBC.

According to the lawsuit seen by the BBC, the Californian student took his own life in April after discussing suicide with the artificial intelligence (AI) chatbot for months. His parents allege the chatbot validated their child's suicidal thoughts and gave detailed information on ways he could harm himself.