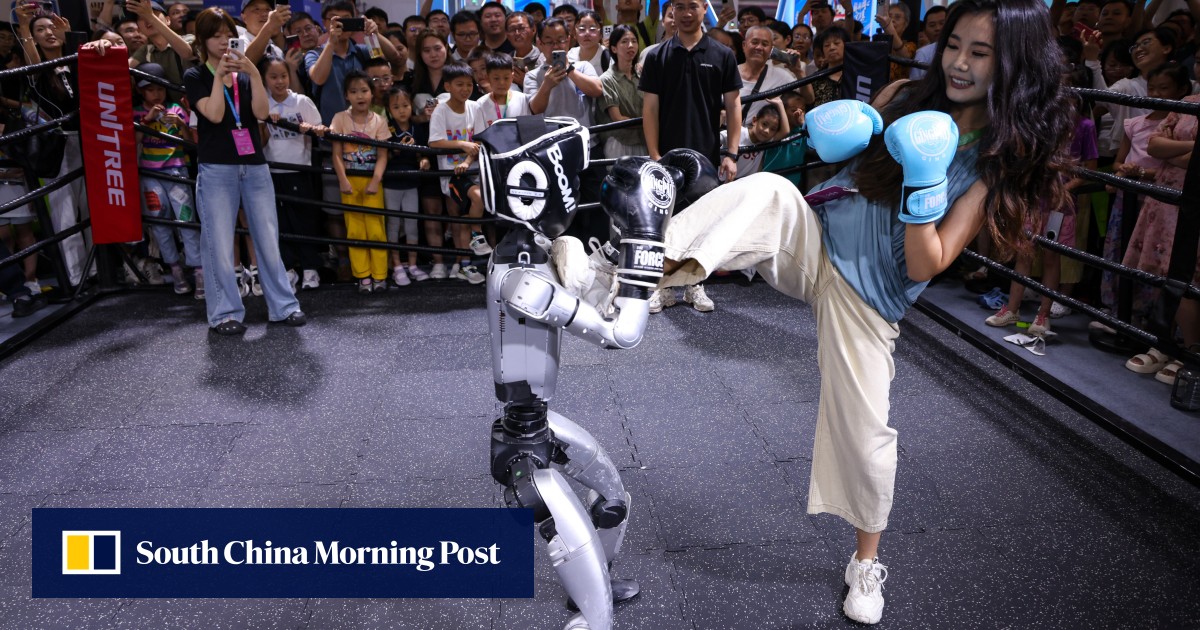

AI could propel advances in embodied intelligence to allow autonomous robots to function in unfamiliar environments, Wang Xingxing says

Wang Xingxing defined this moment as the first time a robot could perform a task, such as cleaning a room or bringing a bottle of water to a specific person, in a venue that it had never been to before.

“If things develop fast, it could happen in the next year or two, or maybe two to three years”, he said on Saturday at the World Robot Conference in Beijing.

Although both robot hardware, such as dexterous hands, and training data were good enough to enable the feat, the crucial element of “AI for embodied intelligence is completely inadequate”, he said.

He had “doubts” about whether popular vision-language-action (VLA) models, which used a rather “dumb” architecture, were up to the task, he said. Although Unitree also used such models, along with reinforcement learning to improve pre-trained VLAs on downstream tasks, the approach required a lot of optimisation, he said.