An exclusive conversation with the company’s heads of research about AI’s newest frontiers.

OpenAI has given itself a dual mandate. On the one hand, it’s a tech giant rooted in products, including of course ChatGPT, to which people around the world reportedly send 2.5 billion requests each day. On the other, its original mission is to serve as a research lab that will not only create “artificial general intelligence” but also ensure that it benefits all of humanity.

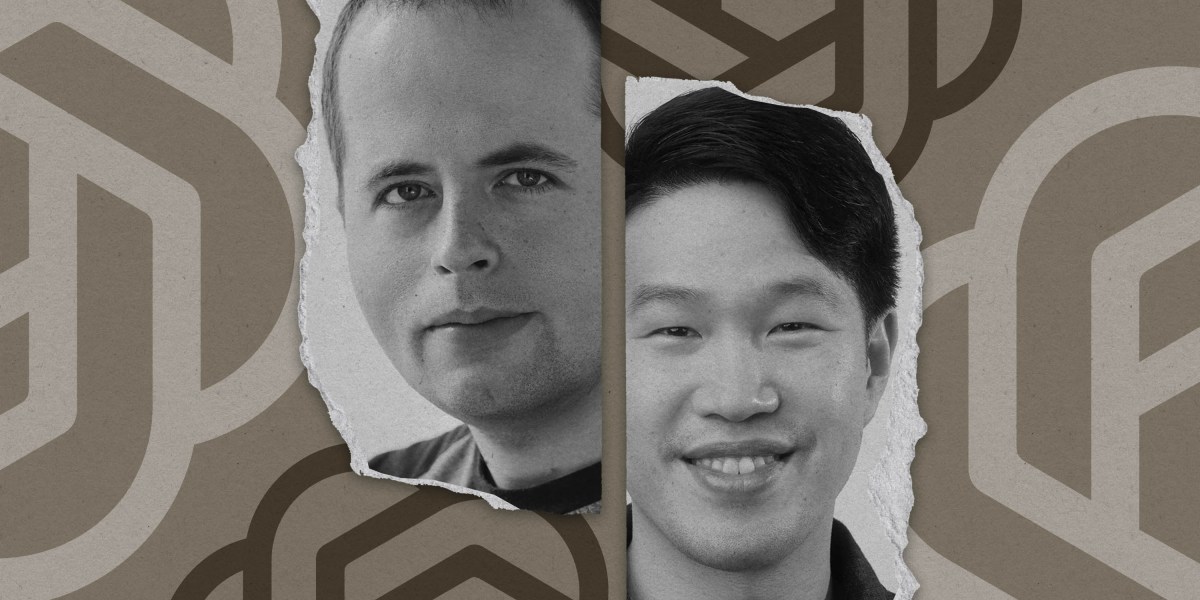

My colleague Will Douglas Heaven recently sat down for an exclusive conversation with the two figures at OpenAI most responsible for pursuing that second ambition: chief research officer Mark Chen and chief scientist Jakub Pachocki. If you haven’t already, you must read his piece.

It provides a rare glimpse into how the company thinks beyond marginal improvements to chatbots and contemplates the biggest unknowns in AI: whether it could someday reason like a human, whether it should, and how tech companies conceptualize the societal implications.

The whole story is worth reading for all it reveals—about how OpenAI thinks about the safety of its products, what AGI actually means, and more—but here’s one thing that stood out to me.