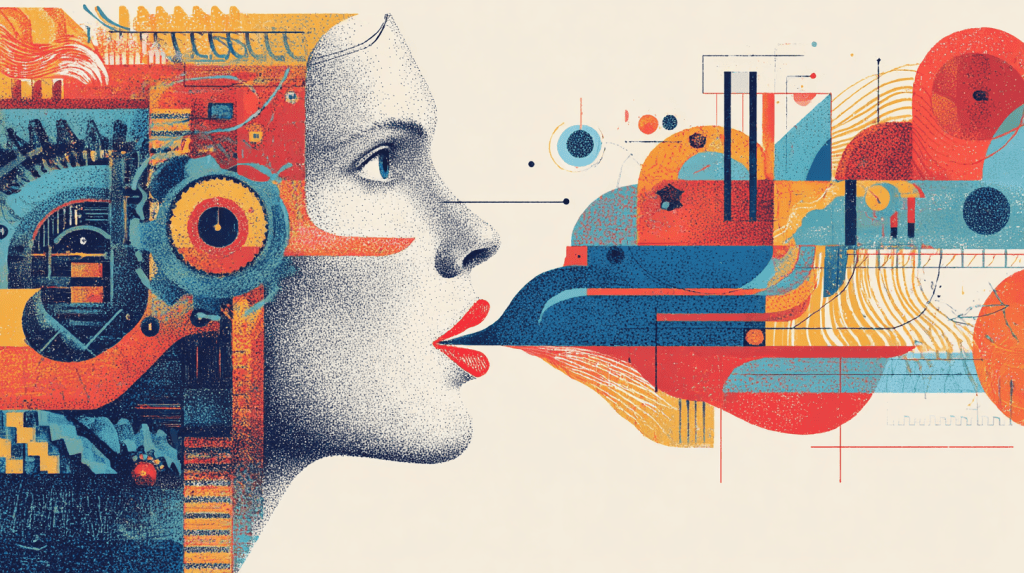

New York-based AI startup Hume has unveiled its latest Empathic Voice Interface (EVI) conversational AI model, EVI 3 (pronounced “Evee” Three, like the Pokémon character), targeting everything from powering customer support systems and health coaching to immersive storytelling and virtual companionship.

EVI 3 lets users create their own voices by talking to the model (it’s voice-to-voice, or speech-to-speech). According to Hume, it aims to set a new standard for naturalness, expressiveness, and “empathy”: that is, how users perceive the model’s understanding of their emotions and its ability to mirror or adjust its own responses in tone and word choice.

Designed for businesses, developers, and creators, EVI 3 expands on Hume’s previous voice models by offering more sophisticated customization, faster responses, and enhanced emotional understanding.

Individual users can interact with it today through Hume’s live demo on its website and iOS app, but developer access through Hume’s proprietary application programming interface (API) will become available in “the coming weeks,” according to a blog post from the company.